Explains MCP—an open standard that enables stateful, secure AI-tool integrations, simplifies discovery, and reduces vendor lock-in.

MCP, or Model Context Protocol, is an open standard that simplifies how AI systems connect with tools like databases, APIs, and file systems. Think of it as the USB-C for AI - it replaces the need for custom integrations by providing a universal interface. Launched in November 2024 by Anthropic and now managed by the Linux Foundation, MCP is widely adopted by major tech companies like OpenAI, Google, and Microsoft.

Here’s why it matters:

- Standardization: Instead of building custom integrations for each AI model and tool, MCP reduces the complexity from N × M integrations to N + M. Developers create one server per tool, compatible with all MCP-supported systems.

- Stateful Interactions: Unlike traditional function calls or REST APIs, MCP supports ongoing, two-way communication, maintaining context across sessions.

- Rapid Growth: By early 2026, MCP has over 20,000 servers, 97 million monthly SDK downloads, and adoption by 28% of Fortune 500 companies.

- Security: MCP includes features like user approval, OAuth 2.1, and sandboxing for safe and controlled interactions.

MCP is especially useful for AI agents requiring dynamic tool discovery, multi-step workflows, or integration with multiple AI providers. It’s transforming how developers build and scale AI systems while reducing vendor lock-in and improving efficiency.

In this guide, you’ll learn how MCP works, how to build your first server, and how it compares to alternatives like REST APIs and function calling.



What Is MCP and What Problem Does It Solve?

Model Context Protocol (MCP) is an open standard designed to simplify how AI applications (clients) interact with external tools and data sources (servers). Instead of requiring custom integrations for every AI model and resource combination, MCP provides a single, standardized interface. This allows AI systems to work seamlessly with databases, file systems, APIs, and other tools. Let's dive into the key challenges that MCP addresses.

MCP leverages JSON-RPC 2.0, enabling a dynamic handshake where servers advertise their tools and data capabilities to clients. This eliminates the need for manual setup and configuration.

The Problem: Connecting AI to Tools Before MCP

Before MCP, developers faced the "N × M integration problem." Imagine having N different AI models and M different AI tools - each combination required its own custom integration. For instance, switching from Claude to GPT-4 meant reworking the entire integration layer, which was both time-consuming and error-prone.

Adding to the complexity, each AI framework had its own proprietary tool-calling formats. OpenAI, Anthropic, and LangChain all used different specifications. This lack of standardization led to fragmented systems that were difficult to maintain. Moreover, communication was often one-way: the AI would make a request, receive a response, and stop there. There was no way to maintain context or state across multiple interactions, making it hard to create advanced workflows.

How MCP Works

MCP simplifies the integration process by reducing the problem from N × M to N + M. Developers only need to build one server per resource (like GitHub or PostgreSQL), and it will work with all MCP-compatible clients, such as Claude Desktop, Cursor, or VS Code. This approach supports stateful, bidirectional sessions, which means the AI and tools can maintain an ongoing connection - critical for workflows that require context across multiple steps.
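To make the arithmetic concrete, here is a small sketch of the two counting models. The counts used (5 clients, 20 tools) are made-up illustration values, not figures from any survey.

```python
def custom_integrations(clients: int, tools: int) -> int:
    """Point-to-point approach: every client needs its own adapter per tool."""
    return clients * tools


def mcp_integrations(clients: int, tools: int) -> int:
    """MCP approach: one client implementation each, plus one server per tool."""
    return clients + tools


clients, tools = 5, 20  # hypothetical counts
print(custom_integrations(clients, tools))  # 100
print(mcp_integrations(clients, tools))     # 25
```

The gap widens as either side grows, which is why the saving matters most for teams that support several AI clients at once.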

MCP uses two transport layers for communication:

- Stdio (standard input/output): Ideal for local development and IDE tools, requiring no network setup and ensuring process isolation.

- Streamable HTTP: Replacing the older Server-Sent Events (SSE) in the March 2025 update, this method offers improved reliability for production environments.

MCP servers provide three main building blocks:

- Tools: Actions the AI can perform, such as query_database or send_email.

- Resources: Read-only data that provides context, like file contents or API documentation.

- Prompts: Reusable templates that guide the AI in using tools effectively.

MCP Architecture: Clients, Servers, and Components

MCP operates on a three-layer architecture designed to separate responsibilities and allow seamless integration of AI tools. At the top is the Host, which is the primary AI application (examples include Claude Desktop, Cursor AI, or VS Code) responsible for managing the user interface and overall experience. Within the host, a Client establishes a dedicated, stateful session with a single MCP server, so a host that talks to several servers runs one client per server. The Server, in turn, provides the capabilities (tools, resources, and prompts) that the client can access. This separation has supported rapid growth: thousands of servers are now available, and enterprise adoption is widening. The layered setup also lets the same tools plug into many different AI platforms.

Clients, Servers, and Tools

When an AI host like Claude Desktop connects to a GitHub server, the client begins by negotiating protocol versions and capabilities through an initialize request. This request uses JSON-RPC 2.0 message formatting to establish compatibility. The server responds with its available features, which may include tools, resource subscriptions, or sampling options.
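The handshake can be pictured as a pair of JSON-RPC 2.0 messages. The sketch below builds them as plain Python dictionaries. The protocol version string, the client and server names, and the advertised capabilities are illustrative assumptions, and a real SDK constructs these messages for you.

```python
import json

# Assumed protocol revision string; real clients send the newest one they support.
PROTOCOL_VERSION = "2025-06-18"


def build_initialize_request(request_id: int) -> dict:
    """Client -> server: propose a protocol version and declare client capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def build_initialize_response(request: dict) -> dict:
    """Server -> client: echo the request id and advertise server capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "protocolVersion": request["params"]["protocolVersion"],
            "capabilities": {"tools": {}, "resources": {"subscribe": True}},
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        },
    }


request = build_initialize_request(1)
response = build_initialize_response(request)
print(json.dumps(request, indent=2))
print(json.dumps(response, indent=2))
```

After receiving the response, the client sends a notifications/initialized notification, and normal requests such as tools/list can begin.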

Tools serve as the action layer within MCP. These are predefined functions, described using JSON Schemas, that the AI model can invoke based on user intent. Examples of such tools might include query_database or send_email. Once the handshake completes, the client requests the tool list with tools/list; the model then reads the tool descriptions and selects the best tool for the task, depending on the context of the conversation.
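As a rough illustration, a tool descriptor and the matching tools/call request might look like the following. The query_database schema and the helper names are hypothetical, and the required-field check is a deliberately small stand-in for full JSON Schema validation.

```python
# A tool descriptor as it might appear in a tools/list result (hypothetical tool).
QUERY_DATABASE_TOOL = {
    "name": "query_database",
    "description": "Run a read-only SQL query and return the rows",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}


def missing_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return required argument names absent from a call (not full schema validation)."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


def build_tool_call(request_id: int, tool: dict, arguments: dict) -> dict:
    """Build a tools/call request, refusing calls that omit required arguments."""
    missing = missing_arguments(tool, arguments)
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }


call = build_tool_call(2, QUERY_DATABASE_TOOL, {"sql": "SELECT 1"})
print(call["params"])
```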

Messages between the client and server are transmitted using two primary methods: stdio for local subprocess communication and Streamable HTTP for remote deployments. This dual mechanism supports a variety of deployment scenarios, ensuring flexibility in how MCP operates.
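On the stdio transport, each JSON-RPC message travels as one line of JSON terminated by a newline. The sketch below shows that framing with an in-memory stream standing in for the subprocess pipe; the function names are illustrative, not part of any SDK.

```python
import io
import json
from typing import Iterator, TextIO


def write_message(stream: TextIO, message: dict) -> None:
    """Serialize one message as a single line; json.dumps escapes embedded newlines."""
    stream.write(json.dumps(message) + "\n")
    stream.flush()


def read_messages(stream: TextIO) -> Iterator[dict]:
    """Yield one parsed message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# In-memory stand-in for a subprocess pipe.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "method": "notifications/initialized"})
pipe.seek(0)
received = list(read_messages(pipe))
print([m["method"] for m in received])
```

This framing is also why stray output on stdout is a problem: any line that is not valid JSON breaks the reader on the other side.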

Beyond executing actions, MCP also manages critical context and data through resources and prompts, enhancing the AI's ability to provide accurate and relevant responses.

Resources, Prompts, and Context

Resources are read-only datasets identified by URIs, such as file://config.json or db://schema. Unlike tools, resources are controlled by the host, which decides when to fetch and provide this contextual information to the AI model during a conversation.

Prompts are reusable templates with dynamic arguments that simplify complex workflows. They act as shortcuts for repetitive tasks, like reviewing code or summarizing logs, ensuring consistency and efficiency in operations.

MCP maintains context through persistent, two-way connections. This allows the model to reference previous JSON results, track specific IDs for follow-up interactions, and maintain awareness over extended conversations. Additionally, clients can subscribe to resources, enabling real-time updates when data changes, which helps keep the AI's context up-to-date.

Security is a core aspect of the MCP design. Servers operate independently, meaning they cannot access other server connections or the full conversation history unless the host explicitly shares it. Furthermore, tool executions typically require user approval, reinforcing a human-in-the-loop approach that helps prevent unauthorized actions or misuse of tools.

Build Your First MCP Server: Step-by-Step Tutorial

Want to create your own MCP server? This guide will walk you through building a simple file system server that allows AI assistants to read files on your machine. It’s a great starting point to understand how MCP connects AI models to practical tools. This integration is becoming a staple among developer advocacy tools for building community-driven AI extensions.

Setting Up Your Development Environment

First, decide whether to use TypeScript (requires Node.js 18+) or Python (requires version 3.10+). Depending on your choice, install the necessary libraries:

- For TypeScript: `npm install @modelcontextprotocol/sdk zod`. The zod library is essential for schema validation so the AI can interpret tool parameters.

- For Python: `pip install mcp`. Alternatively, you can install the fastmcp framework for quicker development.

If you’re using TypeScript, make these adjustments:

- Add "type": "module" in your package.json.

- Set module and moduleResolution to Node16 or NodeNext in tsconfig.json.

For Python users, no extra configuration is needed.

Important: Always send diagnostics to stderr. Keep stdout reserved for JSON-RPC messages: any extra output on stdout corrupts the message stream, and the client will typically fail to parse it or drop the connection.
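For a Python server, one way to follow this rule is to route all logging to stderr explicitly. This is a minimal sketch using only the standard library; the logger name is an arbitrary choice.

```python
import logging
import sys

# StreamHandler defaults to stderr, but passing it explicitly documents the intent.
handler = logging.StreamHandler(sys.stderr)
handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))

logger = logging.getLogger("file-system-server")  # hypothetical logger name
logger.addHandler(handler)
logger.setLevel(logging.INFO)
logger.propagate = False  # avoid duplicate output through the root logger

logger.info("server starting")        # written to stderr
print("debug note", file=sys.stderr)  # also safe
# print("debug note")                 # would land on stdout and corrupt the stream
```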

Creating a File System Server

Here’s an example of a TypeScript server that includes a read_file tool:

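```typescript
import { Server } from "@modelcontextprotocol/sdk/server/index.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import {
  CallToolRequestSchema,
  ListToolsRequestSchema,
} from "@modelcontextprotocol/sdk/types.js";
import { z } from "zod";
import fs from "fs/promises";
import path from "path";

// Zod schema used to validate the arguments when the tool is called.
const ReadFileArgs = z.object({
  path: z.string().describe("Absolute path to the file"),
});

const server = new Server(
  { name: "file-system-server", version: "1.0.0" },
  { capabilities: { tools: {} } }
);

// Advertise the tool. Handlers are registered against the SDK's request
// schemas, and inputSchema must be plain JSON Schema rather than a Zod object.
server.setRequestHandler(ListToolsRequestSchema, async () => ({
  tools: [
    {
      name: "read_file",
      description: "Read the complete contents of a text file",
      inputSchema: {
        type: "object",
        properties: {
          path: { type: "string", description: "Absolute path to the file" },
        },
        required: ["path"],
      },
    },
  ],
}));

// Dispatch tool calls by name and validate arguments before touching the disk.
server.setRequestHandler(CallToolRequestSchema, async (request) => {
  if (request.params.name === "read_file") {
    const args = ReadFileArgs.parse(request.params.arguments);
    const filePath = path.resolve(args.path);
    const content = await fs.readFile(filePath, "utf-8");
    return { content: [{ type: "text", text: content }] };
  }
  throw new Error(`Unknown tool: ${request.params.name}`);
});

// Connect over stdio; stdout is now reserved for JSON-RPC messages.
const transport = new StdioServerTransport();
await server.connect(transport);
```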

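If you prefer Python, the FastMCP class that ships with the official mcp package makes the example even simpler:

```python
from pathlib import Path

from mcp.server.fastmcp import FastMCP

# FastMCP builds the tool schema from the type hints and the docstring.
mcp = FastMCP("file-system-server")


@mcp.tool()
def read_file(path: str) -> str:
    """Read the complete contents of a text file"""
    return Path(path).read_text()


if __name__ == "__main__":
    # Serve over stdio so a local client can launch this file as a subprocess.
    mcp.run(transport="stdio")
```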

Use .describe() in Zod schemas or Python docstrings to provide clear explanations for parameters. This helps the AI understand how to use your tools effectively.

Once your server is ready, you can test its functionality with an MCP client.

Testing Your Server with an AI Client

Before connecting your server to tools like Claude or Cursor, test it using MCP Inspector, a browser-based tool for interactive JSON-RPC calls. Run the following command:

npx @modelcontextprotocol/inspector

This tool allows you to send test calls and inspect raw JSON-RPC messages without needing to restart your AI client.

To connect your server to Claude Desktop, update the claude_desktop_config.json file. This file is located at:

- macOS: ~/Library/Application Support/Claude/

- Windows: %APPDATA%\Claude\

- Linux: ~/.config/Claude/

Add your server configuration like so:

```json
{
  "mcpServers": {
    "file-system": {
      "command": "node",
      "args": ["/absolute/path/to/dist/index.js"]
    }
  }
}
```
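If you manage several machines, you may prefer to script this change rather than edit the file by hand. The helper below is a hypothetical sketch that merges one entry into a config dictionary without disturbing existing servers; reading and writing the actual file is left out, and add_server is not part of any MCP tooling.

```python
import json


def add_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of the config with one more entry under mcpServers."""
    servers = dict(config.get("mcpServers", {}))
    servers[name] = {"command": command, "args": list(args)}
    return {**config, "mcpServers": servers}


existing = {
    "mcpServers": {
        "github": {"command": "npx", "args": ["-y", "@modelcontextprotocol/server-github"]}
    }
}
updated = add_server(existing, "file-system", "node", ["/absolute/path/to/dist/index.js"])
print(json.dumps(updated, indent=2))
```

Returning a new dictionary instead of mutating the input makes it easy to diff the result against the original before saving.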

Restart Claude Desktop completely. If the connection is successful, you’ll notice a hammer icon in the chat input area, listing your available tools.

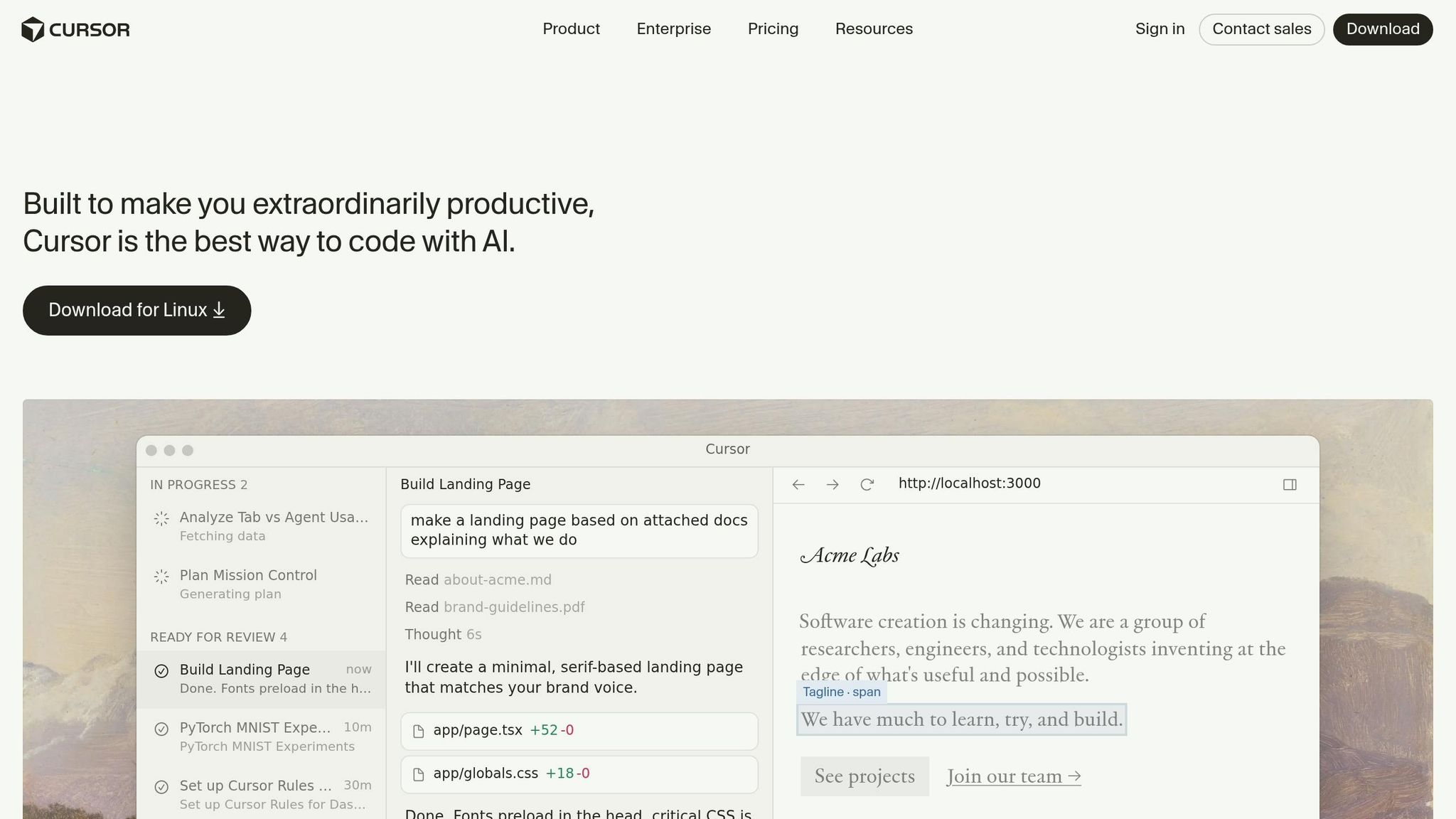

For Cursor IDE, navigate to Settings > MCP or create a .cursor/mcp.json file in your project root.

Tip: Always use absolute paths in configuration files to avoid loading errors. If something goes wrong, check the client logs for details:

- macOS: ~/Library/Logs/Claude/mcp*.log

Testing with MCP Inspector ensures your server is ready for use with production tools like Claude Desktop and Cursor.

Using MCP with Claude, Cursor, and Other AI Tools

You can connect your MCP server to various AI tools, as most major AI development platforms now support MCP with similar setup processes.

Connecting MCP to Claude

Claude Desktop uses a single JSON configuration file for setup. The location of this file depends on your operating system:

| Operating System | Configuration File Path |
|---|---|
| macOS | ~/Library/Application Support/Claude/claude_desktop_config.json |
| Windows | %APPDATA%\Claude\claude_desktop_config.json |
| Linux | ~/.config/Claude/claude_desktop_config.json |

To connect a server, open the configuration file and add it under the mcpServers object. For instance, to integrate a GitHub server, your configuration might look like this:

```json
{
  "mcpServers": {
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": {
        "GITHUB_PERSONAL_ACCESS_TOKEN": "${GITHUB_TOKEN}"
      }
    }
  }
}
```

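Security Tip: Avoid hardcoding API keys in the configuration file. Where your client supports it, use the "${VARIABLE_NAME}" syntax to refer to environment variables that are resolved at startup. Support for this expansion varies by client and version, so confirm that the variable is actually resolved before relying on it.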

After saving the file, restart Claude Desktop completely. If the connection is successful, a hammer icon will appear in the chat input area, allowing you to access tools from connected servers.

If troubleshooting is needed, check logs at ~/Library/Logs/Claude/mcp*.log on macOS or %APPDATA%\Claude\logs\mcp*.log on Windows.

For users of Claude Code CLI, you can add servers using this command:

claude mcp add github -- npx -y @modelcontextprotocol/server-github

This records the server in Claude Code's own configuration automatically; the file it writes to depends on the scope you choose and on the CLI version. For project-specific tools, create a .mcp.json file in the project root, enabling team-wide tool sharing via version control.

Once Claude is set up, you can move on to configuring your Cursor IDE for MCP integration.

Using MCP in Cursor IDE

To integrate MCP with Cursor IDE, you have two options. The easiest is through the Settings UI. Use the Cmd/Ctrl + , shortcut, go to Tools & MCP, and click Add new MCP server. Enter the server's name, type, and command details.

For more flexibility, create a mcp.json file. Place this file in ~/.cursor/mcp.json for global tools or in your project root as .cursor/mcp.json for project-specific setups. Here's an example configuration for a PostgreSQL server:

```json
{
  "mcpServers": {
    "postgres": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-postgres", "postgresql://localhost/mydb"],
      "env": {
        "PGPASSWORD": "${env:DB_PASSWORD}"
      }
    }
  }
}
```

Unlike Claude Desktop, Cursor typically detects configuration changes without needing a restart. To confirm the connection, open Cursor's chat (Cmd/Ctrl + L) and ask, "What MCP tools do you have available?" The AI will list all connected tools and their capabilities.

Troubleshooting Tip: If you encounter a "command not found" error, use the absolute path to npx (e.g., /usr/local/bin/npx on macOS) instead of relying on your shell's PATH.

Note: While Cursor doesn't yet natively support MCP "Prompts" (reusable workflow templates), you can manually add prompt text in Cursor Settings → Rules to guide the AI on using specific tools.

Beyond Claude and Cursor

MCP also integrates with VS Code (via Copilot), Windsurf, JetBrains IDEs, and OpenAI's Agents SDK. As of early 2026, the PulseMCP directory lists over 8,600 community-built MCP servers, and the Claude Directory tracks more than 60 servers optimized for developer workflows.

"MCP servers are the plugin system for Claude Code, and they're transforming how developers build software with AI."

- Claude Directory

For team-based or production setups, consider using Streamable HTTP for remote MCP servers. This transport method, which replaced the older Server-Sent Events (SSE) standard in March 2025, is now the preferred approach for reliable deployments.

MCP vs. Function Calling vs. REST APIs: When to Use What

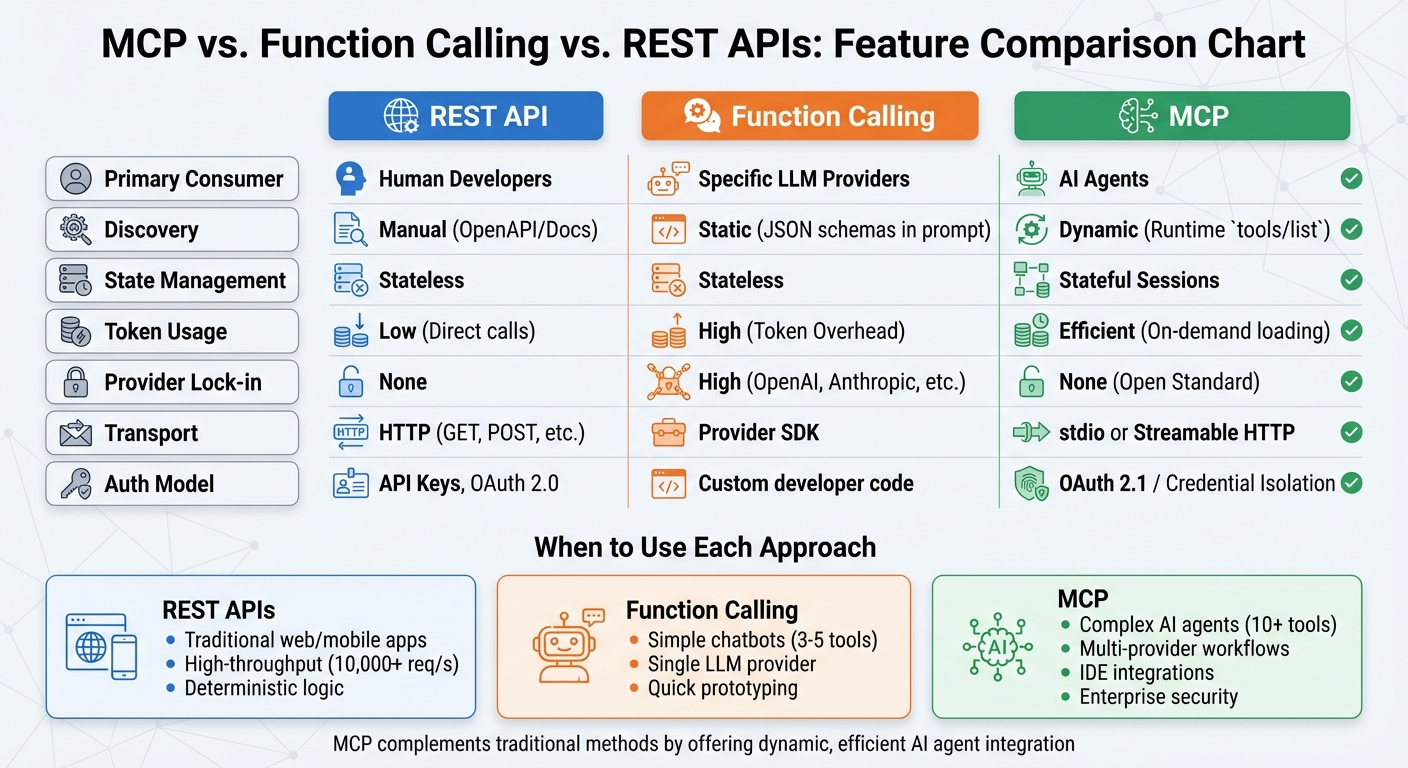

MCP vs Function Calling vs REST APIs: Feature Comparison Chart

Choosing the right interface for your project depends on your specific requirements and the type of system you're building. MCP, REST APIs, and function calling each serve different purposes and excel in distinct scenarios. MCP isn't designed to replace REST APIs or function calling but to address the needs of AI agents that dynamically discover and utilize tools at runtime. Meanwhile, REST APIs cater to human developers, and function calling works best for single-provider chatbots with a limited set of tools.

The key difference lies in how these approaches handle session state and discovery. REST APIs and function calling are stateless, requiring the full context to be passed with every interaction. Function calling, in particular, sends entire tool definitions with each request, which can lead to significant token overhead as the number of tools increases. MCP, on the other hand, maintains stateful sessions and uses a tools/list method for dynamic tool discovery, avoiding the need for hardcoded schemas and reducing overhead.

"REST APIs are designed for traditional software clients. MCP is designed for AI agents." - Nikhil Tiwari, Product Development Lead

MCP also introduces a new integration model. It establishes an N + M ecosystem, where any compatible client can connect to any server through a standardized interface. This means developers only need to write custom code once per tool, rather than for every possible client-server combination. By early 2026, this model has led to widespread adoption, with over 13,000 MCP servers on GitHub and 97 million monthly SDK downloads.

Comparison Table: MCP, Function Calling, and APIs

Here's a quick breakdown of the differences between these approaches:

| Feature | REST API | Function Calling | MCP |
|---|---|---|---|
| Primary Consumer | Human Developers | Specific LLM Providers | AI Agents |
| Discovery | Manual (OpenAPI/Docs) | Static (JSON schemas in prompt) | Dynamic (Runtime tools/list) |
| State Management | Stateless | Stateless | Stateful Sessions |
| Token Usage | Low (Direct calls) | High (Token Overhead) | Efficient (On-demand loading) |
| Provider Lock-in | None | High (OpenAI, Anthropic, etc.) | None (Open Standard) |
| Transport | HTTP (GET, POST, etc.) | Provider SDK | stdio or Streamable HTTP |
| Auth Model | API Keys, OAuth 2.0 | Custom developer code | OAuth 2.1 / Credential Isolation |

Choosing the Right Tool for the Job

- REST APIs: Ideal for traditional web or mobile applications, high-throughput data fetching (10,000+ requests per second), and deterministic logic written by human developers.

- Function Calling: Best for simple chatbots with a small number of tools (3–5) tied to a single LLM provider, or for quick prototyping.

- MCP: Suited for complex AI agents requiring 10+ tools, multi-provider workflows, IDE integrations (like Cursor or VS Code), or enterprise-grade security using OAuth 2.1.

MCP complements traditional methods by offering a more dynamic and efficient approach. For example, when wrapping a REST API with MCP, you can create outcome-focused tools that combine multiple API calls. This reduces token usage and improves performance by minimizing latency.
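A sketch of what an outcome-focused tool can look like: one tool answers the question the model actually has ("what does this customer owe?") by combining two upstream calls. The two fetch functions are hypothetical stand-ins for REST requests and return canned data so the example runs on its own.

```python
from typing import Callable


# Hypothetical stand-ins for two REST calls; a real server would use an HTTP client.
def fetch_customer(customer_id: str) -> dict:
    return {"id": customer_id, "name": "Ada Example"}


def fetch_open_invoices(customer_id: str) -> list[dict]:
    return [
        {"invoice": "INV-1", "amount_due": 120.0},
        {"invoice": "INV-2", "amount_due": 80.0},
    ]


def get_customer_balance(
    customer_id: str,
    get_customer: Callable[[str], dict] = fetch_customer,
    get_invoices: Callable[[str], list[dict]] = fetch_open_invoices,
) -> dict:
    """One outcome-focused tool: two upstream calls, one compact result for the model."""
    customer = get_customer(customer_id)
    invoices = get_invoices(customer_id)
    return {
        "customer": customer["name"],
        "open_invoices": len(invoices),
        "total_due": sum(item["amount_due"] for item in invoices),
    }


print(get_customer_balance("c-42"))
```

The model makes one tool call and receives a small summary, instead of issuing two calls and carrying both raw payloads in its context.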

Security in MCP: Authorization, Sandboxing, and Trust

Security in the Model Context Protocol (MCP) is essential because the protocol directly connects AI models to tools and data, which makes robust protection a necessity. MCP's approach to security aligns with its goal of creating a trusted integration framework for AI tools.

MCP takes a "human-in-the-loop" approach to security. It requires users to give explicit consent before any tools are activated or data is accessed. As outlined in the specification:

"Users must explicitly consent to and understand all data access and operations".

This means that AI clients must present clear approval prompts before executing commands, accessing files, or querying databases.

Since June 2025, MCP servers have adopted the role of OAuth Resource Servers. They now use access tokens from Identity Providers like Auth0, Okta, or Keycloak to enforce precise permission scopes. For example, instead of granting broad permissions like crm.*, they use specific scopes such as crm.read. This minimizes the damage that could result from a compromised session. A July 2025 study of over 2,500 MCP plugins revealed many lacked proper privilege separation, emphasizing the importance of strict access controls. To further enhance security, the protocol supports sender-constrained tokens through mechanisms like mTLS or DPoP, which help prevent replay attacks.
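The difference between broad and narrow scopes is easy to see in code. This is a minimal sketch of an exact-match scope check, assuming the access token has already been verified and its scopes extracted; the function names are illustrative.

```python
def has_scope(granted: set[str], required: str) -> bool:
    """Exact-match check: 'crm.read' does not imply 'crm.write'; wildcards are not honored."""
    return required in granted


def authorize_tool_call(granted: set[str], required: str) -> None:
    """Raise PermissionError unless the token carries the scope the tool needs."""
    if not has_scope(granted, required):
        raise PermissionError(f"token lacks required scope: {required}")


token_scopes = {"crm.read"}  # scopes taken from an already verified access token
authorize_tool_call(token_scopes, "crm.read")  # allowed, returns None
try:
    authorize_tool_call(token_scopes, "crm.write")
except PermissionError as exc:
    print(exc)
```

Refusing to expand wildcards on the server side means a token minted with crm.* grants nothing by accident.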

User Approval and Permission Controls

Every tool execution in MCP requires the user's explicit consent. The host application must display the intended operation and wait for user approval before proceeding. This ensures that users remain in control of all interactions.
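In a host application, this gate can be as simple as a wrapper that refuses to run a tool until an approver says yes. The sketch below is one possible shape, not the design of any particular client; console_approver is a hypothetical terminal prompt, and the demonstration uses stub approvers so it runs without user input.

```python
from typing import Any, Callable


def call_with_approval(
    tool_name: str,
    arguments: dict,
    execute: Callable[[dict], Any],
    approve: Callable[[str, dict], bool],
) -> Any:
    """Show the intended operation to the approver and run it only on an explicit yes."""
    if not approve(tool_name, arguments):
        raise PermissionError(f"user declined {tool_name}")
    return execute(arguments)


def console_approver(tool_name: str, arguments: dict) -> bool:
    """Hypothetical approver for a terminal host; a GUI host would show a dialog."""
    answer = input(f"Allow {tool_name} with {arguments}? [y/N] ")
    return answer.strip().lower() == "y"


# Non-interactive demonstration with a stub approver that always agrees.
result = call_with_approval(
    "send_email", {"to": "a@example.com"}, lambda args: "sent", lambda name, args: True
)
print(result)
```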

During the initial handshake, the client and server agree on supported security features, such as authentication methods and sampling controls. Clients can also define boundaries for server access, such as specific directories or URIs, using the "Roots" mechanism. While this provides coordination, it doesn't offer absolute security guarantees. Additionally, servers must seek user approval for any sampling requests they initiate. A newer feature called Elicitation, introduced in the June 2025 specification revision, allows servers to request additional details from users during workflows.

Sandboxing and Isolation

Beyond user consent, hardened MCP deployments rely on sandboxing and isolation to limit the impact of a breach. These are practices for server authors and operators rather than guarantees of the protocol itself. High-risk operations, like code execution or database queries, should run in isolated worker processes. For example, a Node.js server can use child_process.fork() with a stripped environment so the worker cannot read the parent's memory or secrets. When dealing with remote servers, containerization is critical. Running MCP servers in minimal Docker images (such as Alpine) under non-root users, with read-only filesystems and all Linux capabilities dropped (cap_drop: ALL), significantly reduces the impact of a compromise. Additionally, resource constraints, such as 128MB memory limits and 30-second execution timeouts, help prevent denial-of-service conditions caused by runaway processes.
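The same idea can be sketched in Python with the standard library: run the risky work in a child process that inherits no environment variables and is killed after a timeout. This is an illustration under assumptions, not a complete sandbox. Memory caps need an OS-specific mechanism (containers, cgroups, or resource limits) that is not shown, and on Windows a few variables such as SYSTEMROOT usually have to be kept for the child to start.

```python
import os
import subprocess
import sys

TIMEOUT_SECONDS = 30  # mirrors the timeout mentioned above


def run_isolated(code: str, timeout: float = TIMEOUT_SECONDS) -> str:
    """Run Python code in a child process with an empty environment and a hard timeout."""
    completed = subprocess.run(
        [sys.executable, "-c", code],
        env={},               # the child inherits none of the parent's variables
        capture_output=True,
        text=True,
        timeout=timeout,      # raises subprocess.TimeoutExpired on overrun
        check=True,           # raises CalledProcessError on a non-zero exit
    )
    return completed.stdout


os.environ["DEMO_SECRET"] = "s3cr3t"  # pretend secret held by the parent
output = run_isolated("import os; print(os.environ.get('DEMO_SECRET'))")
print(output.strip())  # the child cannot see the parent's variable
```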

Path sandboxing is another key measure. Tools that accept file paths must verify that the resolved path lies inside a designated SANDBOX_ROOT directory. Compare whole path components rather than raw string prefixes, since /sandbox-evil also starts with /sandbox. Stripping environment variables from child processes keeps sensitive data, like API keys, out of reach. Input validation also plays a crucial role: treat all model-generated inputs as untrusted, and use tools like Zod to enforce strict schemas with numeric bounds and string length limits.
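A minimal sketch of the path check in Python, assuming a hypothetical sandbox root of /srv/mcp-sandbox. It resolves the requested path first, then compares path components so that sibling directories sharing a string prefix are rejected.

```python
from pathlib import Path

SANDBOX_ROOT = Path("/srv/mcp-sandbox")  # hypothetical root directory


def resolve_in_sandbox(user_path: str, root: Path = SANDBOX_ROOT) -> Path:
    """Resolve a model-supplied path and reject anything outside the sandbox root."""
    root = root.resolve()
    candidate = (root / user_path).resolve()  # collapses ".." and follows symlinks
    # is_relative_to compares whole components, so "/srv/mcp-sandbox-evil" is
    # rejected even though it shares a string prefix with the root.
    if not candidate.is_relative_to(root):
        raise PermissionError(f"path escapes sandbox: {user_path}")
    return candidate


print(resolve_in_sandbox("notes/todo.txt"))
for attempt in ("../../etc/passwd", "/etc/passwd", "../mcp-sandbox-evil/x"):
    try:
        resolve_in_sandbox(attempt)
    except PermissionError as exc:
        print(exc)
```

Path.is_relative_to requires Python 3.9 or later, which fits the 3.10+ requirement stated earlier in this guide.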

Grizzly Peak Software emphasized this dual role of AI models:

"The AI model is both the user and the attack surface: it interprets untrusted natural language, decides which tools to call, constructs the arguments, and receives the results".

An example of the risks involved was a critical SQL injection flaw in Anthropic's SQLite-based MCP server. This vulnerability was copied over 5,000 times before the repository was archived in May 2025, highlighting the dangers of supply chain vulnerabilities. Using parameterized queries is essential to mitigate SQL injection risks from model-generated inputs.
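The difference between string-built and parameterized SQL is easiest to see side by side. The sketch below uses Python's built-in sqlite3 module with an in-memory database; the table and function names are invented for the demonstration and are unrelated to the archived server.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])


def find_user_unsafe(name: str) -> list[tuple]:
    """Vulnerable: the input is spliced into the SQL text."""
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user(name: str) -> list[tuple]:
    """Safe: the driver binds the value, so it is never parsed as SQL."""
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


malicious = "x' OR '1'='1"
print(len(find_user_unsafe(malicious)))  # 2: the injected condition matches every row
print(len(find_user(malicious)))         # 0: the whole string is treated as a name
```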

| Security Layer | Best Practice | Implementation Example |
|---|---|---|
| Filesystem | Path Validation | if (!resolved.startsWith(SANDBOX_ROOT + path.sep)) throw Error |
| Execution | Process Isolation | Use child_process.fork() with stripped env |
| Network | Outbound Control | Use allowlists for specific domains/IPs |
| Identity | Scoped Access | OAuth 2.1 with granular scopes (e.g., crm.read) |
| Resource | Limits | Set memory caps (128MB) and timeouts (30s) |

For transport-level security, considerations vary based on server type. Local servers using stdio rely on OS-level user permissions, while remote MCP servers using Streamable HTTP require TLS 1.3 and mandatory authentication to protect data from interception. A "fail closed" approach is critical - if token verification or cryptographic checks fail, access is denied by default.
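"Fail closed" means the only path to access is a positive verification result. The sketch below illustrates the pattern with an HMAC signature check from the standard library, used here as a simplified stand-in for real OAuth token validation; the key and function names are assumptions for the example.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-only-key"  # hypothetical; load real keys from a secret store


def sign(payload: bytes, key: bytes = SECRET_KEY) -> str:
    """Return the hex HMAC-SHA256 signature of the payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def is_authorized(payload: bytes, signature: object, key: bytes = SECRET_KEY) -> bool:
    """Return True only when verification positively succeeds; every error path denies."""
    try:
        expected = sign(payload, key)
        return hmac.compare_digest(expected, signature)  # constant-time comparison
    except Exception:
        return False  # fail closed: malformed or missing input means no access


good = sign(b"tools/call")
print(is_authorized(b"tools/call", good))      # valid signature
print(is_authorized(b"tools/call", "forged"))  # wrong signature
print(is_authorized(b"tools/call", None))      # missing signature
```

Note that the exception handler returns False rather than re-raising or defaulting to True, so an unexpected input type can never be mistaken for a valid credential.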

The Future of MCP: A Universal Standard for AI Tools

MCP is on track to become the go-to connector for AI integrations, simplifying how tools and applications work together. Think of it like the USB for AI. Before USB came along, every device had its own unique cable and port. MCP does the same for AI - eliminating the need for countless custom connections. Instead, developers can create a single MCP server for a tool, and it’ll work seamlessly with any MCP-compatible AI host. This approach paves the way for a dynamic ecosystem and widespread adoption across industries.

As of early 2026, MCP’s growth has been staggering. The MCP SDK now sees over 97 million monthly downloads. The PulseMCP directory lists more than 8,600 available MCP servers, and GitHub features over 8,000 MCP-related repositories. Around 28% of Fortune 500 companies have already integrated MCP servers into their AI systems, and Gartner predicts that 75% of API gateway vendors will support MCP by the end of 2026.

What’s even more impressive is the speed of its adoption. MCP became an industry standard in just 14 months - a timeline described as unmatched for technical protocols. Major tech leaders like Anthropic, OpenAI, Google, Microsoft, and AWS have all embraced MCP. In December 2025, Anthropic handed MCP over to the Agentic AI Foundation under the Linux Foundation, ensuring it remains neutral and accessible to everyone.

Contributing to the MCP Ecosystem

With its rapid rise, MCP’s open-source community is already shaping its future. Developers have endless opportunities to contribute by creating MCP servers for their tools or sharing them on platforms like GitHub and PulseMCP [44,13]. The ecosystem already includes servers for databases, SaaS platforms, cloud services, and development tools, but there’s still room for integrations tailored to niche industries and specialized workflows.

The beauty of MCP lies in its simplicity: developers write the integration once, and it works with any MCP-compatible AI, whether that’s Claude, ChatGPT, Cursor, or something new down the road [44,13]. This concept, often referred to as "write once, run anywhere", is similar to how the Language Server Protocol revolutionized code editor integrations. As GitHub Developer Advocate Kedasha Kerr put it:

"MCP is the LSP (Language Server Protocol) of LLMs".

How MCP Reduces Vendor Lock-In

One of MCP’s standout features is its ability to reduce vendor lock-in, giving users the freedom to switch providers without reworking their entire AI stack. Its vendor-neutral design separates the AI model, user interface, and tools, making transitions seamless. For example, moving from one AI provider to another doesn’t require changes to your MCP servers [44,45]. This flexibility is made possible through standardized discovery - MCP servers automatically advertise their capabilities to hosts, removing the need for manual setup or hardcoding. Compare this to traditional function calling, where switching providers often means rewriting code and updating APIs.

Here’s how MCP stacks up against custom integrations:

| Feature | MCP | Custom Integration |
|---|---|---|
| Portability | Works across any MCP-compatible host | App-specific and model-specific |
| Discovery | Built-in; servers advertise capabilities | Manual; must be hard-coded |
| Vendor Lock-in | Low; easy to switch AI models | High; integrations are often proprietary |
| Maintenance | Build once, update once | High (multiple implementations) |

Yuval Avidani summed it up well:

"Anthropic's MCP is becoming the USB-C of AI integrations" and "MCP is the most important infrastructure development for AI agents since the transformer".

Conclusion

MCP is reshaping how developers link AI models with tools and data sources. Instead of creating custom integrations for every AI client and tool, developers can now build a single MCP server that works effortlessly with platforms like Claude, Cursor, and ChatGPT. This approach eliminates the complex N×M integration problem, which previously demanded tailored solutions for every combination.

The numbers speak volumes: 97 million monthly SDK downloads, over 13,000 GitHub servers, and adoption by 28% of Fortune 500 companies. Gartner projects that by the end of 2026, 75% of API gateway vendors will support MCP, further underscoring its growing presence in the industry.

MCP goes beyond simplifying integrations. It tackles critical security issues with features like human-in-the-loop approval, OAuth 2.1 compatibility, and scoped permissions. By decoupling tool logic from specific AI providers, MCP eliminates vendor lock-in, allowing developers to switch AI models without rewriting server code. These features create a reliable and secure foundation for AI integration.

The December 2025 donation of MCP to the Linux Foundation's Agentic AI Foundation ensures vendor neutrality and community-driven governance, reinforcing trust in its long-term development and adoption.

FAQs

Do I need MCP if I already have REST APIs?

If you're already working with REST APIs, deciding whether to use MCP depends on what you need from your AI integrations. REST APIs are great for static, application-specific data exchanges, but they require manual configuration for every integration. On the other hand, MCP supports dynamic, standardized, and stateful interactions between AI models and tools. For workflows that prioritize flexibility and scalability in AI, MCP can be a game-changer. However, for simpler setups, it might not be essential.

What’s the difference between MCP tools, resources, and prompts?

Tools refer to active features the AI can use to perform specific tasks, like running a database query or sending an email. Resources, on the other hand, are passive sources of information, such as files or web pages, that supply context but can't be directly executed. Prompts are reusable templates designed to guide the AI's use of tools and resources, making workflows more efficient. Combined, these elements shape how AI functions within the MCP ecosystem.

How do I securely deploy an MCP server in production?

To ensure a secure deployment of an MCP server in a production environment, start with a dependable transport: Streamable HTTP for remote servers, or stdio for servers that run locally alongside the client. Strengthen your setup by implementing robust authentication such as OAuth 2.1 and enforcing strict permissions to control access.

Add layers of security by validating all incoming inputs, applying rate limiting to prevent abuse, and maintaining detailed audit logs for monitoring activities. Use sandboxing to isolate different environments, and tools like Docker for streamlined and secure deployment.

Safeguard your data through TLS encryption, set up firewalls to block unauthorized access, and stay on top of regular security updates. Since the MCP standard continues to evolve, conducting periodic security audits is essential to keep your deployment aligned with the latest best practices.
