Practical guide to running SQLite in production: performance, limits, tuning (WAL/PRAGMA), replication tools and migration signs.

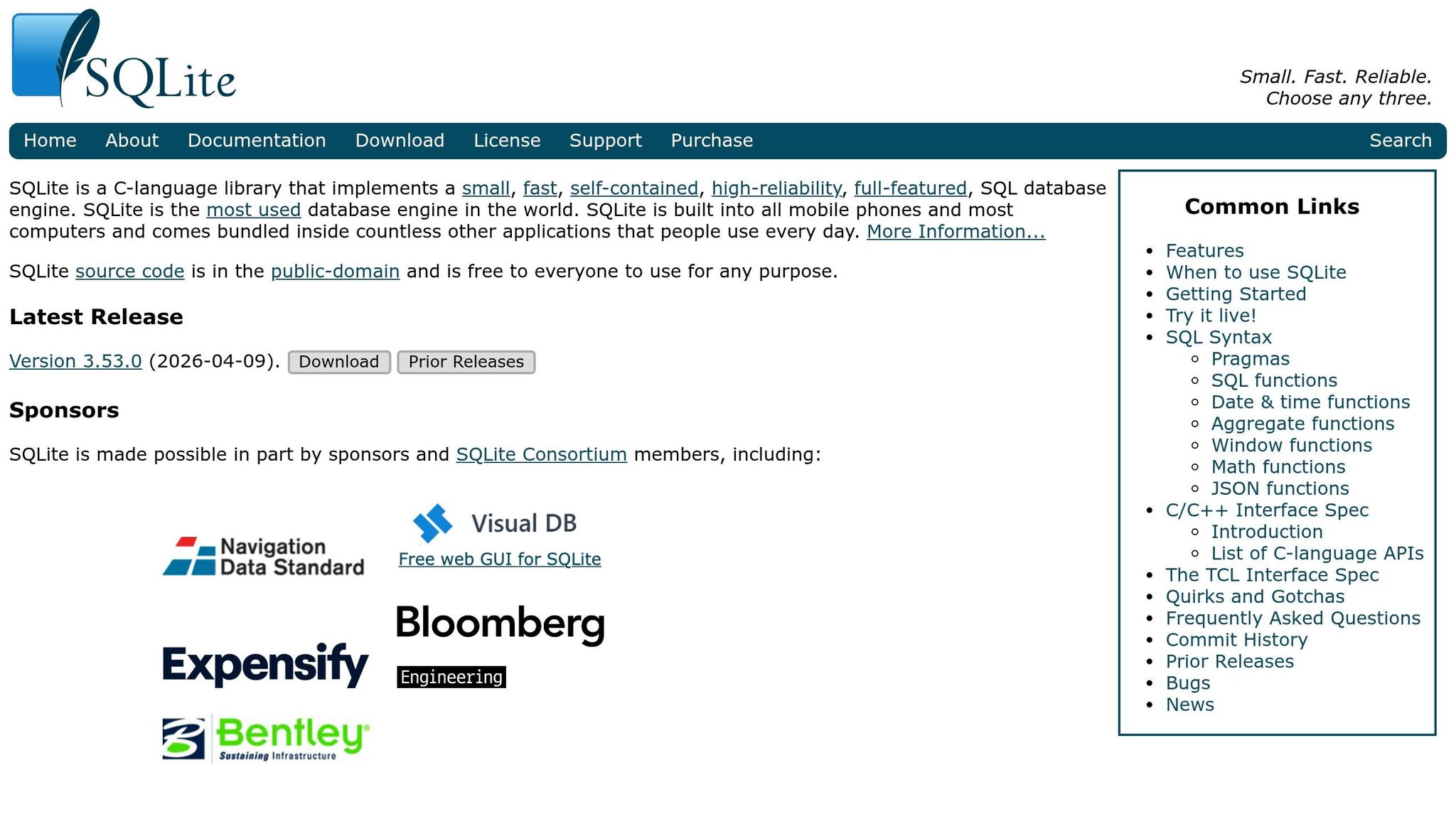

SQLite has evolved far beyond its reputation as a lightweight database for prototyping. In 2026, it powers production systems handling tens of thousands of daily visitors and billions of transactions with minimal complexity. Thanks to advancements in hardware like NVMe SSDs and tools like Litestream, Turso, and Cloudflare D1, SQLite is now a viable option for production workloads, especially for read-heavy applications, single-server setups, and edge computing.

Key Takeaways:

- Performance: SQLite offers sub-millisecond reads and can handle 10,000–50,000 writes per second on modern hardware.

- Simplicity: No server to manage; the database is a single file, making deployment easy.

- Use Cases: Ideal for read-heavy apps, local-first/offline apps, and database-per-tenant models.

- Limitations: Struggles with write-heavy workloads, multi-server setups, and datasets over 1 TB.

- Tools: Litestream for backups, Turso for multi-region replication, and PRAGMA settings for optimization.

SQLite excels in simplicity and speed for many scenarios but isn’t suitable for large-scale, write-intensive, or distributed systems. PostgreSQL remains a better choice for those cases. Use SQLite for its strengths and transition to PostgreSQL only when necessary.

Distributed SQLite with Litestream and LiteFS

sbb-itb-bfaad5b

What Makes SQLite Different from Traditional Databases

SQLite vs PostgreSQL: Performance, Architecture, and Cost Comparison

The big difference between SQLite and traditional databases like PostgreSQL lies in how they’re built. SQLite works as an embedded library that runs directly within your application, unlike PostgreSQL, which operates as a separate server process. When you query SQLite, it directly interacts with a local file, skipping the need for network communication or connection pooling. This streamlined setup is a major reason why SQLite has become so popular for production environments.

This architecture has a big impact on performance and operational simplicity. For example, local SQLite reads typically finish in about 0.01 ms. Compare that to PostgreSQL, where reads take 1–3 ms in the same region, or 3–10 ms in managed environments. That’s a performance boost of 100 to 1,000 times for reads, simply because the data stays on the same machine.

"SQLite eliminates an entire category of infrastructure complexity. There is no database server to manage, no connection pooling to configure, and no network latency." – Pavan Rangani

This embedded design is what makes SQLite’s features and capabilities stand out.

Core Features of SQLite

One of SQLite’s standout traits is that it’s zero-configuration. There’s no server to install, no user credentials to set up, and no connection strings to manage. The entire database sits in a single file, making deployment as simple as copying that file to your server (using something like scp). This portability is a huge advantage - you can share a database via email, commit it to version control for smaller datasets, or even bundle it with your application.

SQLite is also incredibly lightweight. On a server with NVMe storage and Write-Ahead Logging (WAL) enabled, it can handle over 100,000 reads and more than 10,000 writes per second. This level of performance is more than enough for most web applications, and it comes with zero additional infrastructure costs.

However, there’s a trade-off: concurrency. SQLite uses file-level locking, which means only one writer can update the database at a time, even in WAL mode. PostgreSQL, on the other hand, uses Multi-Version Concurrency Control (MVCC), allowing many writers to work simultaneously. This makes SQLite a great choice for applications with heavy read operations but less ideal for write-intensive workloads across multiple processes.

SQLite vs PostgreSQL: Side-by-Side Comparison

Here’s a quick look at how SQLite and PostgreSQL stack up against each other:

| Feature | SQLite (Embedded) | PostgreSQL (Client‑Server) |

|---|---|---|

| Architecture | Embedded (single file) | Separate server process |

| Ops Complexity | None (no server to manage) | Moderate (server management required) |

| Read Latency | ~0.01 ms (local) | ~1–3 ms (same region) |

| Write Concurrency | Single writer (WAL improves) | Full MVCC (many concurrent writers) |

| Scaling | Vertical (single node) | Horizontal (replication/clustering) |

| Practical Limit | ~1 TB in production | Multi‑TB (petabyte‑scale possible) |

| Cost | $0 (included with server) | $50+ monthly for managed instances |

While SQLite can technically handle databases up to 281 TB, its practical production limit is closer to 1 TB. PostgreSQL, by contrast, is much better suited for multi-terabyte workloads. It offers built-in replication, horizontal scaling, and the ability to handle high write concurrency - features that SQLite has only recently begun to address with newer tools for replication and multi-region access.

Production Success Stories with SQLite

SQLite is proving its strength by powering high-traffic production applications, challenging the idea that only enterprise databases can handle heavy workloads.

Pieter Levels' Apps and Digital Nomad Tools

Independent developers have shown that SQLite can manage substantial traffic with minimal setup. For example, the trading comparison site PropFirm achieved 50,000+ daily visitors within three months using just a single SQLite .db file stored on disk. This setup required no external database processes, highlighting SQLite's zero-configuration design. It’s a perfect example of how solo developers can operate high-traffic apps without the complexity of traditional infrastructure.

Rails 8 Adopts SQLite as the Default Database

In 2024, Rails 8 made SQLite its default database, marking a significant shift in web development. The framework now integrates the Litestack gem to manage ActiveJob, ActionCable, and ActionCaching. This allows a full Rails application - complete with database, caching, and background jobs - to run on a single server without juggling multiple processes.

"No part is the best part, right? ... Redis and Postgres run as separate processes that need to be monitored." - Brad Gessler, Author, Fly.io

This change dramatically improves performance. Embedding SQLite reduces per-query latency to 10–20 microseconds, which is 50–100 times faster than intra-region PostgreSQL queries. With NVMe SSDs providing high write speeds, this setup meets the needs of over 90% of web applications, especially those with read-heavy workloads.

How Expensify and Fly.io Leverage SQLite

Companies like Expensify and Fly.io are pushing SQLite even further in high-transaction environments. Expensify processes billions of transactions using SQLite in production. Its embedded architecture eliminates the network latency typically found between application and database layers. Similarly, Fly.io promotes "single-process applications" that combine the server and database into one tightly integrated system.

"When you put your data right next to your application, you can see per-query latency drop to 10-20 microseconds. That's micro, with a μ. A 50-100x improvement over an intra-region Postgres query." - Ben Johnson, Engineer, Fly.io

To overcome SQLite's single-writer limitation, both companies use tools like Litestream and LiteFS. Litestream offers continuous replication to S3-compatible storage for disaster recovery. LiteFS, on the other hand, uses a FUSE-based filesystem to replicate Write-Ahead Log (WAL) segments from a primary write node to multiple read replicas across regions. This setup ensures low-latency reads globally. With NVMe storage, a single server running SQLite in WAL mode can handle over 100,000 read queries per second, and read replicas amplify this capacity even further.

When SQLite Works in Production (and When It Doesn't)

After hearing about its success stories, let’s get specific about where SQLite shines in production and where its limitations can cause problems. Matching your workload to SQLite’s design is crucial: take full advantage of its strengths while staying aware of its boundaries.

Where SQLite Excels

SQLite performs exceptionally well in read-heavy applications, especially when the majority of operations are SELECT queries. For instance, in March 2026, PropFirm Key reported handling over 50,000 daily visitors with a single 47 MB SQLite database. With a 98/2 read-write ratio, the database delivered sub-millisecond query times using WAL mode and memory mapping.

It’s also perfect for single-server deployments. When both the application and database are on the same machine, network latency is eliminated, and queries are lightning fast.

"The fastest database query is one that doesn't cross a network boundary. Embedded replicas and edge databases embody this principle." – Pockit Blog

SQLite is a natural fit for edge computing and IoT applications due to its small size and zero-configuration setup. Similarly, it’s widely used for local-first and offline applications, where syncing happens once connectivity is restored.

Another use case is the database-per-tenant model. Instead of managing one large database, each customer has their own SQLite file, making it easier to isolate data and simplify GDPR compliance.

On modern NVMe SSDs, SQLite can handle between 10,000 and 50,000 writes per second. For reads, a single server equipped with NVMe storage can process over 100,000 queries per second when using WAL mode.

While these strengths make SQLite a great choice for many scenarios, it’s not without its limitations. Understanding these is key to avoiding potential pitfalls in more demanding environments.

When SQLite Falls Short

The single-writer constraint is SQLite’s biggest drawback. Even in WAL mode, only one write transaction can be committed at a time. This creates bottlenecks in write-heavy applications like real-time analytics or high-frequency trading, which require thousands of simultaneous writes per second.

Distributed setups also highlight SQLite's limits. In multi-server environments, SQLite struggles because it needs local filesystem access. It doesn’t work well with network filesystems like NFS or SMB due to locking issues. For example, in February 2026, ultrathink.art, an e-commerce site, suffered data loss during a period of frequent deployments. Overlapping containers writing to the same WAL file caused two orders to be lost, even though the Stripe payments succeeded.

SQLite also struggles with large datasets. While its theoretical maximum size is 281 TB, performance drops significantly once databases exceed 1 TB, especially for write-heavy operations. For workloads requiring horizontal scaling across servers, PostgreSQL is the better option.

| Feature | SQLite (WAL Mode) | PostgreSQL |

|---|---|---|

| Write Concurrency | 1 writer at a time | Unlimited (MVCC) |

| Read Performance | Excellent (in-process) | Very good (network overhead) |

| Scaling | Vertical only | Horizontal (read/write replicas) |

| Max Practical Size | ~1 TB | Unlimited |

| Operational Cost | $0 (embedded) | $50–$500+/mo (managed) |

"SQLite is an excellent choice when your application runs on a single server... your workload is read-heavy (90%+ reads)... [and] your data fits comfortably in 1 TB or less." – KX

For large-scale SaaS applications with distributed architectures, heavy write demands, or multi-region setups, PostgreSQL is the better choice. The challenge is knowing when SQLite’s simplicity and speed outweigh the need for scalability - and when they don’t.

Tools and Techniques for Production SQLite

Once you've decided to use SQLite, it's crucial to prepare it for production by applying specific tools and configurations. These steps enhance SQLite's performance and reliability, making it a solid choice for scaling applications.

Litestream: Continuous Backup and Replication

Litestream is a tool designed to stream Write-Ahead Log (WAL) changes to S3-compatible storage. Instead of relying on periodic backups, it replicates each WAL frame as it's written, keeping replication lag under one second. If a crash occurs, the restore process involves downloading the latest snapshot and replaying WAL segments. Even for databases several gigabytes in size, this process typically takes less than 30 seconds. You can also restore to a specific point in time using a timestamp flag, which is particularly helpful for recovering from accidental data deletions.

"This restore-and-boot pattern means your entire disaster recovery plan fits in a Dockerfile." – Krystian Wiewiór, MVP Factory

The performance impact is minimal, adding less than 1 millisecond of latency per transaction. Storage costs are also low - around $0.50 per month for a 1GB database stored on S3. To maximize performance with Litestream, set PRAGMA synchronous = NORMAL instead of FULL. This adjustment improves speed while maintaining durability.

While Litestream handles backup and recovery, tools like Turso and libSQL take SQLite further by enabling multi-region deployments.

Turso and libSQL: Multi-Region SQLite

Turso is a managed platform built on libSQL, an open-source fork of SQLite. It introduces features like native replication, server mode, and better write throughput. Turso supports embedded replicas that automatically sync a local SQLite file with a remote primary across more than 30 regions. This setup allows for incredibly fast local reads - averaging around 625 microseconds - making it 100 to 1,000 times faster than traditional managed PostgreSQL instances, which typically range from 3ms to 10ms.

Although write latency is higher (15ms to 50ms) due to network round trips to the primary, this tradeoff is acceptable for applications that are read-heavy. Turso's database-per-tenant model is another advantage, simplifying compliance by isolating customer data without requiring tenant_id columns. The free tier offers up to 100 active databases per month and 5GB of total storage.

For demanding production environments, fine-tuning SQLite's settings is essential.

Performance Tuning: WAL Mode and PRAGMA Settings

Optimizing SQLite's configuration can significantly enhance its performance in production. Start by enabling Write-Ahead Logging with:

PRAGMA journal_mode = WAL;

Then, adjust the following settings:

PRAGMA synchronous = NORMAL;: This improves write performance by up to three times compared toFULL.PRAGMA busy_timeout = 5000;: Prevents "database is locked" errors by extending the timeout period (adjustable up to 60,000ms).PRAGMA cache_size = -64000;: Allocates approximately 64MB of cache, which is ideal for read-heavy operations.PRAGMA mmap_size = 268435456;: Enables memory-mapped I/O, allowing the operating system to handle disk I/O more efficiently (set to 256MB in this example).

In April 2026, Forward Email achieved 10,548 inserts per second and 17,494 selects per second on Node.js v20 by combining these settings with PRAGMA temp_store = 1 (disk), which helps prevent memory exhaustion during large operations.

Here's a quick summary of the recommended settings:

| PRAGMA | Recommended Value | Performance Impact |

|---|---|---|

journal_mode |

WAL | Boosts concurrent performance |

synchronous |

NORMAL | Up to 3× faster writes than FULL |

busy_timeout |

5000 to 30000 | Reduces connection failures |

cache_size |

-64000 (64MB) | Improves read-heavy workloads |

mmap_size |

268435456 (256MB) | Speeds up reads for large databases |

wal_autocheckpoint |

1000 | Enhances performance |

Since SQLite allows only one writer at a time, you should serialize writes using an application-level mutex or a dedicated write queue. Additionally, running PRAGMA optimize; daily ensures the query planner remains efficient, providing consistent performance improvements.

Moving from SQLite to PostgreSQL

SQLite can handle many production workloads effectively, but there comes a time when PostgreSQL becomes the better option. Recognizing when to switch - and planning the transition carefully - can help you avoid a rushed migration later.

Recognizing SQLite's Limits

One of the clearest signs it's time to move on is when SQLite's single-writer model starts to slow things down. While modern NVMe SSDs allow SQLite to handle between 10,000 and 50,000 writes per second, applications needing higher sustained write throughput will benefit from PostgreSQL, which supports unlimited concurrent writers.

Scaling horizontally is another factor. SQLite operates as an embedded, single-node database with direct file-system access. If your application requires scaling writes across multiple servers or needs network-based access for various services, PostgreSQL's client-server architecture becomes essential.

Dataset size is also a key consideration. When your data surpasses 1 TB or no longer fits comfortably in RAM, causing frequent disk access, it's a good time to consider PostgreSQL. Additionally, advanced features like Row-Level Security (RLS), built-in audit logging, PostGIS for geographic data, or pub/sub patterns using LISTEN/NOTIFY may necessitate the switch.

"If I ever need to migrate to Postgres, I'll count that as a success." - Shivek Khurana

When these limitations become apparent, following a structured migration plan can make the transition much smoother.

Migration Steps and Best Practices

A successful migration starts with a thorough review of your schema. For instance, SQLite's INTEGER PRIMARY KEY AUTOINCREMENT should be mapped to PostgreSQL's SERIAL or BIGSERIAL. Similarly, fields stored as TEXT in SQLite - such as UUIDs, JSON, or timestamps - can be converted to PostgreSQL's native types like UUID, JSONB, and TIMESTAMP WITH TIME ZONE.

If you're using an Object-Relational Mapper (ORM) like Prisma or Drizzle, the migration might only require updating your configuration to point to PostgreSQL instead of SQLite. For data migration, export your SQLite data in .inserts mode, convert SQLite's 1/0 booleans to PostgreSQL's TRUE/FALSE, and replace SQLite-specific features like FTS5 with PostgreSQL's native full-text search.

During the transition, run both databases in parallel to verify data integrity. Update your application to use a PostgreSQL-compatible database driver - switching from something like better-sqlite3 to node-postgres. To reduce network latency, ensure your PostgreSQL instance is in the same region as your application. Additionally, use connection pooling tools like PgBouncer to efficiently manage high connection counts.

Finally, perform rigorous integrity checks on both databases before making the final switch. This ensures a smooth cutover with minimal disruption.

Conclusion

SQLite stands out as a solid option for production workloads. Its embedded design is particularly well-suited for read-heavy applications, single-server setups, and edge computing environments, offering sub-millisecond read times while keeping infrastructure simple.

"SQLite was built in 2004 and is the most backward compatible software ever... Running SQLite in production only requires tuning to modern standards." - Stephen Margheim

The tools available today - like Litestream for continuous backups, Turso for multi-region distribution, and Rails 8's Solid Trifecta - have effectively addressed many of SQLite's earlier limitations. Coupled with modern NVMe hardware, SQLite can now handle between 10,000 and 50,000 writes per second, proving its capability for a variety of use cases.

While SQLite excels in simplicity and performance, PostgreSQL remains the better choice when horizontal scaling across multiple servers is necessary, when write throughput consistently exceeds 10,000 writes per second, or when advanced features such as Row-Level Security and PostGIS are required. The decision ultimately depends on the specific needs of your application - not on whether a database carries an "enterprise" reputation.

Start with SQLite if operational simplicity and high performance meet your needs. Transition to PostgreSQL only when you've outgrown SQLite's single-writer model or need the advanced features it lacks. For many applications, you may never need to make that switch.

FAQs

How do I know if SQLite will bottleneck my app’s writes?

SQLite’s design restricts write operations to one writer at a time, even when using WAL (Write-Ahead Logging) mode. While this setup can support thousands of writes per second in well-optimized environments, it may struggle under high write contention or when multiple processes attempt simultaneous writes. This can lead to delays or an uptick in SQLITE_BUSY errors and noticeable write latency. If your application demands frequent concurrent write operations, it might be worth exploring a more scalable database solution, such as PostgreSQL.

What’s the safest way to run SQLite in containers without corruption?

To ensure SQLite runs smoothly in containers and to prevent data corruption, consider these steps:

- Enable Write-Ahead Logging (WAL): Use

PRAGMA journal_mode = WALto improve durability and allow better concurrency for read and write operations. - Set a Busy Timeout: Configure a timeout, such as

PRAGMA busy_timeout = 5000, to manage write contention effectively. - Leverage Litestream: Use Litestream for continuous replication to cloud storage. This provides real-time backups and ensures recovery options are readily available.

- Manage Filesystem Permissions: Confirm that the container has appropriate filesystem permissions to avoid unexpected issues.

- Avoid Multiple Writers: SQLite performs best with a single writer. Ensure your setup avoids scenarios with multiple writers to the database.

By following these practices, you can maintain a more reliable and efficient SQLite setup in containerized environments.

How should I back up and restore SQLite for disaster recovery?

To ensure consistent backups and easy recovery for SQLite databases, Litestream is a great tool. It provides continuous replication to S3-compatible storage, keeping your data safe and accessible.

Here’s how it works: Litestream monitors your database and streams changes in real-time to your chosen storage. If you need to recover your database, simply download the latest backup from the storage and replace the existing database file. Additionally, Litestream allows replaying snapshots to reduce the risk of data loss.

This setup is particularly effective for maintaining reliable backups and enabling quick recovery in production environments.

.png)