Explains Zig 0.16's std.Io using io_uring and fibers to swap threaded or evented I/O without async/await.

Zig 0.16 introduces a new approach to asynchronous I/O built on std.Io, an interface that simplifies switching between threaded and event-driven backends like io_uring (Linux) or Grand Central Dispatch (macOS). Unlike languages built around async/await, such as Rust and JavaScript, Zig avoids async/await propagation by leveraging userspace stack switching (fibers), allowing developers to write reusable, synchronous-looking code that works in both environments.

Key Takeaways:

- Unified Interface: std.Io lets you write logic once and choose between threaded or event-driven I/O without changing the code.
- Evented Backends: Uses io_uring for Linux and Grand Central Dispatch for macOS, reducing syscall overhead and improving performance.
- No Function Coloring: Zig eliminates the need for marking functions as async, avoiding ripple effects through the codebase.
- Experimental Status: While promising, event-driven backends are still under development and face performance challenges.

This design offers flexibility for handling high-performance, asynchronous tasks while keeping the code clean and reusable.



How Zig's std.Io Abstraction Works

[Figure: Zig std.Io Backend Comparison: Threaded vs Evented I/O Performance and Architecture]

Zig 0.16 introduces std.Io, a flexible I/O abstraction that uses dependency injection, similar to the Allocator interface. This design allows you to write your core application logic once and easily switch between different I/O backends without touching the main code. It’s a practical way to experiment with various backends while keeping your application logic consistent.

Swapping Backends Without Changing Code

At the heart of this design is inversion of control, where a std.Io interface abstracts the actual backend implementation. Zig provides two primary backend options:

- std.Io.Threaded: A traditional thread pool model using blocking syscalls.
- std.Io.Evented: A modern approach using io_uring on Linux or Grand Central Dispatch on macOS.

Andrew Kelley, the Lead Developer at the Zig Software Foundation, describes the process clearly:

"Setting up a std.Io implementation is a lot like setting up an allocator. You typically do it once, in main(), and then pass the instance throughout the application."

This means you configure the backend in your main function, call its .io() method to get the interface, and pass it to your application code. Your business logic remains untouched, regardless of the backend. This separation of concerns simplifies concurrent programming by isolating I/O implementation details from the rest of the application.
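The injection pattern can be sketched without std.Io at all. The toy below pairs an opaque pointer with a function pointer, in the same spirit as the Allocator interface, and runs one app function against two interchangeable backends. The names Sink, ByteCounter, and CallCounter are invented for this illustration and are not part of the standard library; the real std.Io interface is far larger.

```zig
const std = @import("std");

// Hypothetical miniature interface: an opaque pointer plus a function
// pointer. Invented for illustration; not a std type.
const Sink = struct {
    ptr: *anyopaque,
    writeFn: *const fn (ptr: *anyopaque, bytes: []const u8) void,

    fn write(self: Sink, bytes: []const u8) void {
        self.writeFn(self.ptr, bytes);
    }
};

// Backend A: adds up the number of bytes written.
const ByteCounter = struct {
    total: usize = 0,

    fn sink(self: *ByteCounter) Sink {
        return .{ .ptr = self, .writeFn = write };
    }

    fn write(ptr: *anyopaque, bytes: []const u8) void {
        const self: *ByteCounter = @ptrCast(@alignCast(ptr));
        self.total += bytes.len;
    }
};

// Backend B: counts how many writes were issued.
const CallCounter = struct {
    calls: usize = 0,

    fn sink(self: *CallCounter) Sink {
        return .{ .ptr = self, .writeFn = write };
    }

    fn write(ptr: *anyopaque, bytes: []const u8) void {
        _ = bytes;
        const self: *CallCounter = @ptrCast(@alignCast(ptr));
        self.calls += 1;
    }
};

// Application logic: written once, unaware of which backend it gets.
fn app(sink: Sink) void {
    sink.write("Hello, ");
    sink.write("World!\n");
}

pub fn main() void {
    var byte_backend: ByteCounter = .{};
    var call_backend: CallCounter = .{};
    app(byte_backend.sink());
    app(call_backend.sink());
    // prints "bytes=14 calls=2"
    std.debug.print("bytes={d} calls={d}\n", .{ byte_backend.total, call_backend.calls });
}
```

Only main knows which backend exists; app sees the interface value alone, which is the property the std.Io design relies on.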

Code Example: Running on Threaded and Evented Backends

Here’s a practical example that showcases how the same application logic can run on different backends. In February 2026, Andrew Kelley demonstrated this with a simple "Hello, World!" program. The core logic in the app function remained exactly the same:

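```zig
fn app(io: std.Io) !void {
    // The trailing newline makes the payload 14 bytes, matching the strace output shown later.
    try std.Io.File.stdout().writeStreamingAll(io, "Hello, World!\n");
}
```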

For the threaded backend, the initialization process looks like this:

```zig
// Fragment from inside main(): `gpa` is an allocator and `init` is main's parameter.
var threaded: std.Io.Threaded = .init(gpa, .{
    .argv0 = .init(init.args),
    .environ = init.environ,
});
defer threaded.deinit();
const io = threaded.io();
return app(io);
```

Switching to the evented backend involves only minor adjustments:

```zig
// Fragment from inside main(): `gpa` is an allocator and `init` is main's parameter.
var evented: std.Io.Evented = undefined;
try evented.init(gpa, .{
    .argv0 = .init(init.args),
    .environ = init.environ,
    .backing_allocator_needs_mutex = false,
});
defer evented.deinit();
const io = evented.io();
return app(io);
```

When you analyze these implementations with strace, the threaded version relies on standard writev syscalls, while the evented version uses io_uring_setup and io_uring_enter on Linux. The beauty of this abstraction is that the application code doesn’t need to account for these differences - std.Io handles it all behind the scenes.

| Feature | std.Io.Threaded | std.Io.Evented |
|---|---|---|
| Mechanism | Thread Pool / Blocking Syscalls | io_uring (Linux) / GCD (macOS) |
| Concurrency Model | OS Threads | Userspace Stack Switching (Fibers) |
| Best Use Case | General purpose, CPU-bound | High-performance, I/O-bound |
| Status (0.16) | Stable | Experimental |

How io_uring Powers Async I/O on Linux

What is io_uring?

io_uring is a completion-based I/O interface introduced in Linux 5.1 that fundamentally changes how applications handle I/O operations. Unlike older readiness-based interfaces such as epoll or select, which only signal that a file descriptor is ready, io_uring performs the I/O operation itself and notifies the application when it is done.

The magic lies in its architecture: two ring buffers - the Submission Queue (SQ) for sending requests and the Completion Queue (CQ) for receiving results. These buffers are shared between your application and the kernel, cutting down on the usual back-and-forth data copying between userspace and kernel space.

One of its standout features is reducing the number of syscalls needed. Traditional I/O often requires multiple syscalls - one to check readiness and another to perform the operation. With io_uring, a single io_uring_enter call can handle both submitting new requests and retrieving completed ones in one go. For example, a Zig application using coroutines executed just 33 syscalls with io_uring, compared to 677 when falling back to a poll-based system. This efficiency aligns perfectly with Zig's forward-thinking async I/O model.
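The batching idea can be modeled in a few lines. The sketch below is a toy, in-process simulation and never talks to the kernel: Ring, Sqe, Cqe, push, and enter are hypothetical names chosen for this illustration, and the queue sizes are arbitrary. It only shows why queuing requests in shared memory and then making one "enter" call needs fewer calls than one call per operation.

```zig
const std = @import("std");

// Toy stand-ins for submission and completion entries (hypothetical).
const Sqe = struct { user_data: u64, len: u32 };
const Cqe = struct { user_data: u64, res: i32 };

const Ring = struct {
    sq: [8]Sqe = undefined,
    sq_len: usize = 0,
    cq: [16]Cqe = undefined,
    cq_len: usize = 0,
    enter_calls: usize = 0,

    // Queue a request. This is a plain memory write, not a syscall.
    fn push(self: *Ring, sqe: Sqe) error{SubmissionQueueFull}!void {
        if (self.sq_len == self.sq.len) return error.SubmissionQueueFull;
        self.sq[self.sq_len] = sqe;
        self.sq_len += 1;
    }

    // Stand-in for io_uring_enter: a single call drains every queued
    // request and posts one completion for each of them.
    fn enter(self: *Ring) usize {
        self.enter_calls += 1;
        const n = self.sq_len;
        std.debug.assert(self.cq_len + n <= self.cq.len);
        for (self.sq[0..n]) |sqe| {
            self.cq[self.cq_len] = .{
                .user_data = sqe.user_data,
                .res = @intCast(sqe.len), // pretend every byte was written
            };
            self.cq_len += 1;
        }
        self.sq_len = 0;
        return n;
    }
};

pub fn main() !void {
    var ring: Ring = .{};
    const sizes = [_]u32{ 14, 128, 4096, 512, 64 };
    for (sizes, 0..) |len, i| {
        try ring.push(.{ .user_data = @intCast(i), .len = len });
    }
    const submitted = ring.enter();
    // prints "requests=5 completions=5 enter_calls=1"
    std.debug.print("requests={d} completions={d} enter_calls={d}\n", .{
        submitted, ring.cq_len, ring.enter_calls,
    });
}
```

A blocking design would have issued five separate write calls for the same work; here the five requests share one enter call.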

How Zig Uses io_uring

In February 2026, Andrew Kelley gave a live demonstration showcasing how Zig integrates io_uring. The process began with io_uring_setup, followed by an asynchronous write operation executed via io_uring_enter.

By default, Zig configures 64 submission queue entries and 128 completion queue entries. The implementation takes advantage of newer kernel capabilities: the setup flags IORING_SETUP_COOP_TASKRUN and IORING_SETUP_SINGLE_ISSUER, plus IORING_FEAT_NODROP, a feature bit that the kernel reports back at setup. Combined with userspace stack switching (fibers), this setup allows Zig to support asynchronous operations while maintaining code that looks and feels synchronous, avoiding the "function coloring" limitations seen in other languages.

Syscall Comparison: Threaded vs Evented I/O

Here's a side-by-side look at how syscalls differ between traditional threaded I/O and io_uring-powered evented I/O:

| Feature | Threaded I/O | Evented I/O (io_uring) |
|---|---|---|
| Primary I/O Call | writev(1, [{iov_base="...", iov_len=14}], 1) | io_uring_enter(3, 1, 1, IORING_ENTER_GETEVENTS, ...) |
| Setup Calls | rt_sigaction, prlimit64 | io_uring_setup, mmap (for ring buffers) |
| Blocking Behavior | Blocks the thread until I/O completes | Asynchronous; thread can handle other tasks |
| Overhead | One syscall per I/O operation | Multiple operations batched into one call |

In benchmarks, Zig's use of io_uring shows impressive results. For instance, a TCP echo server using io_uring handled 279,114 requests per second, compared to 221,851 requests per second with epoll - a 26% improvement. Additionally, p99 latency dropped from 3.13ms to 2.17ms. For file I/O, Zig with io_uring and 128 entries achieved 1.7 GB/s throughput using a 4KiB buffer, outperforming the 1.5 GB/s seen with standard blocking writes.

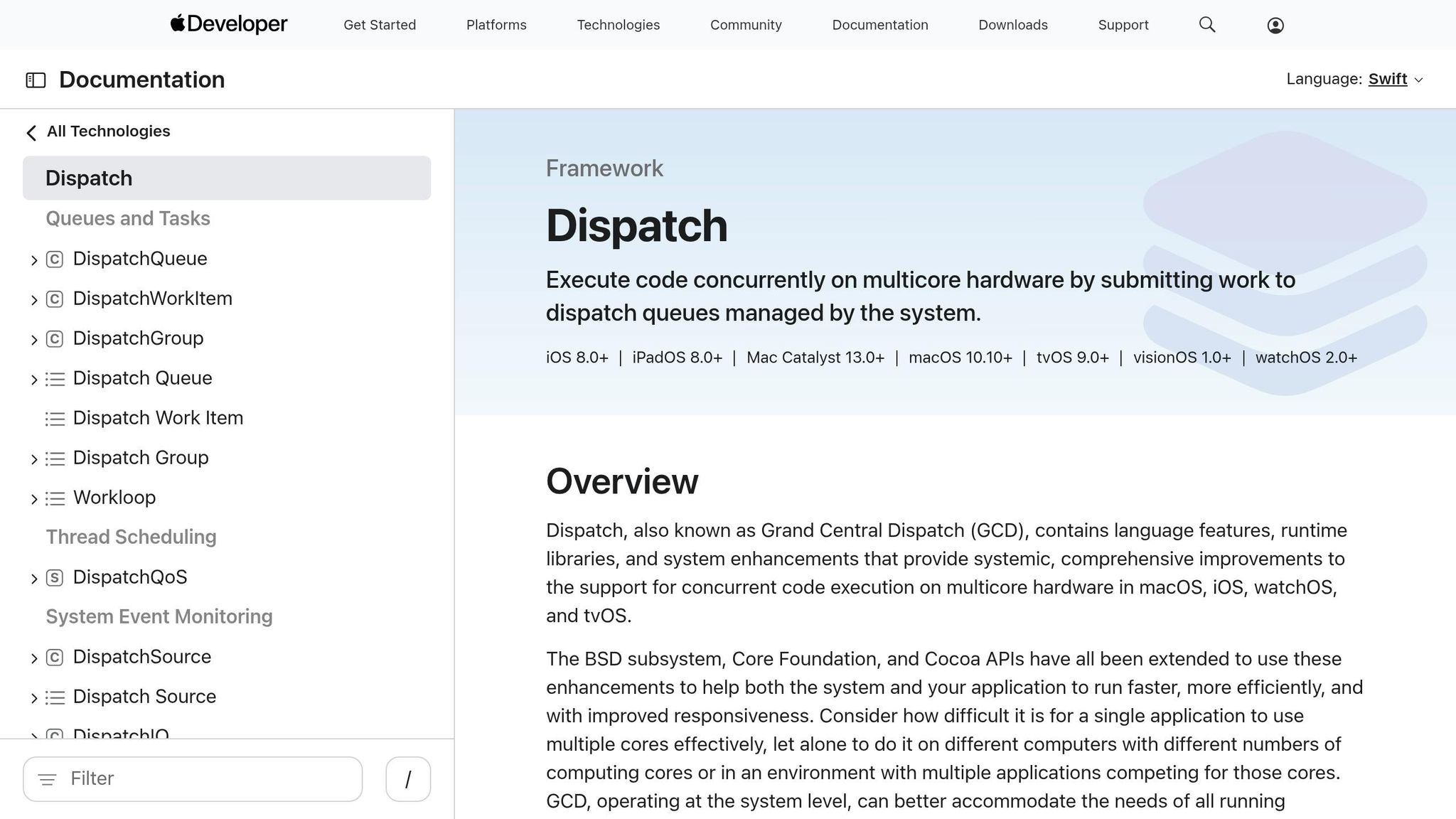

Grand Central Dispatch for Async I/O on macOS

How Grand Central Dispatch Works

Grand Central Dispatch (GCD), also called libdispatch, is Apple's library for handling concurrent tasks on macOS, iOS, and other Apple platforms. Unlike io_uring, which relies on completion-based ring buffers, GCD operates using a queue-based model. This model automatically handles the creation and termination of threads, streamlining task management.

One of GCD's standout features is its seamless integration with Darwin system APIs. It leverages the pthread_workqueue API to dynamically manage threads based on the workload, making it more efficient than traditional userspace thread pools. This tight integration is crucial for macOS-specific functionalities like recursive directory monitoring and Network.framework, both of which depend heavily on libdispatch.

"The most important feature of libdispatch... is its ability to dynamically spawn and retire pthreads through the lower-level pthread_workqueue API, which is integrated throughout Darwin system APIs." - lin72h, Zig Contributor

How Zig Integrates Grand Central Dispatch

On February 13, 2026, Zig incorporated GCD into its implementation for std.Io.Evented. This update involved adding 6,188 lines of code. Zig uses GCD for event notifications but handles its own fibers (userspace stack switching). This design choice addresses a critical issue: without fiber management, libdispatch would create a new thread for each asynchronous task, undermining the performance benefits of the evented model.

"Otherwise libdispatch would create one thread per async, and you would just end up with a threaded impl with no thread limit and no interesting evented properties." - jacobly, Zig Contributor

Zig's implementation allows the same app function to run on either std.Io.Threaded or std.Io.Evented (GCD) by simply changing the initialization settings - no changes to the code itself are needed. While this feature is still experimental, it is already capable of running the Zig compiler. However, some performance issues remain under investigation.

Next, we’ll look at how userspace stack switching enhances these approaches.

Userspace Stack Switching Explained

What is Userspace Stack Switching?

Userspace stack switching plays a key role in powering Zig 0.16's std.Io.Evented backend. You might hear it called fibers, stackful coroutines, or green threads - different terms for the same concept. Unlike OS threads managed by the kernel, this method lets applications directly manage execution contexts within their own process.

"Both of these [io_uring and GCD] are based on userspace stack switching, sometimes called 'fibers', 'stackful coroutines', or 'green threads'."

– Andrew Kelley, Lead Developer, Zig Software Foundation

One of the standout benefits of this approach is how it handles the issue of function coloring. In languages like Rust or JavaScript, marking a function as async forces every function that calls it to also be marked async, creating a ripple effect through the codebase. Zig's userspace stack switching avoids this entirely. The same code can seamlessly run on both std.Io.Threaded and std.Io.Evented backends without any changes. This not only simplifies the code but also opens up opportunities for improved performance.

Performance and Memory Trade-offs

Userspace stack switching shines when it comes to handling a large number of concurrent tasks. Instead of tying each task to its own OS thread - which would quickly hit kernel limits and lead to hefty scheduling overhead - Zig uses lightweight fibers managed in userspace. Pairing this with tools like io_uring on Linux or Grand Central Dispatch on macOS enables the efficient management of millions of connections.

But there's a catch: memory usage. Each fiber reserves up to 60 MiB of virtual stack space. Thanks to the OS’s overcommit mechanism, though, only a few pages of physical memory are allocated as needed. Using mmap with MAP_PRIVATE, the kernel only commits physical RAM (usually in 4 KB chunks) when a page is actually written to. So, while a fiber might technically reserve 60 MiB, it often uses just a few kilobytes of real memory.
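The gap between reserved and committed memory is easy to put numbers on. The sketch below uses the 60 MiB reservation and 4 KiB page size from the text; the fiber count and the number of pages each fiber touches are made-up workload parameters chosen only for illustration.

```zig
const std = @import("std");

const KiB: u64 = 1024;
const MiB: u64 = 1024 * KiB;

// Figures taken from the text: 60 MiB reserved per fiber, 4 KiB pages.
const stack_reservation: u64 = 60 * MiB;
const page_size: u64 = 4 * KiB;

// Virtual address space reserved across all fibers.
fn reservedBytes(fibers: u64) u64 {
    return fibers * stack_reservation;
}

// Physical memory committed if each fiber has written to `touched_pages`
// pages of its stack (an assumed workload parameter).
fn residentBytes(fibers: u64, touched_pages: u64) u64 {
    return fibers * touched_pages * page_size;
}

pub fn main() void {
    const fibers: u64 = 10_000; // assumption for illustration
    const touched_pages: u64 = 2; // assumption for illustration
    // prints "reserved: 600000 MiB" then "resident: 78 MiB"
    std.debug.print("reserved: {d} MiB\nresident: {d} MiB\n", .{
        reservedBytes(fibers) / MiB,
        residentBytes(fibers, touched_pages) / MiB,
    });
}
```

Under these assumptions the process reserves roughly 586 GiB of address space while committing under 80 MiB of RAM, which is why the scheme depends on overcommit being enabled.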

This approach does have its limits, particularly on systems where memory overcommit is disabled. To address this, the Zig team is working on a builtin function to calculate the maximum required stack size during compile time, enabling more accurate allocation. As of February 2026, they’re also investigating a performance issue where the Zig compiler runs slower in evented mode compared to threaded mode. Up next, we’ll explore these limitations and the ongoing efforts to improve performance.

Current Limitations and Experimental Status

Known Issues and Missing Features

Zig's std.Io.Evented backends for io_uring and Grand Central Dispatch are still experimental, and making them behave consistently with the threaded backend remains unfinished work. Andrew Kelley, Lead Developer at the Zig Software Foundation, clarified this in a devlog:

"They should be considered experimental because there is important followup work to be done before they can be used reliably and robustly: better error handling, remove the logging, diagnose the unexpected performance degradation... a couple functions still unimplemented."

Several gaps still need to be addressed. Error handling remains incomplete, some functions in the std.Io interface are yet to be implemented, and internal logging used during development must be removed before these backends can be deemed production-ready. Additionally, current test coverage is insufficient.

Resource leaks may occur when chaining try with await. To prevent this, you should immediately defer task cancellation using defer task.cancel(io) catch {};. As Kelley explained:

"The problem is that when the first try activates, it skips the second await which is then caught by the leak checker... cancel is your best friend, because it's going to prevent you from leaking the resource."

Another issue arises with producer–consumer patterns. Using io.async does not ensure concurrent execution, and on single-threaded systems, this can result in deadlocks. For true simultaneous execution, you must explicitly use io.concurrent. However, be prepared to handle error.ConcurrencyUnavailable if the system doesn't support concurrency.
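Both pitfalls above can be sketched in one small program. It uses only calls named in this article (io.async, io.concurrent, await, cancel, writeStreamingAll, and the threaded initialization), but everything else is an assumption: greet is a hypothetical task, std.process.Init.Minimal as the type of main's parameter is a guess based on the init.args and init.environ fields shown earlier, and the exact signatures may differ on current master builds.

```zig
const std = @import("std");

// Hypothetical task: writes one line to stdout through the injected Io.
fn greet(io: std.Io, line: []const u8) !void {
    try std.Io.File.stdout().writeStreamingAll(io, line);
}

fn app(io: std.Io) !void {
    // Asynchrony only: the backend is allowed to run `greet` inline.
    var a = io.async(greet, .{ io, "task a\n" });
    // Deferred immediately, so any early return below still releases `a`.
    defer a.cancel(io) catch {};

    // Concurrency required: this fails instead of silently running inline.
    var b = io.concurrent(greet, .{ io, "task b\n" }) catch |err| {
        // The article names error.ConcurrencyUnavailable for this case.
        std.debug.print("concurrency unavailable: {s}\n", .{@errorName(err)});
        return err;
    };
    defer b.cancel(io) catch {};

    // If this `try` returns early, `b` is never awaited here; its deferred
    // cancel is what keeps it from leaking.
    try a.await(io);
    try b.await(io);
}

// Assumption: main receives std.process.Init.Minimal on master builds.
pub fn main(init: std.process.Init.Minimal) !void {
    var debug_allocator: std.heap.DebugAllocator(.{}) = .init;
    defer _ = debug_allocator.deinit();
    const gpa = debug_allocator.allocator();

    var threaded: std.Io.Threaded = .init(gpa, .{
        .argv0 = .init(init.args),
        .environ = init.environ,
    });
    defer threaded.deinit();

    return app(threaded.io());
}
```

Registering each cancel right after the task is created keeps cleanup correct no matter which await fails first.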

Performance Issues Under Investigation

The Zig team is currently investigating an unexplained performance drop when running the Zig compiler with IoMode.evented. This issue, which specifically affects the io_uring path, remains unresolved as of early 2026. Until the root cause is identified, these evented backends are more suitable for testing purposes than for production use.

Another concern is fiber stack allocation. Each fiber reserves up to 60 MiB via overcommit, which can cause problems on systems where overcommit is disabled.

Resolving these issues is key to improving the platform's reliability and performance.

What's Coming Next

One planned enhancement is a new builtin function to determine the maximum stack size at compile time, allowing for more accurate and efficient fiber memory allocation. Kelley emphasized the importance of this improvement:

"It's important to solve the problem of stack upper bound. The stackful coroutines need to be allocated with exactly the right amount of memory - not too much, not too little."

As of early March 2026, the Zig 0.16.0 milestone was 92% complete. Once the performance issues are resolved and error handling is improved, these backends will be closer to production readiness. For now, they remain experimental and are available on master builds. For critical workloads, it’s recommended to stick with std.Io.Threaded.

How Zig's Async I/O Compares to Other Languages

Zig vs Rust: Swappable Backends vs Async/Await

Zig 0.16 takes a different approach to asynchronous programming by avoiding the widespread use of async/await syntax. Instead, it allows the Io implementation to be passed as a parameter, much like its Allocator interface. This means you can write a function once, and the caller decides whether it operates on a threaded, blocking, or event-driven backend - all without altering the function's signature.

Rust, on the other hand, uses an async/await model where functions must be explicitly marked as async. This requirement leads to async propagation throughout the codebase. Zig's approach is more flexible - its functions can work with different backends, letting developers switch between std.Io.Threaded and std.Io.Evented at the application level without needing to tweak library code.

The underlying technical difference is also noteworthy. Rust relies on compiler-generated state machines for its async operations, while Zig uses stackful coroutines (fibers) that perform userspace stack switching. Rust's model is efficient but mandates the use of async/await, while Zig's stackful coroutines allow functions to suspend and resume naturally, without special syntax.

Of course, this flexibility comes with trade-offs. Zig's experimental evented mode currently uses memory overcommit, allocating up to 60 MiB for stacks. In contrast, Rust's state machines have fixed, compiler-determined memory footprints. Zig is working on a builtin function to calculate maximum stack size at compile time, which would make fiber allocation more precise.

When compared to Go, Zig's approach highlights another layer of control over concurrency.

Zig vs Go: Userspace Stack Switching vs Goroutines

Zig's concurrency model is often compared to Go's, as both use stackful models. However, they differ significantly in how they manage control and scheduling. Go abstracts away the scheduler entirely - when you use the go keyword, the runtime handles everything behind the scenes. Zig, on the other hand, provides explicit control, letting you choose whether your code uses blocking I/O, thread pools, or event-driven backends like io_uring.

Zig also draws a clear line between asynchrony and concurrency. Using io.async() allows work to proceed out of order but may still run on the current thread. When a task must actually run concurrently, you use io.concurrent(), which can fail with error.ConcurrencyUnavailable if the backend cannot provide concurrency. In contrast, Go's go keyword always assumes concurrent execution.

"The problem is that we needed concurrency, but we asked for asynchrony."

– Andrew Kelley, Lead Developer, Zig Software Foundation

This explicit control helps avoid issues like deadlocks in single-threaded environments, where code might incorrectly assume parallel execution. While Go's runtime abstraction is well-suited for general-purpose applications, Zig's model excels in environments where runtime overhead must be minimized - such as embedded systems or performance-critical scenarios. For example, Zig allows you to generate machine code comparable to C when using std.Io.Threaded, while enabling evented I/O with fibers only when necessary.

Getting Started with std.Io.Evented

Installing Zig Master Builds

To work with std.Io.Evented, you'll need a master branch build of Zig, as the experimental features aren't included in the stable 0.15.x releases. Visit the official Zig Programming Language download page and locate the Master section. From there, download the binary that matches your operating system - either Linux or macOS.

After downloading, extract the file and add the zig binary to your system's PATH. Once that's set up, you're ready to dive into creating your first evented I/O program.

Running Your First Evented I/O Program

A first program follows the same backend-abstraction pattern shown earlier. Start by initializing std.Io.Evented with an allocator and configuration options, such as argv0 and environ. From there, obtain a generic std.Io interface by calling evented.io(). To execute a task, call io.async(function, .{args}), then wait for its completion with future.await(io). Always clean up by deferring future.cancel(io) right after the task is created.
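Put together, those steps might look like the sketch below. The evented initialization is copied from the snippet earlier in the article; the hello task is hypothetical, and std.process.Init.Minimal as the type of main's parameter is an assumption inferred from the init.args and init.environ fields. Because the backend is experimental, treat this as a starting point that may need adjusting against the master build you install.

```zig
const std = @import("std");

// Hypothetical task used only for this sketch.
fn hello(io: std.Io) !void {
    try std.Io.File.stdout().writeStreamingAll(io, "Hello from a task!\n");
}

// Assumption: main receives std.process.Init.Minimal on master builds.
pub fn main(init: std.process.Init.Minimal) !void {
    var debug_allocator: std.heap.DebugAllocator(.{}) = .init;
    defer _ = debug_allocator.deinit();
    const gpa = debug_allocator.allocator();

    // Same initialization as the evented snippet earlier in the article.
    var evented: std.Io.Evented = undefined;
    try evented.init(gpa, .{
        .argv0 = .init(init.args),
        .environ = init.environ,
        .backing_allocator_needs_mutex = false,
    });
    defer evented.deinit();
    const io = evented.io();

    // Start the task, register cleanup right away, then wait for it.
    var future = io.async(hello, .{io});
    defer future.cancel(io) catch {};
    try future.await(io);
}
```

Swapping the evented block for the threaded initialization leaves hello and the async, cancel, and await lines unchanged.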

When you run the program using zig run, Zig automatically selects the appropriate backend for your system: io_uring for Linux or Grand Central Dispatch for macOS.

"With those caveats in mind, it seems we are indeed reaching the Promised Land, where Zig code can have Io implementations effortlessly swapped out."

– Andrew Kelley, Lead Developer, Zig Software Foundation

For quick local testing of standard library features, you can use the following command. It provides near-instant feedback:

```sh
zig build test-std -Dno-matrix --watch -fincremental
```

Conclusion

Zig 0.16 takes a bold step forward in concurrent programming with its userspace stack switching and swappable I/O backends. Unlike other languages that rely on async/await keywords or opaque schedulers, Zig introduces a fresh approach by treating I/O as an injectable dependency - similar to how it handles memory allocation with allocators. This design allows developers to use the std.Io interface to switch seamlessly between different execution models, such as threaded execution, evented backends (like io_uring on Linux or Grand Central Dispatch on macOS), or even single-threaded blocking, all without needing to rewrite application logic.

"With this last improvement Zig has completely defeated function coloring." - Loris Cro, Community Architect

One of Zig's standout features is its explicit distinction between io.async() and io.concurrent(). This clarity gives developers precise control over whether a task may simply run out of order, possibly on the same thread, or must run concurrently, reducing the risk of tricky deadlocks caused by hidden scheduler behavior.

As of early 2026, the Zig 0.16.0 release milestone is 92% complete. While still experimental and facing challenges like performance regressions and incomplete error handling, Zig 0.16 lays a strong foundation. Its completion-based I/O models (e.g., io_uring), built-in cancellation semantics, and ability to adapt code to different platforms make it an attractive choice for systems programmers. The vision is simple yet powerful: write your I/O logic once, and let the caller decide how it runs - no fragmentation, just flexible backends tailored to your system's needs.

FAQs

When should I use std.Io.Evented vs std.Io.Threaded?

When building high-performance, event-driven applications that need scalable, non-blocking input/output operations, std.Io.Evented stands out as a solid choice. It leverages advanced mechanisms like io_uring on Linux and Grand Central Dispatch on macOS to efficiently handle a large number of concurrent operations. While it's still experimental, it’s particularly well-suited for scenarios where managing numerous simultaneous events is critical.

On the other hand, std.Io.Threaded is better suited for straightforward, thread-based I/O tasks. This approach works well for blocking operations or when dealing with legacy code. Since it’s more mature and stable, it’s a reliable option for simpler implementations or when compatibility is a priority.

How do fibers avoid async/await “function coloring” in Zig 0.16?

In Zig 0.16, fibers - also known as stackful coroutines or green threads - address the "function coloring" problem often encountered in async/await programming models. Function coloring forces developers to explicitly mark functions as asynchronous, which can make code more complex and harder to manage. Zig takes a different approach by using userspace stack switching with fibers. This allows the same code to seamlessly operate in either synchronous or asynchronous modes without requiring special syntax or annotations. The result? Async programming becomes much simpler, letting you write code that looks synchronous while still running asynchronously - no need to modify function signatures or add extra boilerplate.

What kernel or OS requirements are needed for io_uring or GCD backends?

For the io_uring backend on Linux, you'll need kernel version 6.11 or newer to take advantage of features like bind/listen and buffer management.

On macOS, the Grand Central Dispatch (GCD) backend works on most modern versions, typically macOS 10.15 (Catalina) or later.

Both backends are still experimental, so double-check that your system meets these requirements and supports the needed APIs.
