Wasm brings near-native speed to web apps; use Rust or AssemblyScript to offload CPU-heavy tasks and run them in the browser, at the edge, or on serverless platforms.

WebAssembly (Wasm) has become an essential tool for web development in 2026, offering near-native performance for tasks that JavaScript struggles with - like image processing, machine learning, and 3D rendering. Here's what you need to know:

- What is Wasm? A binary format that runs code fast and securely in browsers. It complements JavaScript, excelling in CPU-heavy tasks.

- Why use it? Wasm is 1.5× to 20× faster than JavaScript for compute-intensive tasks. It powers tools like Figma, Photoshop, and Google Earth, delivering desktop-level performance in the browser.

- Beyond the browser: Wasm is now widely used in serverless computing, edge platforms, and IoT due to its fast startup times (1–5 ms) and low resource usage.

- Ecosystem advancements: Features like WASI (system-level APIs), WasmGC (garbage collection), and SIMD (faster computations) make Wasm more powerful and accessible.

- Getting started: Use Rust or AssemblyScript to create Wasm modules. Tools like wasm-pack and wasm-bindgen simplify the process.

Wasm isn't here to replace JavaScript but to work alongside it, combining their strengths to build faster, more efficient web applications. Whether you're optimizing performance or exploring new deployment environments, Wasm is a game-changer for modern development.

WebAssembly Use Cases

Figma: High-Performance Design Tools

Figma transformed how design tools function in the browser by treating it more like a game engine than a static viewer. In June 2017, co-founder Evan Wallace spearheaded the migration of Figma's C++ rendering engine to WebAssembly (Wasm), which reduced the application's load time by 3×, regardless of document size.

The main hurdle was JavaScript's inherent limitations. Traditional frameworks rely on updating the state and re-rendering the entire DOM, which can be slow and inefficient. In contrast, Figma's Wasm-compiled C++ engine applies a transformation matrix update and instructs the GPU to shift the drawing - this process happens in microseconds. This allows Figma to manage millions of state changes while maintaining a steady 60 frames per second, even with complex, professional-grade design files.

"Because apps compiled to WebAssembly can run as fast as native apps, it has the potential to change the way software is written on the web." - Evan Wallace, Co-founder, Figma

By June 2025, Figma expanded its use of Wasm with "code layers" in Figma Sites. Using tools like esbuild and Tailwind v4 compiled to Wasm and executed inside Web Workers, Figma achieved a notable performance boost for real-time bundling and style compilation directly in the browser.

Other major applications, such as Google Earth and Photoshop, further highlight Wasm's ability to deliver native-level performance for demanding tasks.

Google Earth and Photoshop: Complex Applications in the Browser

Google Earth and Photoshop showcase WebAssembly's ability to bring extensive legacy C++ codebases to the web. Both applications rely on decades-old C++ foundations that would be impractical to rewrite entirely in JavaScript. With Wasm, these teams successfully brought desktop-level functionality to browsers without starting from scratch.

Photoshop's web version uses Wasm to handle 4K image processing and advanced filters. Tests reveal that processing a 4K image takes 180 ms in JavaScript, 22 ms with Rust-to-Wasm, and just 8 ms when leveraging SIMD instructions. That is roughly an 8× speedup for plain Wasm (180 / 22) and about 22× with SIMD (180 / 8). Processing locally also removes the need to upload and download large files for server-side processing.

Google Earth employs Wasm to render intricate 3D geospatial data directly in the browser, a task previously limited to standalone desktop software. By using Wasm's direct memory access, it efficiently handles massive datasets, and SIMD instructions accelerate 3D coordinate transformations. The result? A browser experience that operates at 85–95% of native desktop performance for compute-intensive tasks.

These examples highlight how WebAssembly bridges the gap between traditional desktop software and high-performance web applications, enabling powerful capabilities directly in the browser.



The WebAssembly Ecosystem

By 2026, WebAssembly (Wasm) has grown into a versatile and robust ecosystem, thanks to key advancements like the WebAssembly System Interface (WASI) and the Component Model. These innovations have expanded Wasm's capabilities far beyond its browser origins, transforming how developers build and deploy applications.

WASI: WebAssembly System Interface

WASI, often described as the POSIX for WebAssembly, provides a standardized API set that allows Wasm modules to securely interact with the host operating system. Before WASI, WebAssembly was limited to a browser sandbox. Now, it enables modules to handle tasks like file I/O, TCP/UDP networking, HTTP requests, and even access system clocks or random number generators.

What sets WASI apart is its capability-based security model. Unlike traditional applications that have full OS access, WASI modules operate on the principle of "least privilege." This means they can only access resources - like specific directories or network sockets - that the host runtime explicitly permits. Plus, the same binary can run on multiple architectures (e.g., x86, ARM) and operating systems (Linux, Windows, macOS) without needing recompilation.
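
WASI itself needs a runtime such as Wasmtime, but the same deny-by-default idea is visible in the plain JavaScript embedding: a module can only call host functions it is handed at instantiation. The sketch below is not WASI, just a minimal illustration of that principle. The module bytes are hand-assembled for this example (a hypothetical module that imports env.log and exports run), and the synchronous constructors are used only to keep it short.

```javascript
// Hand-assembled module (hypothetical, for illustration only):
//   (import "env" "log" (func (param i32)))
//   (func (export "run") (call 0 (i32.const 42)))
const bytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00,             // magic + version
  0x01, 0x08, 0x02, 0x60, 0x01, 0x7f, 0x00, 0x60, 0x00, 0x00, // types
  0x02, 0x0b, 0x01, 0x03, 0x65, 0x6e, 0x76,                   // import "env"
  0x03, 0x6c, 0x6f, 0x67, 0x00, 0x00,                         //   "log", func, type 0
  0x03, 0x02, 0x01, 0x01,                                     // one function of type 1
  0x07, 0x07, 0x01, 0x03, 0x72, 0x75, 0x6e, 0x00, 0x01,       // export "run" = func 1
  0x0a, 0x08, 0x01, 0x06, 0x00, 0x41, 0x2a, 0x10, 0x00, 0x0b, // body: log(42)
]);
const wasmModule = new WebAssembly.Module(bytes);

// Without the capability, instantiation is refused outright.
let refused = false;
try {
  new WebAssembly.Instance(wasmModule, { env: {} });
} catch (err) {
  refused = true;
}

// With the capability granted, the module can call it and nothing else.
const received = [];
const instance = new WebAssembly.Instance(wasmModule, {
  env: { log: (value) => received.push(value) },
});
instance.exports.run();
console.log(refused, received);
```

A WASI runtime applies the same pattern to directories and sockets: whatever the host does not pass in simply does not exist from the module's point of view.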

As of 2026, WASI Preview 2 is the stable version, offering standardized interfaces for essential operations like filesystem access, sockets, and HTTP. Production runtimes such as Wasmtime (general-purpose), Spin (microservices), and WasmEdge (edge computing and AI inference) are already leveraging WASI to power applications across servers, edge functions, and IoT devices.

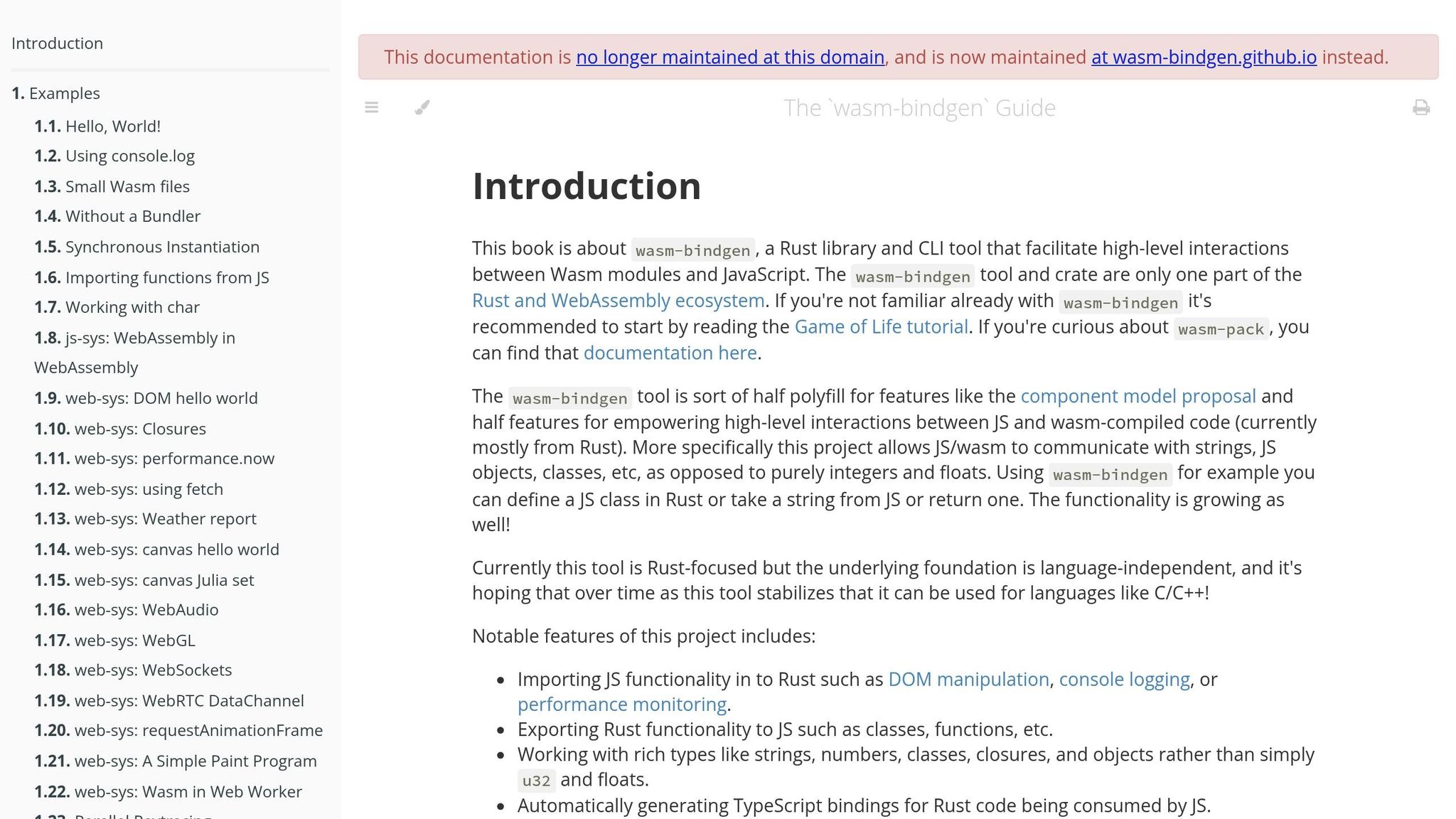

Component Model and wasm-bindgen

The Component Model is a game-changer for Wasm development, enabling developers to build applications from multiple Wasm modules written in different programming languages. It has been the foundation of WASI since Preview 2 (WASI 0.2); WASI 0.3.0 (February 2026) builds on it with native support for asynchronous I/O using a futures-and-streams model.

While core WebAssembly supports only four numeric types, the Component Model uses the Wasm Interface Type (WIT) language to describe richer types such as strings, records, and lists. This removes most of the manual pointer-and-length bookkeeping. The Canonical ABI specification automates the process of "lifting" and "lowering" data types between a module's internal memory and the host environment. This even allows developers to combine a Rust library with a Go application in a single process, with direct function calls and no network overhead. Components do not share linear memory; values are copied across the boundary.

For browser-based Wasm projects, Rust developers continue to rely on wasm-bindgen. This Rust library and CLI tool simplifies interoperability by generating JavaScript glue code, making Rust functions callable as native JavaScript functions. It handles type conversion (e.g., between UTF-8 and UTF-16), memory allocation across the Rust/JavaScript boundary, and provides access to browser Web APIs via the web-sys crate.

To streamline your workflow, use wasm-pack. This tool wraps wasm-bindgen and simplifies tasks like compiling, profiling, and generating npm-ready packages. For production builds, set opt-level = "z" and run wasm-opt to shrink binary sizes by 50% or more. For context, a basic Rust "Hello World" Wasm binary is about 15 KB, while a Go-based equivalent can be around 2.5 MB.

These advancements have made the WebAssembly ecosystem more accessible, efficient, and ready for real-world applications. Whether you're building for the web, servers, or edge devices, the tools and standards are here to support your next Wasm project.

Getting Started with WebAssembly

Now that you’ve got a handle on the WebAssembly (Wasm) ecosystem, it’s time to dive in and build your first module. This step-by-step guide will walk you through two popular approaches: Rust for those seeking maximum performance and control, and AssemblyScript, which offers a simpler path for developers familiar with TypeScript. Both paths will get you running Wasm in the browser, but the choice depends on your background and project needs.

Rust to Wasm: Step-by-Step Tutorial

If you’re ready to embrace Rust’s power, here’s how to get started:

- Set up your environment: Install Rust using rustup, then add the Wasm target with `rustup target add wasm32-unknown-unknown`. Next, install wasm-pack with `cargo install wasm-pack`.

- Create your project: Generate a new library project by running `cargo new --lib my-wasm-app`. In the Cargo.toml file, add `crate-type = ["cdylib"]` under `[lib]` to ensure the correct binary format. Also, include wasm-bindgen as a dependency. This library bridges Rust and JavaScript, making your functions callable from JS.

- Write your Rust code: In src/lib.rs, create a simple function and decorate it with `#[wasm_bindgen]` to expose it to JavaScript.

- Build and deploy: Run `wasm-pack build --target web` to compile your project. This generates a pkg directory containing your .wasm binary and the JavaScript glue code. In your HTML file, import the module as an ES module with `import init, { my_func } from './pkg/my_wasm_app.js';`, then use `await init();` to compile and initialize the module before calling your Rust functions.

- Optimize your binary: Add these lines to your Cargo.toml for a smaller binary size: `[profile.release]`, `opt-level = "z"`, and `lto = true`.

- Improve debugging: Install the console_error_panic_hook crate to get clearer error messages during development.

If Rust feels a bit overwhelming, don’t worry - there’s an alternative that may suit TypeScript developers better.

AssemblyScript for TypeScript Developers

For those familiar with TypeScript, AssemblyScript offers a more approachable way to create WebAssembly modules. It uses a TypeScript-like syntax but enforces stricter typing and operates within Wasm’s constraints. Here’s why it’s worth considering:

- Familiar syntax: If you already know TypeScript, AssemblyScript feels natural and is great for prototyping or building performance-critical modules.

- Efficient memory use: While some JavaScript/TypeScript features aren’t supported, AssemblyScript’s optional garbage collection gives you more predictable performance.

- Small binaries: Output sizes are generally compact, often in the KB to low MB range.

To make the most of AssemblyScript:

- Focus on CPU-heavy tasks like image processing or math-intensive operations.

- Pass large buffers to Wasm functions instead of making frequent calls in tight loops.

- Use typed arrays for efficient, zero-copy data transfers between JavaScript and Wasm memory.
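
The zero-copy tip depends on using views rather than copies, and the difference is easy to miss. A minimal sketch, using a standalone WebAssembly.Memory in place of a real module's exported memory (the offsets are arbitrary):

```javascript
// One 64 KiB page, standing in for a module's exported memory.
const memory = new WebAssembly.Memory({ initial: 1 });
const heap = new Uint8Array(memory.buffer);

// subarray() returns a view onto the same bytes: no copy is made.
const view = heap.subarray(16, 20);
// slice() allocates a new buffer and copies the bytes out.
const copy = heap.slice(16, 20);

heap[16] = 7; // a write into linear memory, as a Wasm function would do

console.log(view[0]); // 7, the view sees the write
console.log(copy[0]); // 0, the copy does not
```

Reach for subarray() when reading results in place, and for slice() only when the data has to outlive the next Wasm call.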

Comparing Rust, AssemblyScript, and Go

Here’s a quick comparison of Rust, AssemblyScript, and Go to help you decide which tool fits your needs:

| Feature | Rust | AssemblyScript | Go |
|---|---|---|---|
| Learning Curve | Steep | Low (for TS developers) | Moderate |
| Binary Size | Small (KB to MB) | Small (KB to MB) | Large (2.5 MB or more) |
| Garbage Collection | None | Optional | Yes |
| Best For | High performance, safety | TS developers, prototyping | Porting existing Go code |

With these tools in your arsenal, you’re ready to start integrating WebAssembly into your projects, tailoring your approach to match your expertise and goals.

WebAssembly Beyond the Browser

By 2026, WebAssembly (Wasm) has grown far beyond its browser-based origins, becoming a critical part of production systems in various environments. Today, Wasm powers edge computing, serverless platforms, and plugin systems, with thousands of deployments worldwide. Thanks to its fast startup times and efficient resource usage, Wasm is reshaping how edge computing and serverless architectures operate.

Wasm and Edge Computing

Edge computing thrives on speed, and Wasm delivers with cold start times as low as 1–5 milliseconds - about 100 times faster than traditional containers. A standout example comes from February 2026, when Cloudflare Workers used Wasm-based V8 isolates to deploy Llama-3-8b AI models across more than 330 global locations. By integrating speculative decoding into the Wasm runtime, they achieved 2–4x faster inference speeds while keeping cold starts under 5 milliseconds. Another example is Akamai EdgeWorkers, which adopted the Fermyon Spin framework across over 4,000 edge locations. This setup allowed developers to deploy Wasm-native microservices with built-in SQLite and key-value storage, all while maintaining near-instant function-call-level latency between components written in different languages.

In terms of AI performance, Wasm is a game-changer. It delivers AI inference speeds up to 8 times faster than TensorFlow.js, completing small model tasks in just 15 milliseconds. Meanwhile, runtimes like Wasmer 6.0 achieve 95% of native speed on Coremark benchmarks. The introduction of Memory64 in 2026 has further expanded Wasm's capabilities, raising the theoretical address space from 4 GB to 16 exabytes. This advancement allows large language models to run directly on edge nodes, eliminating the need to offload work to centralized servers.

Serverless Applications with Wasm

Wasm's lightweight design is also transforming serverless computing. Its near-instant startup and low resource consumption make it an ideal fit for serverless platforms. Unlike traditional containers, which often require at least 10MB of memory per instance, Wasm modules typically use just over 1MB. This efficiency allows for much higher deployment density on a single server. Platforms like Fastly Compute and Cloudflare Workers have embraced Wasm-first architectures, ensuring consistent performance across the globe.

In March 2026, Wasmer introduced Edge.js, a runtime enabling Node.js applications (compatible with v24) to run within a Wasm sandbox. This innovation allowed legacy Node.js workloads to achieve high-density deployment without Docker, operating at roughly 30% of native speed while maintaining full sandbox isolation using WASIX.

Security is another area where Wasm shines. Its capability-based security model uses a "deny-by-default" approach, meaning modules must explicitly request access to specific resources like files or network sockets. This makes Wasm one of the safest options for running untrusted third-party code in shared environments. IoT devices, for instance, are leveraging Wasm's security to push logic updates over-the-air without needing to replace entire firmware images.

JavaScript and WebAssembly Interoperability

JavaScript and WebAssembly work together by exchanging data and control between their distinct environments. While Wasm offers impressive speed, running up to 20× faster than JavaScript for some compute-intensive tasks, the challenge lies in bridging JavaScript's dynamic, garbage-collected nature with Wasm's static, linear memory structure.

Calling Wasm Functions from JavaScript

Calling WebAssembly functions from JavaScript becomes straightforward once you understand the process. The most efficient way to load and invoke Wasm modules is through streaming instantiation. This approach compiles the module during download, cutting initialization time by 30–50% compared to traditional methods. Here's how it works:

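```javascript
// Host functions the Wasm module is allowed to call back into.
const importObject = {
  env: {
    log: (value) => console.log(value)
  }
};

// Streaming instantiation compiles while the bytes are still downloading.
// The server must send Content-Type: application/wasm for this to work.
WebAssembly.instantiateStreaming(
  fetch('calculator.wasm'),
  importObject
).then(result => {
  const { add, multiply } = result.instance.exports;
  console.log(add(5, 3)); // 8
  console.log(multiply(4, 7)); // 28
});
```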

One important limitation: Wasm functions only accept four numeric types - i32, i64, f32, and f64. Strings, objects, and arrays can't be passed directly. Instead, you need to use Wasm's linear memory and rely on pointers.
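
Of the four types, i64 is the one that tends to surprise: in current engines it maps to BigInt rather than Number. A small sketch with a hand-assembled module (the add64 export is made up for this example, and the synchronous constructors are used only for brevity):

```javascript
// Hand-assembled module (hypothetical):
//   (func (export "add64") (param i64 i64) (result i64) ...)
const bytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00,                   // magic + version
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7e, 0x7e, 0x01, 0x7e,             // type: (i64, i64) -> i64
  0x03, 0x02, 0x01, 0x00,                                           // one function
  0x07, 0x09, 0x01, 0x05, 0x61, 0x64, 0x64, 0x36, 0x34, 0x00, 0x00, // export "add64"
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x7c, 0x0b, // body: i64.add
]);
const { add64 } = new WebAssembly.Instance(new WebAssembly.Module(bytes)).exports;

// i64 values cross the boundary as BigInt.
const big = add64(2n ** 40n, 1n);
console.log(typeof big, big);

// Plain Numbers are not converted implicitly.
let rejected = false;
try {
  add64(1, 2);
} catch (err) {
  rejected = err instanceof TypeError;
}
console.log(rejected);
```

The i32, f32, and f64 types all map to ordinary JavaScript Numbers, so only 64-bit integers need this extra care.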

For performance, avoid calling Wasm functions repeatedly in tight loops. Each call across the JavaScript-Wasm boundary adds about 100 nanoseconds of overhead. To optimize, batch data together, which can yield up to 10× better performance.
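
To make the batching advice concrete, here is a rough sketch comparing one boundary crossing per element with a single call over a buffer. The module is hand-assembled for this example and its add and sum exports are hypothetical; timings are left out because they vary by engine and device.

```javascript
// Hand-assembled module (hypothetical) exporting:
//   memory, add(a, b) -> i32, sum(ptr, len) -> i32 (sums len bytes at ptr)
const bytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00,       // magic + version
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type: (i32, i32) -> i32
  0x03, 0x03, 0x02, 0x00, 0x00,                         // two functions
  0x05, 0x03, 0x01, 0x00, 0x01,                         // one memory, 1 page
  0x07, 0x16, 0x03,                                     // three exports
  0x06, 0x6d, 0x65, 0x6d, 0x6f, 0x72, 0x79, 0x02, 0x00, //   "memory"
  0x03, 0x61, 0x64, 0x64, 0x00, 0x00,                   //   "add"
  0x03, 0x73, 0x75, 0x6d, 0x00, 0x01,                   //   "sum"
  0x0a, 0x35, 0x02,                                     // two function bodies
  0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b,       //   add: a + b
  0x2b, 0x01, 0x01, 0x7f, 0x02, 0x40, 0x03, 0x40,       //   sum: loop over bytes
  0x20, 0x01, 0x45, 0x0d, 0x01, 0x20, 0x02, 0x20, 0x00,
  0x2d, 0x00, 0x00, 0x6a, 0x21, 0x02, 0x20, 0x00, 0x41,
  0x01, 0x6a, 0x21, 0x00, 0x20, 0x01, 0x41, 0x01, 0x6b,
  0x21, 0x01, 0x0c, 0x00, 0x0b, 0x0b, 0x20, 0x02, 0x0b,
]);
const { memory, add, sum } =
  new WebAssembly.Instance(new WebAssembly.Module(bytes)).exports;

// Copy the input into linear memory once.
const data = Uint8Array.from({ length: 1024 }, (_, i) => i % 251);
new Uint8Array(memory.buffer).set(data, 0);

// Chatty: 1024 boundary crossings, one per element.
let chatty = 0;
for (const byte of data) chatty = add(chatty, byte);

// Batched: one crossing, and the loop runs inside Wasm.
const batched = sum(0, data.length);

console.log(chatty === batched);
```

Both paths compute the same total; the batched version simply pays the boundary cost once instead of once per element.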

Now, let's look at how to handle more complex data exchanges.

Sharing Data Between JS and Wasm

Data sharing between JavaScript and Wasm relies on the ArrayBuffer that backs Wasm's linear memory, which both sides can read and write. For strings and arrays, you write data to Wasm's memory using pointers and lengths, passing integers instead of the actual data to minimize overhead.

Handling strings requires extra care due to encoding differences: Wasm uses UTF-8 while JavaScript uses UTF-16. To bridge this gap, use TextEncoder and TextDecoder for encoding and decoding, which operate with linear O(n) complexity. Here's an example of a wrapper class for string processing:

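```javascript
class WasmStringProcessor {
  constructor(wasmInstance) {
    this.wasm = wasmInstance;
    this.encoder = new TextEncoder();
    this.decoder = new TextDecoder();
  }

  // Build the view on demand: a cached view goes stale when memory grows.
  get memory() {
    return new Uint8Array(this.wasm.exports.memory.buffer);
  }

  processText(text) {
    const encoded = this.encoder.encode(text); // UTF-16 string -> UTF-8 bytes
    const ptr = this.wasm.exports.allocate(encoded.length);
    this.memory.set(encoded, ptr);
    const resultPtr = this.wasm.exports.process(ptr, encoded.length);
    this.wasm.exports.free(ptr);

    // Assumption: process() returns a pointer to a NUL-terminated UTF-8 string.
    const bytes = this.memory;
    let end = resultPtr;
    while (end < bytes.length && bytes[end] !== 0) end++;
    return this.decoder.decode(bytes.subarray(resultPtr, end));
  }
}
```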
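
The encoding mismatch is easy to see in isolation. A minimal sketch, with a standalone WebAssembly.Memory standing in for a module's memory and an arbitrary offset of 32:

```javascript
const memory = new WebAssembly.Memory({ initial: 1 });
const encoder = new TextEncoder();
const decoder = new TextDecoder();

const text = 'h\u00e9llo'; // "héllo"
const utf8 = encoder.encode(text);

// JavaScript counts UTF-16 code units; Wasm-side code sees UTF-8 bytes.
console.log(text.length); // 5
console.log(utf8.length); // 6, because 'é' takes two bytes in UTF-8

// Write the bytes into linear memory, then read them back by pointer + length.
const ptr = 32;
new Uint8Array(memory.buffer).set(utf8, ptr);
const roundTrip = decoder.decode(new Uint8Array(memory.buffer, ptr, utf8.length));
console.log(roundTrip === text); // true
```

The practical rule is to pass the encoded byte length to Wasm, never the JavaScript string length.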

Memory management is crucial. When Wasm memory expands using memory.grow(), all JavaScript typed array views tied to that memory become invalid and need to be recreated. To prevent memory leaks, track your allocations and include a free function in your Wasm code.
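
The invalidation is easy to reproduce without any Wasm module at all. A short sketch using a standalone WebAssembly.Memory (the page counts are arbitrary):

```javascript
const memory = new WebAssembly.Memory({ initial: 1, maximum: 4 });
const staleView = new Uint8Array(memory.buffer);
console.log(staleView.length); // 65536 (one 64 KiB page)

memory.grow(1); // same effect as the module growing its own memory

// The old ArrayBuffer is detached, so the old view is now empty.
console.log(staleView.length); // 0

// Re-create the view from memory.buffer to see the grown memory.
const freshView = new Uint8Array(memory.buffer);
console.log(freshView.length); // 131072
```

Because any call into Wasm may grow memory, the safest habit is to create views right before use instead of caching them.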

Looking ahead, tools like wasm-bindgen (for Rust) and AssemblyScript (for TypeScript) simplify much of this boilerplate. Additionally, emerging standards like the Component Model and Wasm Interface Type (WIT) are making it easier to handle complex data types across module boundaries.

"Crossing this boundary is never free. Every interaction has a price, and depending on the strategy you choose to pay it, that cost can range from mathematically negligible to a painful 'why on earth did I bother compiling this to WASM?'" - Rafael Calderon

WebAssembly vs JavaScript Performance


When comparing WebAssembly (Wasm) and JavaScript, performance isn’t just about raw speed - it’s about understanding the task at hand and how the two interact. The real story unfolds when you consider the type of computation and the amount of data exchanged between JavaScript and Wasm.

Performance Metrics: Wasm vs JS

Wasm shines in compute-heavy tasks that handle large data sets without frequent back-and-forth communication with JavaScript. Performance can vary by browser. For instance, Chrome often sees 30–67% gains with Wasm, while Firefox can achieve up to 90% improvements in computationally intense scenarios. On lower-end Android devices, Wasm can run 60–160% faster than JavaScript.

Here’s a breakdown of how they compare across various tasks:

| Task Type | JavaScript | WebAssembly | Preferred |
|---|---|---|---|
| DOM Manipulation | Fast (direct access) | Slow (via JS glue) | JavaScript |
| JSON Parsing | Very fast (native) | Slower (copy overhead) | JavaScript |
| Image Resizing (4K) | ~250ms | ~45ms (5× faster) | WebAssembly |
| Physics Simulation | Frame drops at 1,000 entities | Smooth at 10,000 entities | WebAssembly |
| String Operations | Highly optimized | Slower (serialization needed) | JavaScript |

Startup time is another factor. JavaScript typically starts executing within 0–10ms, while Wasm modules need 15–80ms for compilation and instantiation. In one example, a 480KB Wasm binary added 60–80ms to the first meaningful paint, whereas the tree-shakeable JavaScript library it was compared against weighed just 15KB.

These metrics provide a clear picture of when to choose Wasm over JavaScript.

When to Use WebAssembly vs JavaScript

So, how do you decide between Wasm and JavaScript? Use Wasm for CPU-heavy tasks that take more than about 50ms, where the compute time dwarfs the cost of crossing the JS-Wasm boundary. For functions that finish in under 2ms, the boundary and data-copy overhead might outweigh the benefits.

JavaScript remains the go-to for tasks involving frequent DOM interactions, Web API calls, or string manipulation. Wasm relies on JavaScript "glue code" to access the DOM, which adds overhead. Studies suggest that eliminating this glue code could cut DOM operation time by 45%.

Wasm excels in scenarios requiring steady performance. Unlike JavaScript, which can experience garbage collection pauses or JIT warm-up delays, Wasm delivers consistent, near-native execution. This makes it ideal for applications like games, physics simulations, and real-time audio processing.

Batching data can also reduce overhead. For instance, decompressing large bundles with zstd dropped from 340ms in JavaScript to 80–90ms in Wasm.

"Rust plays a key role in modern JavaScript and TypeScript tooling and infrastructure. It addresses the most significant issue in the current tooling ecosystem: performance." - Stefan Baumgartner, Author of the TypeScript Cookbook

Before migrating to Wasm, profile your application. Transition only if your profiler identifies a computational bottleneck exceeding 5ms per call. For tasks like mathematical computations or image processing, enabling the simd128 target feature on wasm32 (in Rust, `-C target-feature=+simd128`) can provide an extra 2–4× speed boost over standard Wasm.
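
If you do not have a profiler trace handy, a crude timing harness can give a first answer. The sketch below is one possible approach, not a substitute for real profiling; medianMs, wasmVerdict, and blurRow are names invented for this example, and the thresholds are the rules of thumb quoted in this section.

```javascript
// Median wall-clock time of fn() over several runs, in milliseconds.
function medianMs(fn, runs = 15) {
  const samples = [];
  for (let i = 0; i < runs; i++) {
    const start = performance.now();
    fn();
    samples.push(performance.now() - start);
  }
  samples.sort((a, b) => a - b);
  return samples[Math.floor(samples.length / 2)];
}

// Thresholds taken from the text; treat them as rules of thumb.
function wasmVerdict(ms) {
  if (ms < 2) return 'keep in JavaScript';
  if (ms > 5) return 'candidate for Wasm';
  return 'measure further';
}

// Hypothetical hot function standing in for your real workload.
function blurRow(width = 200_000) {
  let acc = 0;
  for (let i = 0; i < width; i++) acc += Math.sqrt(i) * 0.5;
  return acc;
}

const ms = medianMs(blurRow);
console.log(ms.toFixed(3), wasmVerdict(ms));
```

The median is used rather than the mean so that a single garbage-collection pause or JIT warm-up run does not skew the result.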

WebAssembly Projects to Learn From

Digging into real-world WebAssembly (Wasm) projects can provide practical insights and techniques you can apply to your own work. These examples demonstrate efficient implementations and creative problem-solving with Wasm.

Vault-Tools, spearheaded by Antoine H. in February 2026, is a prime example of modular design. By dividing its Rust codebase into five separate crates (like pdf-tools and image-tools), it ensures that modules remain manageable in size - ranging from 200 KB to 2 MB - so users only download what they need. Impressively, the project compresses 10 MB PDF files in about 2 seconds. Antoine H. explained:

"The Wasm processing overhead is negligible compared to eliminated network latency."

To improve user experience, Vault-Tools employs a double requestAnimationFrame method to show loading spinners before Wasm calls block the main thread.
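
A double requestAnimationFrame helper might look roughly like this. It is a sketch of the general technique, not Vault-Tools' actual code; afterNextPaint is a made-up name, and the raf parameter exists only so the helper can be exercised outside a browser.

```javascript
// Run `work` only after the browser has had a chance to paint.
function afterNextPaint(work, raf = globalThis.requestAnimationFrame) {
  raf(() => {   // first callback: runs before the upcoming paint
    raf(() => { // second callback: runs once that paint has happened
      work();
    });
  });
}

// Simulated frames, so the ordering can be checked without a browser.
const queue = [];
const fakeRaf = (cb) => queue.push(cb);
const log = ['spinner shown'];

afterNextPaint(() => log.push('wasm call'), fakeRaf);
while (queue.length) {
  log.push('frame');
  queue.shift()();
}
console.log(log);
```

In a browser you would show the spinner, then call afterNextPaint with the blocking Wasm call and no second argument, so the spinner is on screen before the main thread is tied up.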

PicShift, an image converter built by indie developer BigByte in February 2026, showcases elegant format compatibility. This browser-based tool compiles encoders from C, C++, and Rust - such as MozJPEG and OxiPNG - into Wasm. For HEIC files, it uses a hybrid decoding strategy: tapping into native Safari 17.6+ APIs when available (yielding performance boosts of 17–39×) and falling back to Wasm decoders for other browsers. PicShift also produces JPEGs that are 10–15% smaller than standard browser encoders. Its dynamic Worker Pool scales based on the user's navigator.hardwareConcurrency, enabling efficient multi-core parallel processing.
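
Sizing a pool from navigator.hardwareConcurrency could be sketched as follows. This is an illustration under stated assumptions, not PicShift's implementation: poolSize and assignJobs are hypothetical helpers, and leaving one core free plus capping at eight workers are arbitrary choices made for the example.

```javascript
// Pick a pool size from the reported core count (assumed fallback: 4),
// leaving one core for the UI thread and capping the total.
function poolSize(cores = globalThis.navigator?.hardwareConcurrency ?? 4, max = 8) {
  return Math.max(1, Math.min(max, cores - 1));
}

// Round-robin assignment of jobs to worker slots.
function assignJobs(jobs, workers) {
  const slots = Array.from({ length: workers }, () => []);
  jobs.forEach((job, i) => slots[i % workers].push(job));
  return slots;
}

const files = ['a.heic', 'b.png', 'c.jpg', 'd.png', 'e.jpg'];
console.log(assignJobs(files, poolSize(3)));
```

A production pool would more likely hand out jobs as workers become idle, which balances better when images vary a lot in size.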

Google Sheets made a significant move in December 2024 by transitioning its calculation worker to WebAssembly Garbage Collection (WasmGC). This shift allowed the app to utilize the browser's native garbage collector instead of bundling a custom runtime, leading to smaller module sizes and noticeable performance improvements.

For hands-on learning, tutorials like the Rust-Wasm Game of Life offer step-by-step guidance. This tutorial walks you through building, integrating, and packaging Wasm code using wasm-pack. Additionally, open-source projects like Photon (for high-speed image processing) and FFmpeg.wasm (for client-side video transcoding) provide excellent examples you can explore and adapt.

The Future of WebAssembly

WebAssembly continues to grow, with exciting developments on the horizon that build upon its already impressive ecosystem.

Garbage Collection and Multithreading

WebAssembly 3.0 introduces several important features, including WasmGC, Memory64, Relaxed SIMD, Tail Calls, and Typed References. Among these, WasmGC (Garbage Collection) stands out as a game-changer. Now stable in Chrome 119+, Firefox 120+, and Safari 18.2+, WasmGC enables managed languages like Kotlin, Dart, and Java to run more efficiently in the browser while slashing bundle sizes by 60–80%.

Another key milestone is JSPI (JavaScript Promise Integration), which reached Phase 4 in 2026. This feature allows synchronous WebAssembly code to seamlessly interact with asynchronous Web APIs without blocking the main thread. Meanwhile, Stack Switching, currently in Phase 3, is set to enhance support for coroutines, async/await patterns, and green threads directly within WebAssembly.

On the multithreading front, 2026 marks a significant collaboration between Microsoft and the Uno Platform to bring native multithreading support for .NET. These efforts build on existing Web Workers and shared memory capabilities, pushing WebAssembly closer to native performance while maintaining its web-first approach. Together, these updates solidify WebAssembly's position as a powerful tool for modern web development.

WebAssembly as a Universal Runtime

WebAssembly is steadily evolving into a universal binary format capable of running on virtually any platform. A major leap in this direction is the Component Model, which allows Wasm modules written in languages like Rust, Go, and Python to work together seamlessly. This model enables high-level type sharing for data like strings and records, simplifying cross-language integration.

Additionally, WASI (WebAssembly System Interface) is advancing toward version 1.0, expected by late 2026. WASI provides a standardized, POSIX-like API that lets WebAssembly interact with files, networks, and system clocks outside the browser.

The idea of WebAssembly as a universal runtime gained considerable traction in 2025 when Akamai, the largest CDN provider, acquired Fermyon to integrate WebAssembly-based serverless technology into its global edge network. This shift highlights some of WebAssembly's key advantages over traditional containers: modules start in just 1–5 milliseconds compared to 50–500 milliseconds for containers, and they require significantly less memory - around 1MB+ per module versus 10MB+ for containers. By early 2026, WebAssembly was already being used by 5.5% of websites visited by Chrome users.

These advancements underscore WebAssembly's growing role in shaping the future of both web and serverless computing.

Conclusion

By 2026, WebAssembly has become a practical tool for web developers, offering near-native performance for CPU-heavy tasks while preserving the web's hallmark traits of security and portability. For challenges like image processing, complex mathematical computations, or data parsing that can bog down the main thread, Wasm provides a noticeable performance boost over JavaScript in compute-intensive scenarios.

But its reach now extends far beyond the browser. With the WASI standard defining system-level interfaces and the Component Model enabling seamless cross-language integration, WebAssembly has found a home in edge computing, serverless functions, and IoT devices. Its lightning-fast startup times - ranging from 1 to 5 milliseconds - make it especially well-suited for serverless environments. This growing ecosystem offers developers a solid foundation for tackling performance challenges.

To get started, profile your application to identify a specific performance bottleneck. Once pinpointed, you can migrate that function to Wasm using Rust and wasm-pack, a well-established toolchain for web development. WebAssembly works hand-in-hand with JavaScript, handling raw computational tasks while JavaScript manages UI and I/O, creating a balanced and efficient workflow.

With WasmGC now stable across major browsers and ongoing advancements in the ecosystem, WebAssembly's potential continues to expand. Whether you're developing high-performance tools like Figma, intricate applications like Google Earth, or venturing into edge computing, WebAssembly offers a powerful solution for modern web development.

FAQs

Do I really need Wasm, or is JavaScript enough?

When deciding between WebAssembly (Wasm) and JavaScript, it all comes down to what you're trying to achieve. As of 2026, JavaScript remains the go-to choice for tasks like UI interactions, DOM manipulation, and general-purpose scripting. It handles the majority of web app needs with ease.

On the other hand, Wasm is your best bet for performance-intensive tasks. Think image processing, cryptography, or even physics simulations - areas where raw speed is essential. Wasm isn’t just limited to browsers either. It’s a key player in serverless functions, edge computing, and even plugin development.

For most standard web applications, JavaScript will do the job perfectly. But if you're working on something resource-heavy or need a solution that works seamlessly across platforms, Wasm is where it truly stands out.

What’s the easiest way to pass strings and arrays between JS and Wasm?

The simplest method to exchange strings and arrays between JavaScript and WebAssembly is by using wasm-bindgen. This tool streamlines the process of high-level interoperability, making it easier to work with data across the two environments. It efficiently manages conversions, allowing you to call functions that directly accept and return strings or arrays.

To make this work, annotate your Rust functions with #[wasm_bindgen] and use types it knows how to convert, such as &str, String, Vec<u8>, or JsValue. On the JavaScript side these appear as ordinary strings, Uint8Array values, and plain JS values. This approach eliminates the need for manual memory management, ensuring smooth and hassle-free data transfer.

How do I keep my Wasm bundle small and fast to load?

If you're looking to streamline your WebAssembly (.wasm) files, focusing on build optimization and compression is key. Here’s how you can do it:

- Optimize Build Configurations: Enable Link Time Optimization (LTO) and set the optimization level to "z", which prioritizes size reduction.

- Use wasm-opt: Tools like wasm-opt -Oz are excellent for shrinking your .wasm binaries further.

Once your .wasm files are as compact as possible, compress them using gzip or Brotli during deployment. This step not only reduces file size but also improves load times, delivering a faster and smoother user experience.
