How standardized questions, rubrics, and work-sample coding tests improve prediction, reduce bias, and speed developer hiring.

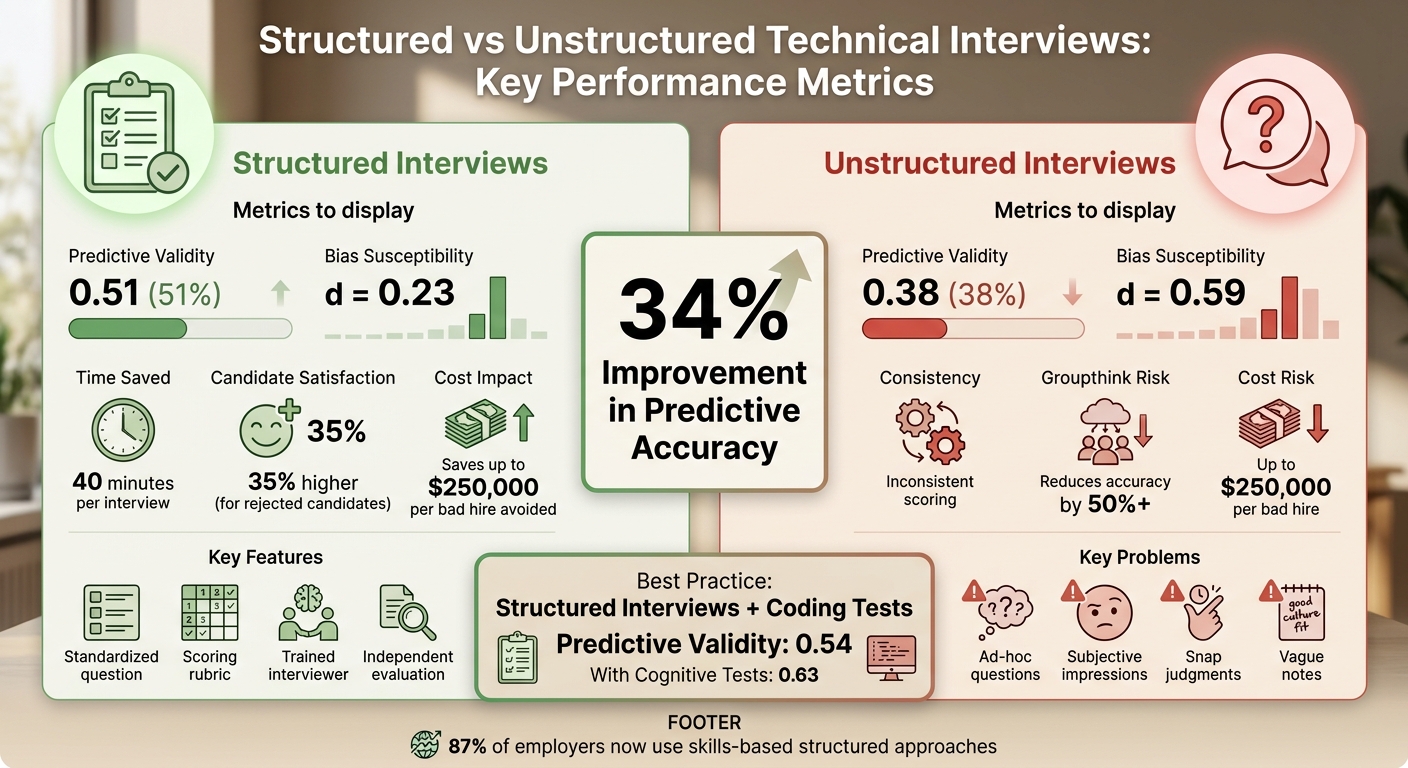

Structured technical interviews outperform unstructured ones in predicting developer performance. Research shows structured methods have a predictive validity of 0.51, compared to 0.38 for unstructured interviews - a 34% improvement. These interviews reduce bias in technical hiring, save time, and focus on job-relevant skills, offering a more reliable and fair hiring process.

Key takeaways:

- Predictive Accuracy: Structured interviews predict job success better (0.51 vs. 0.38).

- Bias Reduction: Bias susceptibility drops significantly (d = 0.23 vs. d = 0.59).

- Cost Savings: Avoiding bad hires can save up to $250,000 per hire.

- Candidate Satisfaction: Rejected candidates report 35% higher satisfaction.

- Efficiency: Saves 40 minutes per interview by eliminating unnecessary steps.

Structured interviews use standardized questions, scoring rubrics, and trained interviewers to ensure consistent evaluation. Adding practical coding tests aligned with job tasks further improves accuracy (predictive validity: 0.54). These methods help companies hire better talent while minimizing bias and inefficiencies.

[Figure: Structured vs Unstructured Technical Interviews: Performance Comparison]

Why Unstructured Interviews Fail

Unstructured interviews might feel natural, often resembling casual chats about past projects. But this relaxed style comes with serious drawbacks. Studies reveal that unstructured interviews have a predictive validity of just 0.38. That figure is a correlation with later job performance, not a hit rate: squared, it accounts for only about 14% of the variation in how hires actually perform.
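A validity coefficient is a correlation (r) between a method's scores and later job performance, not a percentage of correct predictions. Squaring it gives the share of performance variance the method accounts for. The short Python sketch below runs that arithmetic on the coefficients quoted in this article; the dictionary and function names are illustrative, not taken from any study.

```python
# Validity coefficients quoted in this article: correlations (r) between a
# selection method's scores and later job performance.
VALIDITY = {
    "unstructured interview": 0.38,
    "structured interview": 0.51,
    "work-sample test": 0.54,
    "structured interview + cognitive ability test": 0.63,
}


def variance_explained(r: float) -> float:
    """Share of the variance in job performance a predictor accounts for (r squared)."""
    return r * r


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of one validity coefficient over another."""
    return (new - old) / old


for method, r in VALIDITY.items():
    print(f"{method:<46} r = {r:.2f}   r^2 = {variance_explained(r):.0%}")

print(f"structured vs. unstructured: {relative_gain(0.51, 0.38):.0%} higher r")
```

The squared values (about 14% for unstructured interviews versus about 26% for structured ones) show why the move from 0.38 to 0.51 matters more than the raw 34% gain suggests: structured interviews account for roughly 1.8 times as much of the variance in job performance.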

Poor Prediction of Job Performance

As Erik Bernhardsson, CTO at Better.com, explains:

"The correlation between who did really well in the interview process and who performs really well at work is really weak."

The consequences of a bad developer hire can be steep - up to $250,000 when factoring in recruitment costs, salary, and lost productivity. Harvard Business Review even states that "unstructured interviews are essentially worthless in forecasting job performance". Why? Because these interviews often drift toward brain teasers, "gotcha" questions, or personality quirks that say little about what the job actually requires. Isaac Lyman from Stack Overflow sums it up well:

"There's not a single person in the world who can assess someone's job skills in an unscripted five-minute conversation - or, for that matter, a much longer one."

Unstructured interviews fail to measure job-relevant skills effectively. Beyond their poor ability to predict performance, they also lead to inconsistent scoring and increase the risk of bias.

Inconsistent Scoring and Bias

Without a standardized format or scoring system, interviewers often evaluate candidates inconsistently. One interviewer might focus on technical jargon, while another places more value on interpersonal skills. This lack of uniformity can result in wildly different scores for the same candidate. Over time, interviewers may forget specifics and rely on vague impressions, which further undermines objective evaluation.

Unstructured interviews also leave the door wide open for unconscious bias. Interviewers often make snap judgments within the first few minutes and then unconsciously look for evidence to confirm those initial impressions. Research shows that unstructured interviews are more than twice as prone to bias (d = 0.59) as structured interviews (d = 0.23). In one study, interviewers confidently formed opinions even when candidates’ responses were completely random. Dr. Melissa Harrell, Hiring Effectiveness Expert at Google, highlights the advantage of structured approaches:

"Structured interviews are one of the best tools we have to identify the strongest job candidates. Not only that, they avoid the pitfalls of some of the other common methods."

Another issue is the lack of consistent record-keeping. Some interviewers take detailed notes, while others scribble vague comments like "good cultural fit." This inconsistency makes fair comparisons nearly impossible. During debriefs, dominant voices in the room can sway others, leading to groupthink and reducing hiring accuracy by more than 50%.

What Makes a Technical Interview Structured

Structured technical interviews address the gaps left by unstructured formats by introducing consistency through standardized questions, clear scoring systems, and trained interviewers. This approach transforms subjective judgments into measurable data, offering a better prediction of job performance - 0.51 compared to 0.38 for unstructured interviews.

Three key elements ensure fairness and consistency: candidates answer the same set of questions in the same order, responses are assessed using predefined scoring rubrics, and trained interviewers apply these rubrics uniformly. This ensures a "3" on the scale means the same quality of performance, no matter who evaluates it.

Standardized Questions for All Candidates

In structured interviews, every candidate for the same role is asked identical, job-related questions in the same sequence. This removes a major source of bias: interviewers can no longer tailor questions to someone’s background or alma mater. Instead, the focus stays on gathering relevant data that predicts actual job performance.

The questions themselves are crafted from a formal job analysis, which pinpoints 4–6 core skills or competencies needed for the role. For instance, a backend developer might be assessed on API design, debugging, database optimization, and code review. Instead of generic puzzles, candidates face targeted questions, such as:

- Behavioral questions: "Tell me about a time you optimized a slow database query."

- Situational questions: "How would you handle a production outage during a major release?"
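One way to enforce "same questions, same order" is to derive the interview plan from a single competency-keyed question bank and hand every candidate the same immutable sequence. A minimal Python sketch, assuming a hypothetical `QUESTION_BANK` that reuses the two example questions above; the competency labels attached to them are assumptions.

```python
from typing import Dict, List, Tuple

# Hypothetical question bank for a backend role: competency -> questions, in the
# order they will be asked. The competency labels are illustrative assumptions.
QUESTION_BANK: Dict[str, List[str]] = {
    "database optimization": [
        "Tell me about a time you optimized a slow database query.",
    ],
    "debugging": [
        "How would you handle a production outage during a major release?",
    ],
}


def build_interview_plan(bank: Dict[str, List[str]]) -> Tuple[Tuple[str, str], ...]:
    """Flatten the bank into one fixed, immutable sequence of (competency, question).

    Every candidate for the role gets this exact tuple, so questions cannot be
    added, dropped, or reordered per candidate.
    """
    # Dicts preserve insertion order (Python 3.7+), so the order is deterministic.
    return tuple((comp, q) for comp, questions in bank.items() for q in questions)


PLAN = build_interview_plan(QUESTION_BANK)
for number, (competency, question) in enumerate(PLAN, start=1):
    print(f"Q{number} [{competency}] {question}")
```

Returning a tuple rather than a list is a small design choice: the plan cannot be edited in place for an individual candidate.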

A great example of this in action is Slack’s 2020 overhaul of its hiring process. They introduced a structured work-sample test where developers performed a code review - something they would do regularly on the job. This change cut the time-to-hire for software engineers from over 200 days to just 83.

This consistency sets the stage for objective evaluation, which is where scoring rubrics come into play.

Scoring Rubrics That Remove Guesswork

Scoring rubrics replace vague impressions like "good culture fit" with specific, measurable criteria tied to the required competencies. These rubrics often use behaviorally anchored rating scales (BARS), which outline what each score on a 1–5 scale represents. For example:

- A "5" for debugging might mean: "Provided a detailed example, used a systematic approach, and discussed preventative strategies."

- A "2" might mean: "Gave a vague answer with minimal explanation and limited skill demonstration."
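A behaviorally anchored scale can be stored as plain data, so interviewers look up the written anchor for a score instead of interpreting the number themselves. This Python sketch uses the "5" and "2" anchors above; the anchors for 1, 3, and 4 are hypothetical placeholders, as are the names `DEBUGGING_BARS` and `anchor_for`.

```python
from typing import Dict

# Hypothetical BARS for "debugging" on a 1-5 scale. The 5 and 2 anchors come from
# the examples above; the 4, 3, and 1 anchors are illustrative placeholders.
DEBUGGING_BARS: Dict[int, str] = {
    5: "Provided a detailed example, used a systematic approach, and discussed preventative strategies.",
    4: "Gave a concrete example with a systematic approach, but little on prevention.",
    3: "Gave a relevant example with a partly systematic approach.",
    2: "Gave a vague answer with minimal explanation and limited skill demonstration.",
    1: "Could not describe a relevant example or approach.",
}


def anchor_for(score: int, scale: Dict[int, str] = DEBUGGING_BARS) -> str:
    """Return the written anchor for a score; reject anything that is not on the scale."""
    if score not in scale:
        raise ValueError(f"score must be one of {sorted(scale)}, got {score!r}")
    return scale[score]


print(anchor_for(5))
```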

Jacob Price from Pin.com highlights the value of rubrics:

"A scoring rubric eliminates 'I liked her energy' and replaces it with documented, comparable evidence."

To avoid bias, interviewers score answers immediately after the interview and submit their evaluations independently, so no one’s opinion is swayed by others. This helps prevent recency bias and anchoring bias, where a senior team member’s view might unduly influence the group.
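Independent submission can also be enforced in tooling by keeping scores sealed until every interviewer on the loop has submitted. The class below is a hypothetical Python sketch of that rule, not the API of any particular applicant tracking system; the interviewer names are placeholders.

```python
from typing import Dict, Iterable


class SealedScorecards:
    """Collect scores independently; reveal them only after everyone has submitted."""

    def __init__(self, interviewers: Iterable[str]) -> None:
        self._expected = set(interviewers)
        self._scores: Dict[str, int] = {}

    def submit(self, interviewer: str, score: int) -> None:
        if interviewer not in self._expected:
            raise KeyError(f"{interviewer} is not on this interview loop")
        if interviewer in self._scores:
            raise ValueError(f"{interviewer} already submitted; scores are final")
        self._scores[interviewer] = score

    def reveal(self) -> Dict[str, int]:
        # Nobody sees anyone else's score while submissions are still outstanding.
        missing = self._expected.difference(self._scores)
        if missing:
            raise RuntimeError(f"still waiting on: {', '.join(sorted(missing))}")
        return dict(self._scores)


cards = SealedScorecards(["interviewer_1", "interviewer_2"])
cards.submit("interviewer_1", 4)
# Calling cards.reveal() here would raise RuntimeError: interviewer_2 has not submitted.
cards.submit("interviewer_2", 2)
print(cards.reveal())
```

Making submitted scores final is a deliberate choice in this sketch: it stops an interviewer from quietly revising a score after hearing colleagues in the debrief.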

Still, even the best rubrics are only as effective as the people applying them, which is why interviewer training is critical.

Trained Interviewers Who Apply Standards Consistently

To ensure consistency, interviewers need proper training. Before starting a hiring process, teams hold calibration sessions to align on what constitutes a "good" or "bad" answer for each question. These short sessions, often just 30 minutes, involve scoring mock answers together until everyone agrees on the rubric standards.

Calibration reduces discrepancies: without alignment, one interviewer might score an answer a "4" while another gives it a "2". Training also helps interviewers recognize and mitigate unconscious bias. When paired with diverse panels and blind scoring, structured interviews can reduce bias effects by up to 85%. In fact, structured interviews show much lower bias susceptibility (d = 0.23) than unstructured ones (d = 0.59).
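A simple way to tell when a calibration session has converged is to measure, for each mock answer, how many pairs of interviewers land within one point of each other. A minimal Python sketch; the one-point tolerance, the session data, and the function name are assumptions.

```python
from itertools import combinations
from typing import Dict, List


def agreement_rates(scores_by_answer: Dict[str, List[int]], tolerance: int = 1) -> Dict[str, float]:
    """Per mock answer, the share of interviewer pairs whose scores differ by at most `tolerance`."""
    rates: Dict[str, float] = {}
    for answer, scores in scores_by_answer.items():
        pairs = list(combinations(scores, 2))
        close = sum(1 for a, b in pairs if abs(a - b) <= tolerance)
        rates[answer] = close / len(pairs) if pairs else 1.0
    return rates


# Hypothetical session: three interviewers score two mock answers on a 1-5 rubric.
session = {"mock_answer_1": [4, 4, 3], "mock_answer_2": [4, 2, 3]}
for answer, rate in agreement_rates(session).items():
    print(f"{answer}: {rate:.0%} of pairs within tolerance")
```

Here `mock_answer_2` would go back for discussion, because one interviewer's 4 sits two points away from another's 2.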

Beyond fairness, structured interviews save time. By eliminating the need for ad hoc questions and deliberations, they shave about 40 minutes off each interview. Candidates also notice the difference - those who go through structured interviews are 35% more satisfied with the process, even if they don’t get the job.

Designing Coding Tests That Mirror Real Work

Building on the foundation of structured technical interviews, practical coding tests bring an added layer of fairness and insight. The best coding tests focus on assessing actual coding skills, not rote memorization. Instead of asking candidates to solve abstract problems like reversing a binary tree on a whiteboard, consider tasks that reflect real job responsibilities - like triaging a bug report, parsing a JSON file, or reviewing a pull request. These work-sample tests are proven to be effective, with a predictive validity score of 0.54.

Work-Sample Tests vs. Algorithm Puzzles

Real-world coding tests are a game-changer when it comes to evaluating job readiness. Work-sample tests replicate real job tasks, allowing candidates to demonstrate their abilities in a realistic environment, complete with access to the tools and resources they’d use on the job. By contrast, algorithm puzzles often test academic knowledge or memory under unrealistic constraints, such as banning internet access.

A great example of this approach is GitHub's "Interview-bot", introduced in March 2022. Candidates are given a real GitHub repository containing a practical task. They work on the exercise using their own tools, submit a pull request, and the bot anonymizes their submission before an engineer evaluates it using a standardized rubric.
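How the Interview-bot anonymizes submissions isn't covered here. As a rough illustration of the general idea only, this hypothetical Python function strips identity-bearing header lines and e-mail addresses from a `git format-patch` style text before a reviewer sees it. The header list and regular expressions are assumptions, not GitHub's implementation.

```python
import re

# Header lines that commonly carry a name or e-mail in a `git format-patch` file.
IDENTITY_HEADERS = re.compile(
    r"^(From|Author|Signed-off-by|Co-authored-by):.*$", re.MULTILINE
)
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def anonymize_patch(patch_text: str) -> str:
    """Redact obvious identity markers from a patch before review.

    Names can still leak through comments or identifiers, so this is a first
    pass, not a guarantee of anonymity.
    """
    text = IDENTITY_HEADERS.sub(r"\1: [redacted]", patch_text)
    return EMAIL.sub("[redacted-email]", text)


sample = (
    "From: Jane Doe <jane@example.com>\n"
    "Subject: Fix pagination off-by-one\n"
    "\n"
    "+# questions? jane@example.com\n"
)
print(anonymize_patch(sample))
```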

To make these tests fair and respectful of candidates' time, keep the tasks under three hours. Test the exercise internally with your own engineers first. They have context that candidates lack, so if they need more than an hour to complete it, the task is probably too demanding for a three-hour candidate window.
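The internal dry-run rule of thumb amounts to a simple multiplier: one hour of internal effort maps to the three-hour candidate window. A minimal Python sketch, where the 3x factor is an assumption read off that rule rather than a measured constant, and `fits_time_box` is a hypothetical helper.

```python
def fits_time_box(internal_minutes: float, candidate_multiplier: float = 3.0,
                  window_minutes: float = 180.0) -> bool:
    """Estimate candidate time from an internal dry run and compare it to the window.

    The default 3x multiplier is an assumption taken from the rule of thumb
    (one internal hour ~ a three-hour candidate window).
    """
    return internal_minutes * candidate_multiplier <= window_minutes


print(fits_time_box(50))  # True: roughly 150 candidate minutes
print(fits_time_box(75))  # False: roughly 225 candidate minutes
```

If your own dry runs show candidates taking longer than the multiplier predicts, adjust `candidate_multiplier` rather than the window.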

Sample Scoring Matrix for Coding Tests

A well-defined scoring matrix helps eliminate bias by focusing on measurable outcomes. Here’s an example of how to assess key competencies in coding tests:

| Score | Code Quality | Problem Solving | Testing/Debugging |
|---|---|---|---|
| 5 - Exceptional | Clean, well-structured code that follows best practices and handles edge cases | Breaks down problems logically and uses data-driven solutions | Writes thorough tests and quickly identifies root causes |
| 3 - Adequate | Functional code with minor style issues | Solves the problem but misses opportunities for optimization | Includes basic tests and uses a methodical, though slower, debugging approach |
| 1 - Insufficient | Disorganized code that ignores conventions and fails edge cases | Struggles to break down the problem or relies on trial-and-error | No tests written and difficulty identifying or fixing bugs |
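Recording each reviewer's matrix scores in a fixed structure makes them easy to validate and to turn into talking points for the follow-up discussion with the candidate. A minimal Python sketch; allowing the in-between scores 2 and 4, and flagging the lowest-scoring competency as the one to probe, are assumptions layered on the matrix above.

```python
from statistics import mean
from typing import Dict

COMPETENCIES = ("Code Quality", "Problem Solving", "Testing/Debugging")


def summarize(scores: Dict[str, int]) -> str:
    """Validate one reviewer's matrix scores and pick the area to probe in the walkthrough."""
    if set(scores) != set(COMPETENCIES):
        raise ValueError(f"expected exactly these competencies: {COMPETENCIES}")
    for name, score in scores.items():
        # 5, 3 and 1 are anchored in the matrix; 4 and 2 are assumed "in between" scores.
        if score not in (1, 2, 3, 4, 5):
            raise ValueError(f"{name}: score must be a whole number from 1 to 5")
    weakest = min(COMPETENCIES, key=lambda c: scores[c])
    return (f"average {mean(scores.values()):.1f}; "
            f"probe '{weakest}' ({scores[weakest]}) in the walkthrough")


print(summarize({"Code Quality": 5, "Problem Solving": 3, "Testing/Debugging": 2}))
```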

Use the results as a conversation starter, not a strict pass/fail decision. Encourage candidates to walk through their code, explain their decisions, and discuss what they’d improve with more time. This approach offers a deeper look into their problem-solving and engineering thought process.

How to Train and Calibrate Interviewers

Even the most thoughtfully designed rubric won't work if interviewers don't know how to use it. Training transforms a structured interview into a reliable hiring tool. Without proper training, interviewers might fall back on instinct, and scoring could become inconsistent as individuals interpret ratings like "3" or "5" differently over time.

Reducing Unconscious Bias Through Training

Bias training isn't about generic awareness - it’s about targeting specific biases that can skew technical evaluations. Focus on addressing issues like confirmation bias, the halo effect, and similarity bias. Teach interviewers to document exactly what a candidate says or does, rather than making subjective notes about personality or "vibe".

Take Yelp's Engineering team, for example. In May 2021, Director Kent Wills and Technical Recruiting Manager Grace Jiras Yuan noticed that men advanced more often than women, despite having identical coding performance. They tackled this by introducing a points-based rubric that required written explanations for any point deductions. This change not only closed the gender pass-rate gap but also improved diversity at the offer stage.
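Yelp's actual rubric isn't reproduced here, so the following is a hypothetical Python sketch of the mechanism described: a points-based score in which a deduction cannot be recorded without a written reason. The class names and point values are illustrative.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Deduction:
    points: int
    reason: str


@dataclass
class PointsRubric:
    """Hypothetical points-based rubric: every deduction needs a written reason."""
    max_points: int
    deductions: List[Deduction] = field(default_factory=list)

    def deduct(self, points: int, reason: str) -> None:
        if points <= 0:
            raise ValueError("a deduction must remove at least one point")
        if not reason.strip():
            raise ValueError("a written explanation is required for every deduction")
        self.deductions.append(Deduction(points, reason.strip()))

    @property
    def total(self) -> int:
        return max(0, self.max_points - sum(d.points for d in self.deductions))


rubric = PointsRubric(max_points=10)
rubric.deduct(2, "No test covers the empty-input case.")
print(rubric.total)  # 8
for d in rubric.deductions:
    print(f"-{d.points}: {d.reason}")
```

Because every lost point carries its own explanation, reviewers can audit deductions across candidates and spot patterns, such as harsher deductions for one group, that a single overall score would hide.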

Another key strategy: Have interviewers submit their scores and justifications independently before any group discussion. This avoids "anchoring bias", where dominant voices in a group could sway others’ opinions. Structured interviews combined with independent scoring and diverse panels can reduce bias effects by up to 85%.

Calibration Sessions for Scoring Alignment

Calibration sessions ensure everyone on the team interprets scores consistently. Start by building a benchmark library with 3–5 anonymized examples of "clear pass", "borderline", and "clear fail" candidate submissions. During monthly 45-minute calibration meetings, have participants silently score the same benchmark submission before comparing their results. This method highlights differences in scoring styles and identifies who might be grading too strictly or leniently.
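Comparing each interviewer's silent scores with the agreed benchmark scores shows who tends to grade too strictly or too leniently. A minimal Python sketch; the benchmark names, the reference scores on a 1–4 scale, and the function name are hypothetical.

```python
from statistics import mean
from typing import Dict

# Hypothetical benchmark library: agreed reference scores (1-4 scale) for
# anonymized submissions chosen as clear pass, borderline, and clear fail.
BENCHMARK: Dict[str, int] = {"clear_pass": 4, "borderline": 2, "clear_fail": 1}


def leniency(silent_scores: Dict[str, Dict[str, int]]) -> Dict[str, float]:
    """Mean signed gap between each interviewer's scores and the benchmark.

    Positive values suggest lenient grading, negative values strict grading.
    """
    return {
        who: mean(score - BENCHMARK[sub] for sub, score in scores.items())
        for who, scores in silent_scores.items()
    }


session = {
    "interviewer_a": {"clear_pass": 4, "borderline": 3, "clear_fail": 2},
    "interviewer_b": {"clear_pass": 3, "borderline": 2, "clear_fail": 1},
}
for who, gap in leniency(session).items():
    print(f"{who}: {gap:+.2f}")
```

A signed mean is used instead of an absolute one so that the direction of the drift, lenient or strict, stays visible.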

"The goal is not to find the most novel question. It's to create a repeatable work sample that produces comparable evidence across candidates and interviewers."

– Jacob Price, Pin

New interviewers should attend at least one calibration session before conducting interviews on their own. If disagreements persist about a specific competency, update the rubric’s behavioral anchors to clarify expectations. Regular calibration prevents "scoring drift" and keeps evaluations consistent as your team or roles change over time.

With a well-trained and calibrated team, you're ready to decide when to use panel interviews versus sequential formats.

Panel vs. Sequential Interview Formats

Once you’ve got trained and calibrated interviewers, the next step is picking the interview format that fits your hiring goals. Both panel and sequential interviews offer distinct advantages, so the choice depends on what you want to achieve and the resources your team has available.

When Panel Interviews Work Best

Panel interviews involve multiple interviewers meeting with the candidate at the same time. This format is ideal for smaller teams that need to reach a decision quickly and collaboratively. By having multiple evaluators in the room, this method helps reduce individual bias and increases the accuracy of predicting job performance.

"Interviews by committee... have been shown to make interviews more valid and predictive of future job performance."

– Qualified.io

To make panel interviews effective, assign specific roles to each participant. For example, one person can lead the questioning while others focus on taking detailed notes. At the end of the session, each panelist should independently submit their scores. Another advantage of this format is that it saves time for candidates - they only need to share their background once, rather than repeating it across multiple sessions.

When to Use Sequential Interviews

If you need to evaluate specific technical skills or competencies in detail, sequential interviews are a better fit. Unlike panel interviews, which provide a broad overview, sequential interviews allow for a focused, in-depth assessment of particular areas.

In this format, the candidate meets one-on-one with different interviewers, each focusing on a specific skill such as system design, coding, or domain expertise. This can be especially useful for senior-level positions where specialized knowledge or architectural experience needs to be thoroughly assessed. Candidates often feel less pressure in this setup since they’re not performing in front of a group.

That said, sequential interviews require more time for both your team and the candidate. And there’s a risk of losing strong candidates, as 61% of job seekers tend to accept the first offer they receive. To avoid this, streamline the process by keeping your interview loop to no more than four stages and completing it within 7–10 days. This keeps the process efficient without compromising the quality of your evaluation.
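The loop guideline (at most four stages, finished within 7–10 days) can be checked against a planned schedule before invitations go out. A minimal Python sketch; measuring the span from the first to the last stage date and using 10 days as the upper bound are assumptions, and the dates are made up.

```python
from datetime import date
from typing import List


def check_loop(stage_dates: List[date], max_stages: int = 4, max_days: int = 10) -> List[str]:
    """Return problems with a planned interview loop; an empty list means it fits."""
    problems = []
    if len(stage_dates) > max_stages:
        problems.append(f"{len(stage_dates)} stages; keep it to {max_stages} or fewer")
    if stage_dates:
        # Span from the first stage to the last one, in calendar days.
        span = (max(stage_dates) - min(stage_dates)).days
        if span > max_days:
            problems.append(f"loop spans {span} days; aim to finish within {max_days}")
    return problems


plan = [date(2024, 3, 4), date(2024, 3, 6), date(2024, 3, 11), date(2024, 3, 18)]
print(check_loop(plan))
```

In this example the stage count is fine, but the two-week span is flagged, so the last stage would need to move earlier.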

Making Hiring Decisions from Data, Not Gut Feel

Once your team wraps up the interview loop, the real challenge begins: turning all those individual scores into a final hiring decision. This is where things can go off track if subjective opinions creep in. As Drew Whitehurst, Director of Marketing at interviewstream, explains:

"The structure built into the interview format falls apart if evaluation is left to individual judgment, informal notes, and memory."

The solution? Aggregate scores and document evidence methodically, so that every hiring decision rests on recorded data rather than gut instinct.

How to Combine Scores Across Interview Stages

Using a rubric-based scoring system, this step consolidates individual evaluations into a clear hiring decision. Stick to a consistent numeric scale, such as 1 to 4, across all interview stages. Independent scoring remains critical to avoid bias. You can also weight competencies differently by role: a senior backend engineer may need top-notch system design skills, while a junior developer's ability to communicate and learn might take precedence. It's also helpful to set clear thresholds upfront, such as a "no-hire" decision if a candidate scores a 1 in any core area.

A practical tip: avoid half-points on your scale. A 1-4 system without decimals forces interviewers to make a clear decision about whether a candidate meets the bar. Additionally, finalize scores immediately after each interview. Waiting even a few hours can lead to memory fading and recency bias.
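The aggregation rules described here (a whole-point 1–4 scale, role-specific weights, and a veto when a core competency scores 1) fit in one small function. A minimal Python sketch; the weights, the 3.0 bar, and the competency names are illustrative assumptions, not recommended values.

```python
from typing import Dict, Set

SCALE = (1, 2, 3, 4)  # whole points only: no 2.5 or 3.5


def recommend(scores: Dict[str, int], weights: Dict[str, float], core: Set[str],
              bar: float = 3.0) -> str:
    """Combine per-competency scores into a hire / no-hire recommendation.

    The role weights and the 3.0 bar are illustrative assumptions; the veto on
    a 1 in any core competency mirrors the threshold rule in the text.
    """
    for name, score in scores.items():
        if score not in SCALE:
            raise ValueError(f"{name}: {score!r} is not a whole score on the 1-4 scale")
    if any(scores[c] == 1 for c in core):
        return "no-hire (scored 1 in a core competency)"
    weighted = sum(scores[c] * w for c, w in weights.items()) / sum(weights.values())
    verdict = "hire" if weighted >= bar else "no-hire"
    return f"{verdict} (weighted score {weighted:.2f})"


scores = {"system design": 4, "coding": 3, "communication": 2}
senior = {"system design": 2.0, "coding": 1.0, "communication": 1.0}
junior = {"system design": 0.5, "coding": 1.0, "communication": 2.0}
print(recommend(scores, senior, core={"coding"}))  # hire (weighted score 3.25)
print(recommend(scores, junior, core={"coding"}))  # no-hire (weighted score 2.57)
```

The same raw scores lead to different outcomes under the senior and junior weightings, which is the point of agreeing on role weights before the loop starts rather than during the debrief.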

Running Evidence-Based Debrief Sessions

Once scores are compiled, debrief sessions help solidify the data-driven process. Start by displaying scores side-by-side so the team can quickly identify areas of agreement or disagreement. As Robert Ardell, Co-Founder and Strategic Advisor at KORE1, points out:

"If your post-interview discussion starts with 'I liked them' or 'I didn't get a good vibe,' you don't have a debrief process, you have a vote."

Focus the conversation on documented evidence - specific quotes, observed behaviors, or trade-offs the candidate discussed. When there are significant differences in scores, like one interviewer giving a 2 and another a 4, use the debrief to dig into the reasoning by referring to the rubric. Avoid pushing the team toward consensus just for the sake of agreement. Instead, zero in on the competencies where opinions differ. Many teams now use automated scorecards that calculate a hire/no-hire recommendation based on rubric scores. This system minimizes reliance on personal feelings and keeps decisions anchored in the actual interview outcomes.
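A debrief agenda can be generated straight from the scorecards: show every score side by side, then list the competencies with the widest spread first. A minimal Python sketch; the two-point cut-off (a 2 next to a 4) and the sample data are assumptions, and every interviewer is assumed to have scored every competency.

```python
from typing import Dict, List, Tuple


def debrief_agenda(
    cards: Dict[str, Dict[str, int]], min_spread: int = 2
) -> List[Tuple[str, int]]:
    """Print scores side by side and return competencies to discuss, widest spread first.

    `cards` maps interviewer -> {competency: score}; every interviewer is
    assumed to have scored every competency.
    """
    interviewers = list(cards)
    competencies = sorted(next(iter(cards.values())))
    print("competency".ljust(16) + "".join(name.ljust(16) for name in interviewers))
    agenda = []
    for comp in competencies:
        row = [cards[name][comp] for name in interviewers]
        print(comp.ljust(16) + "".join(str(s).ljust(16) for s in row))
        spread = max(row) - min(row)
        if spread >= min_spread:
            agenda.append((comp, spread))
    # sorted() is stable even with reverse=True, so ties keep alphabetical order.
    return sorted(agenda, key=lambda item: item[1], reverse=True)


cards = {
    "interviewer_1": {"coding": 4, "system design": 2, "communication": 3},
    "interviewer_2": {"coding": 4, "system design": 4, "communication": 3},
    "interviewer_3": {"coding": 3, "system design": 3, "communication": 1},
}
print(debrief_agenda(cards))
```

Coding, where the scores are within one point, stays off the agenda, so the discussion time goes to communication and system design.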

Answering Common Objections

Switching to structured interviews often faces resistance, especially from hiring managers who have long trusted their instincts. Two frequent objections - "We need to assess culture fit" and "Developers hate rigid processes" - lose their weight when you dig into the evidence.

Why "Culture Fit" Can Be Problematic

When hiring managers say a candidate "isn't a culture fit", that reasoning can conceal unconscious bias. Research shows that vague "culture fit" judgments tend to amplify subjective impressions and confirmation bias. The broader numbers point the same way: unstructured interviews are linked to a bias susceptibility of d = 0.59, while structured interviews reduce this to d = 0.23.

Tom Kenaley, Senior Partner at KORE1, cuts to the heart of the issue:

"'Culture fit' as an unscored interview dimension is where bias lives. Replace it with 'values alignment' and write behavioral questions for it."

Instead of asking if someone would "grab a beer with the team", focus on behavioral questions tied to specific competencies. For instance, asking, "Tell me about a time you disagreed with a team decision but supported it anyway," allows you to measure collaboration in a concrete way. One technical staffing firm in Irvine, California, revamped its process using competency-based rubrics. A backend engineer, previously rejected three times for being "uncomfortable on camera" and "not a culture fit", was re-evaluated. Scoring 3.8 out of 4.0 across technical areas, they were hired within 48 hours and became a top performer over the next eleven months.

This shift to measurable evaluation ensures candidates are judged on their skills, not on vague perceptions.

Why Developers Appreciate Transparent and Objective Processes

Structured interviews don’t just improve accuracy; they also make the process less stressful for candidates. Developers rate structured interviews 21% fairer overall and 36% fairer in terms of assessment compared to unstructured formats. Jacob Price from Pin.com highlights this:

"The consistency felt fairer, because every candidate could see they were being measured on the same criteria as everyone else, and that transparency matters more to strong candidates than most hiring managers realize."

Unstructured interviews, on the other hand, often leave room for irrelevant or even discriminatory questions - something 53% of job seekers report experiencing. By focusing on job-relevant criteria, structured interviews not only reduce anxiety but also create a level playing field where candidates are evaluated solely on their abilities.

Templates and Tools to Get Started

Templates and tools help you maintain the consistency and fairness that structured technical interviews depend on, and reliable ones let you launch the process quickly.

Download Interview Templates and Rubrics

To begin, focus on three primary documents: a role competency matrix, a standardized question bank, and scoring rubrics. The competency matrix outlines 5–7 core skills - like problem decomposition, code quality, or system design - paired with behavioral anchors tailored to various seniority levels. The question bank should include carefully crafted behavioral questions and hypothetical scenarios tied to each competency. Scoring rubrics provide standardized scales, typically ranging from 1–4 or 1–5, with clear descriptions of what constitutes poor, average, and exceptional responses.

Another helpful resource is a benchmark library. This collection of anonymized past candidate submissions (e.g., clear pass, borderline, clear fail) allows you to train and calibrate new interviewers effectively. With the growing importance of AI skills, modern rubrics should also assess sub-competencies like effective prompting and debugging AI-generated code.

To manage these templates, many teams use tools like Notion, Google Sheets, and CoderPad. Notion is ideal for maintaining a centralized repository of matrices, rubrics, and benchmarks (free plans are available, with paid options for team permissions). Google Sheets is commonly used for capturing scorecards and logging evidence (included in Google Workspace). For technical assessments, platforms like CoderPad provide standardized coding and debugging exercises, with pricing based on team size.

Once you have these templates, the next step is tailoring them to your specific hiring needs.

How to Customize Templates for Your Roles

Start with the standard templates and adapt them to fit the unique demands of each role. Identify 4–6 key competencies for each role family - such as backend, frontend, or data engineering - by analyzing job descriptions and studying the traits of your top performers. Use this information to build a role competency matrix that defines expectations for each seniority level (e.g., L3, L4, L5) with detailed behavioral anchors.

For each competency, include clear, observable examples of what qualifies as a "poor", "solid", or "outstanding" response. Avoid vague descriptions and instead use specific language. For instance, write, "Candidate explained two specific trade-offs between SQL and NoSQL databases, citing latency and consistency concerns", instead of "Candidate seemed to understand databases". Additionally, align work samples with the actual responsibilities of the role. For example, use a debugging exercise for roles focused on maintenance or a system design prompt for senior architects.

To ensure evaluations are evidence-based, add an "evidence" field to scorecards where interviewers can document specific quotes or observed behaviors. This prevents subjective judgments and ensures decisions are grounded in concrete examples. To further align your team, hold monthly 45-minute calibration sessions where everyone scores the same benchmark submission and discusses any scoring discrepancies.
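An evidence field is easiest to enforce when a score simply cannot be saved without it. A minimal Python sketch of such a scorecard record, with a CSV export that could be imported into a shared spreadsheet; the field names and sample values are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Iterable


@dataclass
class ScorecardEntry:
    """One rubric score plus the evidence behind it (field names are hypothetical)."""
    candidate_id: str
    interviewer: str
    competency: str
    score: int
    evidence: str  # a direct quote or observed behavior, not an impression

    def __post_init__(self) -> None:
        if not self.evidence.strip():
            raise ValueError(f"{self.competency}: scores must come with evidence")


def to_csv(entries: Iterable[ScorecardEntry]) -> str:
    """Serialize entries as CSV text, e.g. for import into a shared spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ScorecardEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


entry = ScorecardEntry(
    candidate_id="cand-017",
    interviewer="interviewer_1",
    competency="databases",
    score=3,
    evidence="Explained two specific trade-offs between SQL and NoSQL databases, "
             "citing latency and consistency concerns.",
)
print(to_csv([entry]))
```

The `csv` module quotes the evidence text automatically because it contains a comma, so free-text quotes survive the round trip into a spreadsheet.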


These templates are designed to adapt as your organization's hiring needs evolve, supporting research-backed practices that make structured interviews a reliable method for identifying top talent.

Conclusion

Structured technical interviews provide a dependable way to identify top developer talent. Pairing them with cognitive ability tests raises their combined predictive validity to 0.63.

Considering that a poor technical hire can cost a company an average of $17,000, implementing a skills-based hiring framework quickly proves its worth. Research from Google also highlights the benefits: structured processes save about 40 minutes per interview and improve satisfaction among rejected candidates by 35%.

Today, 87% of employers incorporate skills-based approaches during interviews, reflecting industry best practices. By standardizing questions, applying anchored rubrics, and ensuring interviewers score independently, companies can cut bias by as much as 85%. The case for structured hiring is not only compelling but also backed by tangible results.

FAQs

How do I run a job analysis to pick the right competencies?

To kick things off, perform a thorough job analysis to pinpoint the essential skills, knowledge, and behaviors required for the position. This step ensures that the competencies you evaluate are directly tied to what the role actually demands. Once identified, use these competencies to craft specific, targeted questions and develop clear scoring rubrics. Taking this structured route helps keep interviews focused, ensures fairness, and improves the ability to predict how well a candidate will perform on the job.

What’s the best way to set pass/fail thresholds from rubric scores?

The most effective way to establish pass/fail thresholds is by implementing a numerical scoring system that connects performance levels to specific scores. Start by creating a standardized rubric to evaluate each skill on a scale - say, 1 to 5. Then, set a minimum passing score that represents the required level of proficiency, such as a 3 or above. This method promotes clarity, minimizes bias, and relies on measurable data instead of subjective opinions.

How can small teams adopt structured interviews without slowing hiring?

Small teams can run structured interviews effectively by adopting simplified frameworks with standardized questions and scoring rubrics. Keeping each round focused on a few job-specific questions helps cut down the number of interview rounds. Creating an interview kit - complete with templates and rubrics - ensures consistency across interviews while reducing prep time for interviewers. This approach speeds up the hiring process, ensures fairness, and maintains accuracy in predicting candidate success.