Guide for technical recruiters on 2026 AI hiring rules: bias audits, consent, data retention, salary transparency, and human oversight.

In 2026, technical recruiters face stricter hiring laws, especially with the growing use of AI in recruitment. Key compliance updates include:

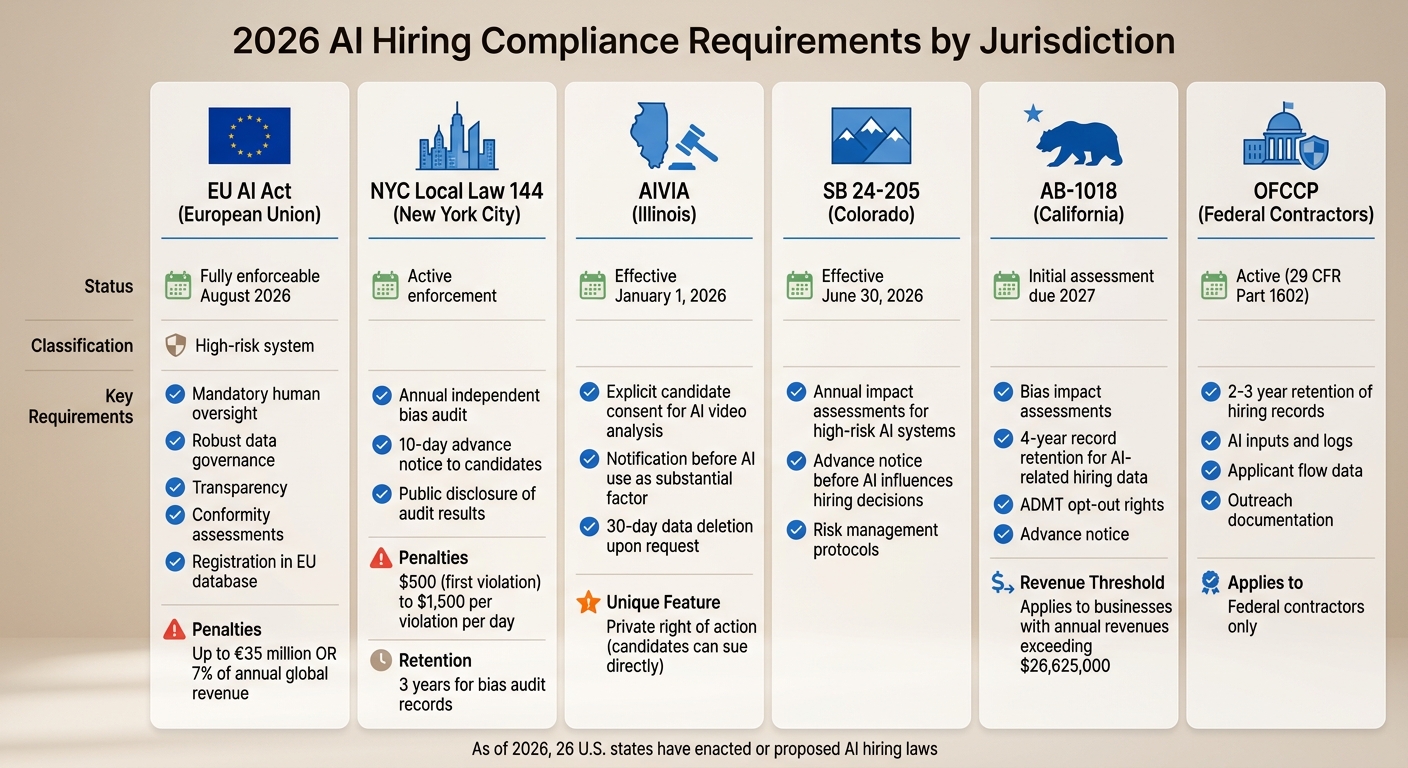

- AI Regulations: Tools for resume screening (such as AI resume parsing tools) and hiring are classified as "high-risk" under the EU AI Act, requiring human oversight and bias audits. U.S. states like New York, Illinois, and California now require bias audits, candidate notifications, and data retention.

- Bias Prevention: The "Four-Fifths Rule" is a benchmark for detecting discrimination. For example, AI advancing one group at significantly higher rates than another can lead to penalties.

- Data Privacy: GDPR, CCPA, and other laws mandate transparency, candidate consent, and strict data retention policies (e.g., California requires 4 years for AI-related hiring data).

- Salary Transparency: Many states now require salary ranges in job postings.

- Remote Hiring: International hires must comply with local laws, including privacy and wage regulations.

Failing to comply can lead to fines, lawsuits, and reputational damage. Recruiters must prioritize clear processes, human oversight, and transparency to stay aligned with these regulations.

Compliance Regulations for Technical Recruiters in 2026

Figure: 2026 AI Hiring Compliance Requirements by Jurisdiction

As compliance rules continue to evolve, technical recruiters face a growing web of global, federal, and state regulations related to candidate data, AI screening, and hiring practices. By April 2026, at least 26 U.S. states have either enacted or proposed laws governing AI in hiring. Meanwhile, the EU AI Act enforces stringent controls on recruitment systems across Europe. Below, we break down the key data protection and bias prevention measures outlined in these regulations.

GDPR, CCPA, and EEOC Requirements

Under GDPR Article 22, candidates in the EU have the right to request manual review of decisions made by automated systems. The EU AI Act identifies recruitment AI as "high-risk", requiring transparency in tech hiring, robust data governance, and human oversight. Companies failing to comply face penalties of up to €35 million or 7% of their annual global revenue.

In California, businesses with annual revenues exceeding $26,625,000 (as of 2026) must comply with the CCPA/CPRA. These laws require clear notices before using automated decision-making tools, giving candidates the right to opt out of profiling for employment decisions. Notices must outline the AI's purpose, the data being used, and the candidate's right to request human review.

The EEOC enforces accountability for employers under Title VII, the ADA, and the ADEA, even when third-party AI tools are used. According to the EEOC, "Delegation to an algorithm does not transfer legal responsibility." The Four-Fifths Rule remains a critical benchmark for detecting potential bias.

OFCCP Rules for Federal Contractors

Federal contractors hiring technical talent must adhere to OFCCP recordkeeping rules under 41 CFR 60-1.12. This includes retaining hiring records, such as AI inputs and logs, for a minimum of two years. Contractors must also document outreach efforts and maintain applicant flow data to demonstrate diversity in their technical recruitment practices.

State and Local AI Hiring Laws

State-specific laws add further complexity. For example, NYC Local Law 144 requires an annual independent bias audit and mandates notice at least 10 business days before using automated employment decision tools (AEDTs). Non-compliance can result in daily fines ranging from $500 to $1,500 per violation.

Illinois' Artificial Intelligence Video Interview Act (AIVIA) requires candidate consent for AI-driven video analysis. Amendments to the Illinois Human Rights Act, effective January 1, 2026, also give candidates the right to sue employers directly for AI discrimination, bypassing the need to file a government complaint - making Illinois an outlier in this regard.

Colorado's SB 24-205, effective June 30, 2026, mandates annual impact assessments for high-risk AI systems and requires notices before AI influences hiring decisions. Similarly, California's AB-1018 requires bias impact assessments, along with four-year record retention for AI-related hiring data. The first assessments are due by 2027.

| Regulation | Jurisdiction | Key Requirement | 2026 Status |
|---|---|---|---|
| EU AI Act | European Union | High-risk classification; mandatory oversight | Fully enforceable Aug 2026 |
| NYC LL 144 | New York City | Annual bias audit; 10-business-day notice | Active enforcement |
| AIVIA (Amended) | Illinois | Candidate consent; private right of action | Effective Jan 1, 2026 |
| SB 24-205 | Colorado | Annual impact assessments; risk management | Effective June 30, 2026 |
| AB-1018 | California | Bias impact assessments; 4-year record retention | Initial assessment by 2027 |
| HB 1202 | Maryland | Consent for facial recognition in hiring | Active |

What Technical Recruiters Need to Know Beyond General HR

Technical recruiting presents challenges that go beyond the usual scope of HR compliance. With remote work becoming the norm, 87% of employers were expected to fill at least 40% of roles with international candidates by 2024. This shift introduces a maze of privacy laws and employment regulations that vary across jurisdictions, creating complexities far beyond standard employment law.

The rise of "lean plus AI" hiring models has made every technical hire critical to business operations. Mistakes like misclassifying a developer or failing to verify credentials can result in wage claims, tax penalties, and regulatory scrutiny. To stay compliant, recruiters must follow rigorous protocols for classification, training, and verification.

Classifying Freelancers and Contractors Correctly

A major pitfall in technical recruiting is the misclassification of developers, which can lead to steep penalties related to wages, taxes, and benefits. The Fair Labor Standards Act (FLSA) governs key issues like overtime eligibility and whether a worker is exempt or non-exempt. On top of that, state-specific laws add another layer of complexity. For example, new wage laws were implemented in 22 U.S. states in 2026.

When managing distributed technical teams, compliance hinges on the worker's location - not your company’s headquarters. A remote developer in California faces different overtime rules than one in Texas. To avoid missteps, track each worker’s jurisdiction and apply the correct classification standards. Clear documentation is essential. Factors like control over work hours, who provides tools, and how integrated a worker is into your team all play a role in determining whether they’re classified as a contractor or an employee.

"HR and employment regulations are becoming more complex every year, especially for organizations with growing or distributed workforces." - INFINITI HR

Anti-Harassment Training for Remote Technical Teams

For remote teams, anti-harassment training must meet the specific requirements of each state. These rules dictate how often training should occur, what it must cover, and how it should be documented. Tailoring your programs to meet these local standards is non-negotiable.

It’s also crucial to train technical interviewers on what not to ask during candidate evaluations. Topics like age, religion, national origin, and medical history are off-limits. With the increasing use of AI in hiring, recruiters need to understand system limitations and know when to override algorithmic decisions. Alarmingly, as of 2024, 67% of HR leaders were unaware of the specific AI hiring regulations in their jurisdiction.

"'The vendor said it was unbiased' is not a legal defense." - Michael Lansdowne Hauge, Pertama Partners

Keep comprehensive records of all training sessions and participant completions. These records are your best protection against claims of discrimination or harassment. As hiring regulations tighten, ensuring proper identity verification is another critical step.

I-9 Verification for Remote and International Hires

With the surge in remote work, verifying identity and credentials has become more critical than ever, and remote I-9 verification requires robust systems to confirm both. Technical recruiting is particularly vulnerable to fraud, making thorough verification a must at every stage - from application to offer.

For international hires, recruiters must navigate a patchwork of global privacy laws, including Indonesia's PDP Law and India's DPDP Act. Remote verification tools must comply not only with U.S. requirements but also with the data protection laws of the candidate's home country. Additionally, states like Rhode Island now require new-hire notices to indicate whether an employee is exempt from minimum wage or overtime, while Oregon mandates a written explanation of pay rates and deductions at hire.

To streamline compliance, standardize your onboarding process to include consistent I-9 and international verification steps across all jurisdictions. Use authorized technology for verification where legally permitted, ensuring that your methods align with the laws of both the worker’s location and your company’s headquarters.

How to Avoid Bias in Technical Screening

With compliance standards becoming stricter, creating ethical tech recruitment processes to avoid bias does more than meet legal obligations - it builds trust with candidates. Bias in technical hiring isn't just an ethical issue; it can lead to legal trouble. The Equal Employment Opportunity Commission (EEOC) applies the four-fifths rule to detect potential discrimination: if the selection rate for any protected group is less than 80% of the rate for the group with the highest selection rate, your process could be flagged for disparate impact. As of 2026, technical recruiting faces heightened regulatory oversight, making structured and transparent practices a necessity.
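To make the arithmetic concrete, here is a minimal Python sketch of the four-fifths check. The function names, group labels, and applicant counts are hypothetical and chosen only for illustration; a real audit would use your own applicant flow data and an independent auditor's methodology.

```python
from typing import Dict, List, Tuple


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Divide each group's selection rate by the highest group's rate.

    counts maps a group label to (selected, applicants).
    Groups with zero applicants are skipped.
    """
    rates = {g: sel / total for g, (sel, total) in counts.items() if total > 0}
    if not rates or max(rates.values()) == 0:
        return {}  # nobody was selected, so no ratio can be computed
    top = max(rates.values())
    return {g: rate / top for g, rate in rates.items()}


def flagged_groups(counts: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """Return groups whose impact ratio falls below the four-fifths benchmark."""
    return sorted(g for g, r in impact_ratios(counts).items() if r < threshold)


# Hypothetical numbers, for illustration only.
example = {"group_a": (48, 120), "group_b": (27, 90)}
print(impact_ratios(example))
print(flagged_groups(example))
```

In this made-up example, group_a advances 40% of its applicants and group_b advances 30%, so group_b's impact ratio is 0.75, below the 0.8 benchmark. A flag like this is a prompt for closer review, not a legal conclusion on its own.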

The difficulty lies in the fact that seemingly neutral criteria can still result in discrimination. A 2021 study revealed that algorithmic tools for software developer roles disproportionately highlighted male candidates, even though gender preferences were not explicitly programmed into the system. Similarly, Amazon’s internal recruiting tool - discontinued in 2018 - downgraded resumes with terms like "women's" (e.g., "women's chess club") because it was trained on historically male-dominated applicant data. To reduce these risks, structured and standardized evaluation methods are crucial.

Using Structured Interviews and Standardized Rubrics

Every element of your technical evaluation rubric should directly connect to job performance. Vague criteria like "cultural fit" or "personality traits" are not only hard to validate but also increase the risk of bias. Instead, focus on measurable technical skills, such as debugging, system design, or API integration, that align with the job's requirements.

While AI tools can help rank candidates, human oversight remains critical. Hiring managers should make final decisions and have the authority to override automated recommendations, provided they document their reasoning. Training staff to interpret AI-generated scores, understand confidence intervals, and review borderline cases is essential.
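One way to enforce the "document your reasoning" step is to make the rationale a required field whenever a human decision differs from the AI recommendation. The sketch below is a hypothetical data model, not a feature of any particular ATS; the class, field, and function names are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ReviewDecision:
    candidate_id: str
    ai_recommendation: str  # e.g. "advance" or "reject"
    human_decision: str     # the final call made by the hiring manager
    reviewer: str
    rationale: str
    decided_at: datetime

    @property
    def is_override(self) -> bool:
        return self.human_decision != self.ai_recommendation


def record_decision(candidate_id: str, ai_recommendation: str, human_decision: str,
                    reviewer: str, rationale: str = "") -> ReviewDecision:
    """Create a decision record; overrides without a rationale are refused."""
    if human_decision != ai_recommendation and not rationale.strip():
        raise ValueError("Overriding the AI recommendation requires a documented rationale.")
    return ReviewDecision(
        candidate_id=candidate_id,
        ai_recommendation=ai_recommendation,
        human_decision=human_decision,
        reviewer=reviewer,
        rationale=rationale.strip(),
        decided_at=datetime.now(timezone.utc),  # timestamp in UTC for the audit trail
    )
```

Storing the AI recommendation next to the human decision also makes it easy to report later how often reviewers overrode the tool and why.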

Before launching any new rubric or screening tool, validate it rigorously to ensure it predicts job performance accurately. Run adverse impact tests using historical data to identify potential biases. By early 2024, fewer than 15% of NYC employers using automated hiring tools had published the required bias audit results. Don’t risk non-compliance - NYC Local Law 144 imposes fines ranging from $500 for a first violation to $1,500 for each additional daily violation.

"High overall accuracy in an AI hiring model is meaningless if error rates and selection rates differ sharply across demographic groups. Fairness requires disaggregated analysis, not just a single performance metric." - Pertama Partners

Analyzing selection rates across different demographic groups is critical. A tool might claim 90% overall accuracy but still systematically reject qualified candidates from protected groups. Alongside structured interviews, blind resume reviews can further reduce bias.
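The gap between overall accuracy and per-group outcomes is easier to see with numbers. The following Python sketch uses entirely synthetic records (group labels, counts, and function names are assumptions for illustration) to show a tool that is 90% accurate overall while wrongly rejecting most qualified candidates in one group.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Each record: (group, is_qualified, advanced_by_tool)
Record = Tuple[str, bool, bool]


def overall_accuracy(records: List[Record]) -> float:
    """Share of candidates where the tool's outcome matched their qualification."""
    if not records:
        raise ValueError("no records to evaluate")
    correct = sum(1 for _, qualified, advanced in records if qualified == advanced)
    return correct / len(records)


def false_rejection_rate_by_group(records: List[Record]) -> Dict[str, float]:
    """For each group, the share of qualified candidates the tool rejected."""
    qualified_total: Dict[str, int] = defaultdict(int)
    qualified_rejected: Dict[str, int] = defaultdict(int)
    for group, qualified, advanced in records:
        if qualified:
            qualified_total[group] += 1
            if not advanced:
                qualified_rejected[group] += 1
    return {g: qualified_rejected[g] / n for g, n in qualified_total.items()}


# Synthetic data: 80 candidates in group_a, 20 in group_b.
records: List[Record] = (
    [("group_a", True, True)] * 40
    + [("group_a", False, False)] * 38
    + [("group_a", False, True)] * 2
    + [("group_b", True, True)] * 4
    + [("group_b", True, False)] * 8
    + [("group_b", False, False)] * 8
)

print(overall_accuracy(records))
print(false_rejection_rate_by_group(records))
```

Here 90 of 100 outcomes are "correct", yet 8 of the 12 qualified group_b candidates are rejected while no qualified group_a candidate is. A single headline metric would hide that difference.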

Implementing Blind Resume Review

Blind resume reviews remove identifying details - such as names, photos, graduation years, schools, ZIP codes, and employment gaps - that may unintentionally signal protected characteristics like race, age, or socioeconomic background.

Configure your Applicant Tracking System (ATS) to automatically redact this information before resumes are reviewed. This ensures that evaluations focus solely on job-relevant qualifications like programming expertise, years of experience, technical projects, and measurable accomplishments.
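If your ATS does not offer built-in redaction, the same idea can be approximated on exported candidate records before they reach reviewers. The sketch below assumes a simple dictionary per candidate with hypothetical field names; a real ATS export will have its own schema, and free-text resume bodies would need separate handling.

```python
from typing import Any, Dict

# Hypothetical field names for the identifying details listed above.
REDACTED_FIELDS = frozenset({
    "name",
    "photo_url",
    "graduation_year",
    "school",
    "zip_code",
    "employment_gaps",
})


def redact_for_review(candidate: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the record without identifying fields.

    The original record is left untouched so it can still be retained
    for audit and recordkeeping purposes.
    """
    return {key: value for key, value in candidate.items() if key not in REDACTED_FIELDS}


candidate = {
    "name": "Example Candidate",
    "zip_code": "00000",
    "graduation_year": 2012,
    "skills": ["python", "system design"],
    "years_experience": 9,
}
print(redact_for_review(candidate))
```

Using an explicit list of removed fields keeps the redaction policy reviewable: anyone auditing the process can see exactly which details reviewers never saw.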

Avoid using ZIP codes in evaluations, as they often correlate with race and income levels. Similarly, refrain from giving undue weight to specific universities unless you can prove that graduates from those schools consistently outperform others in your technical roles. Be cautious about penalizing employment gaps, as this can unfairly disadvantage candidates with disabilities or caregiving responsibilities.

For roles based in NYC, inform candidates at least 10 business days before using automated screening tools and explain which job characteristics the tools assess. Also, provide alternative evaluation methods for candidates who request them.

To ensure fairness, establish review checkpoints. For instance, have a human review a random 10% of AI-rejected candidates, require hiring managers to independently assess all recommended candidates, and conduct annual compliance audits. Additionally, demand independent bias audit reports from all third-party tools your company uses.
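The random 10% checkpoint can be sketched in a few lines of Python. The function name and candidate ID format are hypothetical; the optional seed is included only so a sample can be reproduced later if someone asks how it was drawn.

```python
import math
import random
from typing import List, Optional, Sequence


def sample_for_human_review(rejected_ids: Sequence[str],
                            fraction: float = 0.10,
                            seed: Optional[int] = None) -> List[str]:
    """Pick a random subset of AI-rejected candidates for human review.

    Rounds up, so at least one candidate is reviewed whenever any were rejected.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not rejected_ids:
        return []
    k = max(1, math.ceil(len(rejected_ids) * fraction))
    rng = random.Random(seed)  # seeded generator keeps the draw reproducible
    return rng.sample(list(rejected_ids), k)


rejected = [f"cand-{i:03d}" for i in range(50)]
print(sample_for_human_review(rejected, seed=2026))
```

Sampling without replacement through `random.Random.sample` means no candidate is picked twice, and recording the seed alongside the batch gives you a simple paper trail for the checkpoint.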

Managing Candidate Data Under Privacy Laws

Handling candidate data isn’t just about storing resumes - it’s about navigating a maze of legal responsibilities. For technical recruiters, this means juggling retention rules, deletion timelines, and consent requirements to avoid hefty penalties. In 2024 alone, AI-powered hiring tools processed over 30 million applications in the U.S., and 99% of Fortune 500 companies now use AI to screen resumes. With this level of activity, staying compliant with data privacy laws isn’t optional - it’s essential.

The challenge? Privacy laws vary widely. For example, GDPR and CCPA emphasize data minimization and deletion rights, while federal rules enforced by the EEOC and OFCCP require employers to retain application records for one to three years. This creates a tricky balancing act: respecting a candidate’s right to be forgotten while meeting government audit requirements. To stay on track, configure systems to flag when data can be purged legally, while also adhering to retention mandates. Let’s break down retention and deletion rules in key jurisdictions.

Data Retention and Deletion Requirements

California raised the stakes in October 2025 by extending the required retention period for Automated Decision System (ADS) data from two years to four years. This includes everything from resumes and assessments to rankings and bias testing results. If you’re hiring in California or based there, your ATS needs to store this data for the full four years.

South Africa’s POPIA law takes a stricter approach: resumes cannot be stored indefinitely for AI training. Once the specific job a resume was collected for is filled, the data must be destroyed. Want to keep resumes for future roles? You’ll need explicit permission from each candidate. Meanwhile, New York City’s Local Law 144 requires bias audit records to be retained for three years.

Automating deletion alerts in your HR software can help you stay compliant. Without these, you risk deleting records too early - triggering EEOC audits - or keeping data too long, which could violate GDPR or CCPA. Also, ensure deletion requests are documented and processed within the legal timeframe (usually 30–45 days) in jurisdictions with “right to be forgotten” protections. Aligning these processes with consent tracking is just as important.
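A deletion alert boils down to a date calculation: a record can be purged only after the longest retention period that applies to it has passed. The Python sketch below uses the retention figures quoted in this article as sample values; the rule keys and function names are hypothetical, and the actual periods that apply to your records should be confirmed with counsel.

```python
from datetime import date
from typing import Dict, Sequence

# Retention periods in years, taken from the figures quoted in this article.
# Treat these as sample configuration, not legal advice.
RETENTION_YEARS: Dict[str, int] = {
    "california_ads": 4,
    "nyc_bias_audit": 3,
    "ofccp": 2,
}


def add_years(start: date, years: int) -> date:
    """Add whole years, mapping Feb 29 to Feb 28 in non-leap years."""
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        return start.replace(year=start.year + years, day=28)


def earliest_purge_date(created: date, rules: Sequence[str]) -> date:
    """Latest retention deadline across every rule that applies to the record."""
    if not rules:
        return created  # no retention rule applies, so no hold
    return max(add_years(created, RETENTION_YEARS[rule]) for rule in rules)


def can_purge(created: date, rules: Sequence[str], today: date) -> bool:
    """True once every applicable retention period has elapsed."""
    return today >= earliest_purge_date(created, rules)


record_created = date(2026, 1, 15)
print(earliest_purge_date(record_created, ["ofccp", "california_ads"]))
```

Taking the maximum across rules reflects the balancing act described above: when a deletion request arrives before the purge date, the request can be logged and the record flagged for deletion as soon as the hold expires.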

Getting and Tracking Candidate Consent

Managing candidate consent is a critical piece of the puzzle under GDPR, CCPA, and other state laws. For instance, NYC’s Local Law 144 requires employers to notify candidates at least 10 business days before using automated hiring tools.
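Counting business days by hand is error-prone, so it can help to compute the earliest date an automated tool may be used after notice goes out. The Python sketch below is a simplified illustration: it skips weekends and any holidays you pass in, the function name is hypothetical, and how the notice period is counted in practice is a question for counsel.

```python
from datetime import date, timedelta
from typing import Iterable


def add_business_days(start: date, days: int, holidays: Iterable[date] = ()) -> date:
    """Return the date that falls `days` business days after `start`.

    Saturdays, Sundays, and any dates in `holidays` are not counted.
    """
    holiday_set = set(holidays)
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5 and current not in holiday_set:
            remaining -= 1
    return current


notice_sent = date(2026, 3, 2)  # a Monday
print(add_business_days(notice_sent, 10))  # 2026-03-16
```

With notice sent on Monday, March 2, 2026 and no holidays, the tenth business day is Monday, March 16, 2026. Passing a holiday calendar pushes the date out accordingly.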

California’s Automated Decision-Making Technology (ADMT) regulations, issued by the California Privacy Protection Agency (CPPA) and effective January 1, 2026, add another layer: businesses must notify candidates before using ADMT and offer an opt-out option. A clear way to do this is by adding an opt-out feature in your application portal, letting candidates request human review instead of automated assessments. In Illinois, explicit consent is mandatory for AI video interview analysis, and those videos must be deleted within 30 days of a candidate’s request.

Sensitive data should be isolated from standard personnel files. For example, California requires demographic data collected for pay reporting to be stored separately. When conducting background checks, the Fair Credit Reporting Act (FCRA) mandates a standalone written disclosure and authorization. To stay audit-ready, track consent details - including timestamps, the collection method (checkbox, signature, etc.), and the scope of permission - within your ATS.
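The consent details listed above (timestamp, collection method, scope) map naturally onto a small record type. The following Python sketch is a hypothetical data model rather than the schema of any specific ATS; the class name, field names, and purpose labels are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ConsentRecord:
    candidate_id: str
    granted_at: datetime                 # when consent was captured
    method: str                          # e.g. "checkbox" or "signature"
    scope: FrozenSet[str]                # purposes the candidate agreed to
    withdrawn_at: Optional[datetime] = None

    def covers(self, purpose: str, at: datetime) -> bool:
        """True if consent for `purpose` was in force at time `at`."""
        if purpose not in self.scope:
            return False
        if at < self.granted_at:
            return False
        return self.withdrawn_at is None or at < self.withdrawn_at


consent = ConsentRecord(
    candidate_id="cand-001",
    granted_at=datetime(2026, 1, 10, tzinfo=timezone.utc),
    method="checkbox",
    scope=frozenset({"ai_video_analysis"}),
)
print(consent.covers("ai_video_analysis", datetime(2026, 1, 11, tzinfo=timezone.utc)))
```

Keeping scope as an explicit set of purposes means consent given for one use, such as AI video analysis, is never silently treated as consent for another, such as automated resume screening.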

| Jurisdiction | Retention Requirement | Key Consent Obligation |
|---|---|---|
| California | 4 years for ADS data | ADMT opt-out rights; advance notice |
| NYC | 3 years for bias audit records | 10-business-day advance notice for AI use |
| Illinois | Delete videos within 30 days of request | Explicit consent for AI video analysis |
| South Africa | Destroy data once vacancy is filled | Consent for automated processing |
| OFCCP (Federal) | 2–3 years depending on company size | Outreach and applicant flow logs |

"Where you're using AI technology, you can consistently have documentation on how the tool worked the way it should have worked versus relying on a hiring manager to take notes."

– Naz Scott, Chief Legal Officer, HireVue

Review AI provider contracts carefully to ensure they take responsibility for discriminatory outcomes and provide independent bias audit results. Additionally, privacy frameworks often require notifying authorities within 72 hours of a data breach. Mishandling sensitive employee data, such as Social Security numbers, can result in federal fines of up to $1.5 million.

AI Hiring Regulations and Automated Screening Tools

Automated screening tools have become a staple in technical recruiting, with 62% of employers planning to use AI for most or all hiring stages by 2026. However, evolving regulations are reshaping how these tools can be used. Laws like the EU AI Act, NYC Local Law 144, and Illinois amendments now demand annual bias audits, notifications to candidates, and clear human oversight.

Currently, only 29% of companies ensure human oversight on all AI-generated rejection decisions. Research from 2025 revealed a troubling statistic: AI resume screening tools favored names associated with white candidates in 85.1% of cases. As regulators crack down on such biases, non-compliance can lead to hefty fines - NYC penalties range from $500 to $1,500 per violation. Below is a breakdown of the key regulatory frameworks shaping AI-driven hiring practices.

EU AI Act Classifications for Hiring Tools

The EU AI Act, set to fully take effect in August 2026, classifies AI systems used for recruitment, CV screening, and candidate evaluations as "high-risk". This classification imposes several requirements:

- Automated tools must undergo strict conformity assessments.

- Human oversight is mandatory, with the ability to override AI decisions.

- All high-risk systems must be registered in the EU database.

If you're hiring developers in or from the EU, your screening tools must comply with these regulations, no matter where your company is based.

"Biased AI tools are not eliminating human bias - they are merely laundering it through software."

– ACLU

Amazon's decision in 2018 to scrap an AI recruiting tool it had been developing since 2014, after the tool showed bias against resumes containing the word "women's", underscores the risks of opaque automated systems. The EU AI Act aims to prevent such outcomes by prioritizing transparency and accountability.

NYC Local Law 144 Bias Audit Rules

While the EU AI Act takes a broad approach to regulating high-risk AI systems, NYC Local Law 144 focuses on audit transparency and candidate rights. This law applies to any Automated Employment Decision Tool (AEDT) used for hiring or promotions in New York City, including remote roles linked to NYC offices. It establishes three main requirements:

- Independent Bias Audits: Companies must conduct annual bias audits through neutral, independent auditors. These audits assess disparate impacts based on sex, race, and ethnicity, using the "four-fifths rule" to evaluate selection rates.

- Public Transparency: Employers must post a summary of the most recent audit results on their careers page. This summary, including impact ratios and the tool's distribution date, must remain accessible for six months after the tool is retired.

- Candidate Notification: Candidates must be informed at least 10 business days before an AEDT is used. Notifications should outline the job traits being assessed and explain the candidate's right to request an alternative process.

A December 2025 audit by the New York State Comptroller revealed that 75% of test calls to the NYC 311 hotline about AEDT issues were misrouted. In response, the NYC Department of Consumer and Worker Protection has pledged stricter enforcement in 2026.

"The Comptroller's criticism may intensify pressure on the DCWP to increase and improve its enforcement activities. Employers using AEDTs may face a more stringent approach to enforcement."

– DLA Piper

In one notable case, iTutorGroup settled an EEOC lawsuit for $365,000 in September 2023 after its AI recruitment system rejected over 200 qualified applicants. This settlement highlights the risks of non-compliance and the importance of NYC's bias audit rules.

Illinois AIVIA Notification Requirements

Illinois led the way in AI hiring regulation with the 2020 Artificial Intelligence Video Interview Act (AIVIA). Under this law, recruiters must notify candidates before video interviews that AI will be used to analyze their responses. The notice must explain how the AI works and specify which traits - like facial expressions or tone of voice - it evaluates. Additionally, explicit written consent must be obtained before proceeding.

Candidates also have the right to request deletion of their video recordings, which must be erased within 30 days of a written request. Starting January 1, 2026, amendments to the Illinois Human Rights Act will expand these requirements:

- Recruiters must notify candidates whenever AI is used as a "substantial factor" in hiring or promotion decisions, not just during video interviews.

- AI-driven discrimination - intentional or not - is banned, and candidates can sue employers directly for related violations.

In a notable case, CVS Health settled a lawsuit in Massachusetts for $365,000 after plaintiffs alleged that HireVue's AI-generated "employability scores" acted like a prohibited lie detector test.

"Delegation to an algorithm does not transfer legal responsibility."

– EEOC Technical Assistance Document

To stay compliant, companies should implement standalone consent workflows for AI screening, ensure vendors provide clear explanations of their technology, and establish protocols for deleting candidate data within 30 days of a request. Most importantly, a human recruiter should review AI-generated recommendations before making any final decisions.

| Regulation | Key Requirements | Penalties/Enforcement |
|---|---|---|
| EU AI Act | High-risk classification; conformity assessment; mandatory human oversight; registration in EU database | Potential market ban |
| NYC Local Law 144 | Annual independent bias audit; 10-business-day candidate notice; public disclosure of audit results | Fines ranging from $500 to $1,500 per violation |
| Illinois AIVIA | Standalone consent; clear explanation of AI mechanics; 30-day data deletion | Private right of action (effective Jan 1, 2026) |

Technical Hiring Compliance Checklist

Review your hiring processes with this checklist to stay aligned with evolving multi-state regulations and federal guidelines. Drawing from earlier regulatory discussions, these steps offer practical ways to maintain compliance in technical hiring. According to a 2024 SHRM survey, 67% of HR leaders were unaware of the specific AI hiring regulations in their jurisdiction.

Job Posting and Salary Transparency

First, identify the jurisdiction of both your job postings and your applicants. Regulations like NYC Local Law 144 and Colorado's SB 24-205 apply based on where the candidate is located, not just your company headquarters. With changes to wage requirements and minimum wages rolling out across 22 states in 2026, it's crucial to include the required salary details for each location in your job postings. Clear postings also matter to candidates: developers favor recruitment processes that prioritize clarity and trust.

For companies operating in the EU, prepare for the EU Pay Transparency Directive, which takes effect in June 2026. In states like Illinois and Colorado, you must notify candidates before using AI in hiring decisions and, in some cases, secure their written consent. Additionally, offer candidates the option to opt out of automated screening processes.

These measures establish a baseline for fair and transparent applicant evaluations.

Screening Tool Bias Audits

Next, ensure your screening tools provide unbiased results. Hire an independent auditor to examine these tools for potential disparities. Use the four-fifths rule as a benchmark: the selection rate for protected groups should be at least 80% of the highest selection rate.

To comply with NYC regulations, make bias audit summaries accessible on your company website or within job postings. Before deploying AI tools, confirm that vendors supply a completed bias audit and a compliant notification template. Screening tools should exclude factors like ZIP codes, graduation years, or names to avoid bias. Under NYC Local Law 144, non-compliance penalties range from $500 to $1,500 per violation per day.

Consent and Data Deletion Checks

Lastly, ensure candidate data protocols meet legal consent and deletion standards. Conduct an inventory of all systems used to evaluate candidates and confirm each includes proper consent mechanisms. Create a notice that explains what the AI system does, its purpose, and the types of data it processes to meet transparency requirements across jurisdictions.

Handle data deletion requests within the 30-day legal timeframe. While candidates have the right to request deletion, this must be balanced with legal retention requirements from California and the EEOC. Vendor agreements should include clauses guaranteeing transparency, ongoing bias testing, and clear procedures for managing data deletion requests.

How daily.dev Recruiter Handles Compliance

daily.dev Recruiter is built to align with 2026 compliance standards through a developer-first, consent-based platform. Here's how it works: when developers join the platform, they explicitly opt in and define their job preferences. Before any introduction happens, both the developer and the employer must confirm their interest. This ensures documented consent that meets GDPR's explicit consent requirements and CCPA's opt-in rules.

The platform also addresses the Illinois Artificial Intelligence Video Interview Act (AIVIA), which mandates that candidate consent must be "explicit and separate from general application consent". Developers are fully informed about which companies want to connect with them and for which roles, giving them the choice to accept or decline each introduction. This explicit consent process forms the foundation of the entire hiring journey.

By requiring mutual interest before any screening, the platform reduces regulatory risks. It provides clear details about job qualifications and the data sources used in matching decisions, meeting transparency standards like those outlined in NYC Local Law 144.

daily.dev Recruiter’s community-first approach fosters trust, something technical candidates increasingly expect. This shift is necessary because many current hiring processes are failing developers through vague roles and a lack of transparency. Research from 2026 indicates that candidates perceive AI-based evaluations as fairer than human assessments when the system is transparent and explainable. By showing developers how their profiles align with job requirements and giving them control at every step, the platform transforms compliance into a strength.

This consent-driven framework also adheres to global privacy laws, including India’s DPDP Act and South Africa’s POPIA, without requiring separate workflows for each jurisdiction. As a result, recruiters gain access to engaged talent while seamlessly meeting the explicit notice and consent standards required by current regulations. These efforts integrate smoothly with the broader compliance measures already in place.

Conclusion

In today's world of technical recruiting, staying compliant isn't just about avoiding discrimination - it's about actively demonstrating fairness. This means embracing practices like independent bias audits, securing explicit candidate consent, and providing transparent salary ranges.

The stakes are high. Non-compliance can lead to hefty fines and severe penalties. For instance, New York City enforces fines ranging from $500 to $1,500 per day. The EU AI Act can impose penalties up to €35 million or 7% of global revenue. Meanwhile, in Illinois, candidates now have the right to sue employers for AI-driven discrimination. Beyond these financial risks, candidates expect clarity about how their data is used and how hiring decisions are made.

Relying solely on vendor promises isn’t enough. Instead, take proactive steps: request independent third-party bias audits, use human-in-the-loop processes for borderline decisions, disclose salary ranges in job postings (a requirement in over 15 U.S. states), notify candidates at least 10 business days before using AI tools, and ensure there are clear options for those who prefer human review.

Compliance isn't just a legal necessity - it’s a way to build trust with technical talent. Recruiters who treat compliance as a strategic advantage will not only meet legal requirements but also strengthen their talent pipelines. By weaving these measures into every stage of the hiring process, recruiters can earn trust and stand out in the competitive world of tech talent acquisition.

FAQs

Does the EU AI Act apply to U.S. recruiters?

The EU AI Act can apply to U.S.-based recruiters even without an EU office: as noted above, screening tools used to hire developers in or from the EU must comply no matter where the company is based. Recruiters who hire only within the U.S. are generally not directly covered, but companies that operate globally or use AI tools developed in the EU should still familiarize themselves with the Act's regulations. Beginning August 2, 2026, AI systems classified as high-risk and used for employment decisions will need to meet stringent compliance standards under this legislation.

What should we keep for AI hiring audits?

Maintaining thorough records is crucial when it comes to bias audits, impact assessments, candidate transparency efforts, vendor due diligence, and human oversight. Keeping these records updated ensures you're meeting the requirements of regulations such as NYC Local Law 144, the Illinois AI law, and the EU AI Act. These laws emphasize the importance of bias testing, evaluating potential impacts, and ensuring transparency in AI-driven hiring tools. Regular monitoring helps stay aligned with these standards and demonstrates accountability.

How do we prove human oversight of AI?

Proving that humans oversee AI in hiring means demonstrating that people actively review and validate what the AI produces. This can involve steps like documenting how AI results are checked against standardized rubrics, performing blind reviews to eliminate bias, and keeping detailed audit trails of decisions. Regulations, such as EEOC guidelines and various state laws, often demand clear policies that ensure human involvement, transparency in decision-making, and the ability for candidates to challenge AI-based outcomes.